🗞️ Google brought AI agents into the planet’s biggest application, Google Maps.

Also: OpenAI published how their Responses API works, Jensen Huang’s “5-layer cake” framework of AI, and Nemotron 3 Super, a 120B param open model.

Read time: 9 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (13-March-2026):

🗞️ Google brought AI agents into the planet’s biggest application, Google Maps.

🗞️ Today’s Sponsor: “The Backend for Agentic Development” just launched InsForge 2.0

🗞️ OpenAI published how their Responses API works by putting agents into a secure and managed computer space.

🗞️ Jensen Huang himself just wrote a great piece on LinkedIn and also published a detailed blog.

🗞️ NVIDIA open-sourced Nemotron 3 Super, a 120B param open model built to fix the massive bottlenecks slowing down autonomous AI agents.

🗞️ There’s a software engineering hiring boom in some targeted areas, possibly due to the famous “Jevons Paradox.”

🗞️ Google brought AI agents into the planet’s biggest application, Google Maps.

Launched today across the United States and India.

This fundamentally positions an AI model as the primary gatekeeper between everyday consumers and the local economy. Users can ask complex questions like how to find a phone charging spot without waiting in a long line.

Before this update, the app required exact names or basic categories to find places. Now, the system understands the context of a full sentence and cross-references it with a database of over 300M businesses and 500M user reviews. It filters these massive datasets in real time based on personal search history to present a custom itinerary.

This is a massive shift because a single algorithm now actively decides which local shops get seen by 2B users. It essentially turns a neutral map into an active recommendation engine that could dictate the financial success of physical stores.

📌 What’s new

So the old Google Maps just gave you a flat map with a basic blue line and a voice telling you to turn right in 500 feet. This meant you often had to guess exactly which lane you needed to be in or what the upcoming intersection actually looked like in the real world.

The new update introduces Immersive Navigation, which uses AI to completely change what you see on your screen while driving. It constantly analyzes fresh Street View imagery to build a vivid 3D view of the exact buildings, overpasses, and terrain right outside your car window.

Instead of just a basic blue line, the screen now highlights the actual lanes, crosswalks, and traffic lights ahead of you. This helps you perfectly prepare for a tricky lane change or a confusing highway merge way before you actually get there.

The voice guidance also sounds much more natural now, acting like a passenger who tells you to go past the current exit and take the next one. It even processes over 5M traffic updates every second to clearly explain why an alternate route might take longer but have less traffic.

When you finally reach your destination, the map will specifically highlight the front door of the building and point out the closest parking spots. This entire upgrade takes the stressful guesswork out of driving because your phone screen finally matches the physical layout of the road, though relying so heavily on real-time 3D rendering might drain your phone battery much faster.

Ask Maps is currently rolling out to users in India and the US on both Android and iOS devices.

But the new 3D Immersive Navigation feature is only launching in the US today, and it will expand to other areas over the coming months. This massive AI upgrade is completely free for regular users right out of the box. Google wants to push this Gemini-powered tool to 2B people as quickly as possible to change how we all find local places.

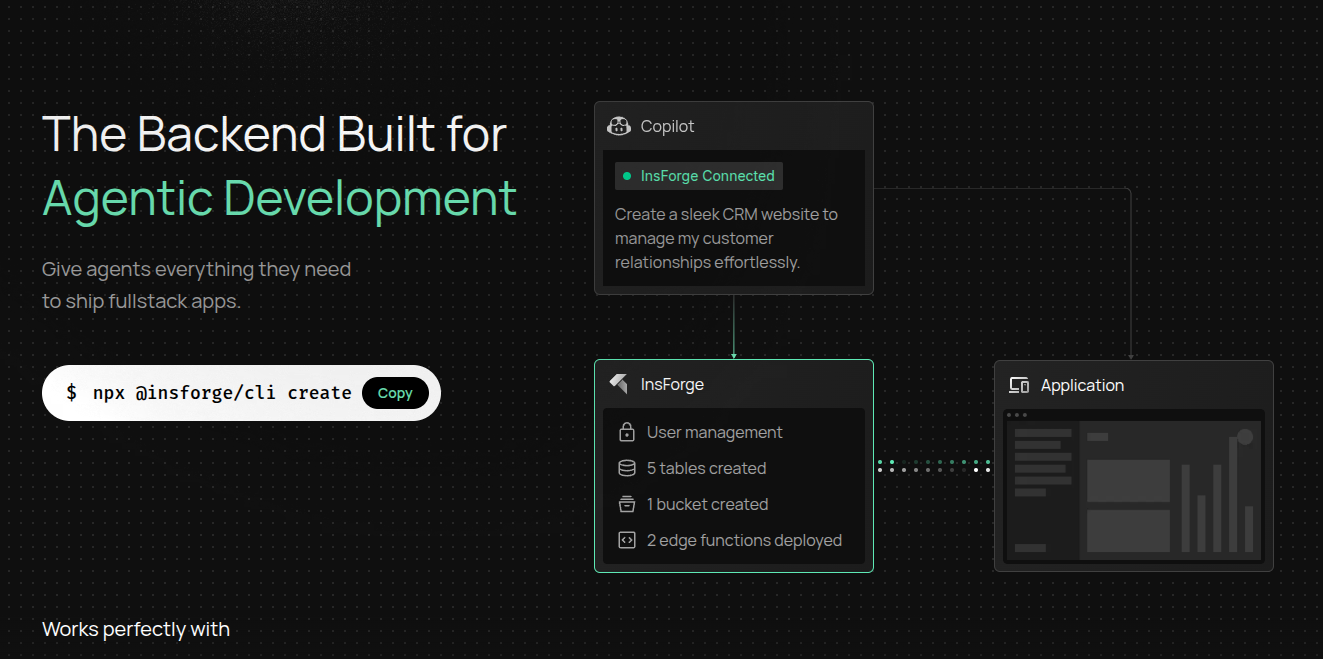

🗞️ Today’s Sponsor: “The Backend for Agentic Development” just launched InsForge 2.0

The bottleneck in software development is shifting from writing boilerplate code to simply defining accurate application logic.

BUT, AI coding agents still struggle to build complete applications because backends expect a human to read the docs or inspect a visual interface to understand how the database tables relate to each other.

Newly launched InsForge 2.0 is the backend specifically built for agentic engineering. Its GitHub repo already has 3.6K+ GitHub stars ⭐️. Do check it out, they definitely deserve a star.

The platform gives agents everything they need to ship fullstack applications.

A dedicated Postgres database so you avoid any vendor lock-in. Instant deployments because the Vercel integration pushes your code live from 1 prompt.

Includes built-in real-time features using a WebSocket pipeline for event-driven messaging. Your AI agents connect directly to their remote server without requiring any local infrastructure setup.

Their CLI automatically downloads necessary skills so you can start building without manual configuration.

Their open-source backend (3.6K+ GitHub stars ⭐️) provides databases, authentication, storage, and edge functions through a semantic layer that agents easily understand.

The agent sets up the database schema, creates the React frontend, and deploys the whole thing live to the internet. It works so well because the Model Context Protocol layer is built directly into their platform from the very start.

Traditional backends bolt this layer on afterward, which forces the agent to waste time and compute guessing how the database is structured. InsForge uses a two-layer context design where the agent receives a global map first.

The agent then identifies the target table and receives the full local context including schema, row level security policies, and foreign keys. The backend tells the agent what to do next instead of making the agent run discovery queries blindly.
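
To make the two-layer idea concrete, here is a minimal Python sketch. It is not InsForge's actual API: the `TableContext` class, the `BACKEND` dictionary, and the `global_map` / `local_context` helpers are all hypothetical names made up for illustration, and the tables are toy data.

```python
from dataclasses import dataclass, field


@dataclass
class TableContext:
    """Everything an agent would need to know about one table (hypothetical shape)."""
    columns: dict[str, str]
    rls_policies: list[str] = field(default_factory=list)
    foreign_keys: dict[str, str] = field(default_factory=dict)


# Toy backend metadata, invented for this example.
BACKEND = {
    "users": TableContext(
        columns={"id": "uuid", "email": "text"},
        rls_policies=["a user can read only their own row"],
    ),
    "orders": TableContext(
        columns={"id": "uuid", "user_id": "uuid", "total": "numeric"},
        rls_policies=["a user can read only their own orders"],
        foreign_keys={"user_id": "users.id"},
    ),
}


def global_map() -> list[str]:
    """Layer 1: a cheap overview listing which tables exist."""
    return sorted(BACKEND)


def local_context(table: str) -> TableContext:
    """Layer 2: full detail, fetched only for the table the agent picked."""
    return BACKEND[table]


tables = global_map()              # the agent sees the overview first
orders = local_context("orders")   # then drills into one target table
```

The point of the split is that the agent pays for detailed schema text only on the table it actually needs, instead of running discovery queries across the whole database.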

The performance difference is massive when looking at the MCPMark version 2 benchmarks. Their system achieved a 42.86% pass accuracy on Claude 3.5 Sonnet compared to just 33.33% for a standard Postgres setup. It also uses 59% fewer tokens because the agent acts with complete information in fewer turns.

Setting this up is very fast and requires zero local infrastructure.

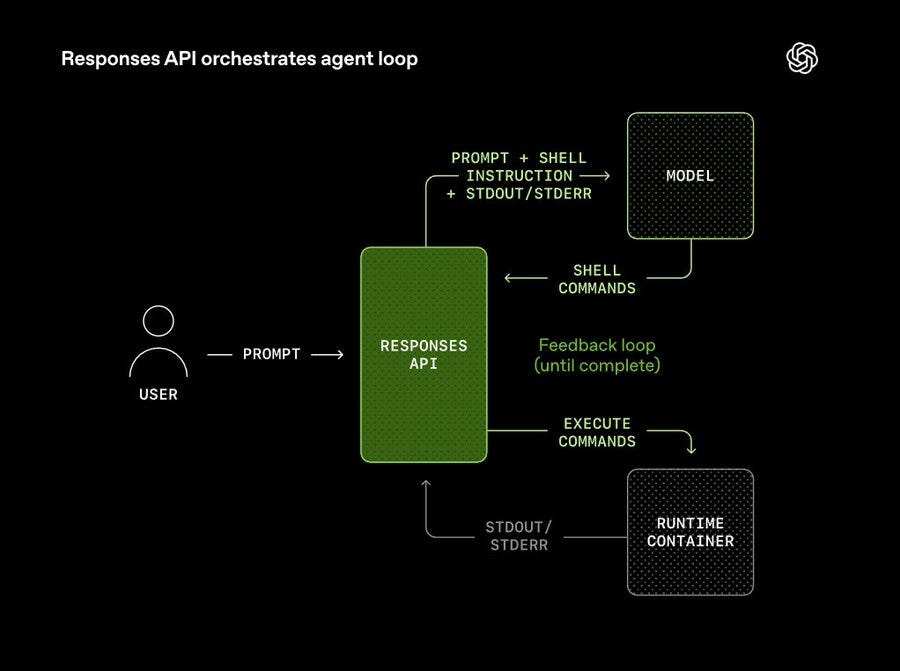

🗞️ OpenAI published how their Responses API works by putting agents into a secure and managed computer space.

The core idea is an agent loop where the model proposes a command, the platform runs it, and the results feed back into the model to decide the next action. They designed a shell tool that lets the model interact with the computer using standard command-line utilities.
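
The loop described above can be sketched in a few lines of Python. This is a toy illustration, not OpenAI's implementation: `agent_loop`, `scripted_model`, and `fake_shell` are hypothetical stand-ins so the example runs without a real model or a real shell.

```python
from typing import Callable, Optional


def agent_loop(
    propose: Callable[[list[str]], Optional[str]],
    execute: Callable[[str], str],
    max_turns: int = 10,
) -> list[str]:
    """Run a propose -> execute -> observe loop until the model stops or turns run out."""
    history: list[str] = []
    for _ in range(max_turns):
        command = propose(history)   # the "model" picks the next command
        if command is None:          # None means the model considers the task done
            break
        result = execute(command)    # the "platform" runs the command
        history.append(f"$ {command}\n{result}")  # the result feeds the next decision
    return history


# Toy stand-ins, assumed for this example only.
def scripted_model(history: list[str]) -> Optional[str]:
    plan = ["ls", "cat notes.txt"]
    return plan[len(history)] if len(history) < len(plan) else None


def fake_shell(command: str) -> str:
    outputs = {"ls": "notes.txt", "cat notes.txt": "hello"}
    return outputs.get(command, "command not found")


transcript = agent_loop(scripted_model, fake_shell)
```

In a real system `propose` would be a model call and `execute` would run inside the sandboxed container, but the control flow stays the same.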

Because raw terminal outputs can get incredibly long, the system enforces an output cap that keeps only the beginning and end of the text to save space. Long-running tasks also fill up the context window of the model very quickly.
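
A head-and-tail output cap like this is easy to picture in code. The sketch below is an assumption about the general technique, not OpenAI's actual logic: the function name `cap_output`, the character-based budget, and the truncation marker are all made up for illustration.

```python
def cap_output(text: str, max_chars: int = 2000,
               marker: str = "\n...[truncated]...\n") -> str:
    """Keep only the beginning and end of long output, dropping the middle."""
    if len(text) <= max_chars:
        return text                      # short output passes through untouched
    head_len = max_chars // 2            # half the budget goes to the start
    tail_len = max_chars - head_len      # the rest goes to the end
    head = text[:head_len]
    tail = text[len(text) - tail_len:]   # explicit index stays correct when tail_len is 0
    return head + marker + tail


capped = cap_output("abcdefghij", max_chars=4, marker="|")
```

Keeping both ends makes sense for terminal output because the start usually shows what ran and the end usually shows the final status or error.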

To fix this problem, the API uses a built-in mechanism called compaction, which automatically squeezes older conversation history into a small, secure summary so the model keeps the critical details without running out of context.
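
Here is one simple way to think about compaction in Python. It is only a sketch under stated assumptions: the real mechanism lives inside the API and is not shown in this newsletter, and `compact`, `keep_recent`, and `naive_summary` are hypothetical names. A real summarizer would be a model call rather than a counter.

```python
from typing import Callable


def compact(
    history: list[str],
    keep_recent: int,
    summarize: Callable[[list[str]], str],
) -> list[str]:
    """Replace older turns with one summary entry and keep recent turns verbatim."""
    if len(history) <= keep_recent:
        return list(history)                  # nothing old enough to compact
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent        # one summary stands in for all older turns


def naive_summary(turns: list[str]) -> str:
    # Placeholder summarizer, assumed for this example only.
    return f"[summary of {len(turns)} earlier turns]"


compacted = compact(["t1", "t2", "t3", "t4", "t5"], keep_recent=2,
                    summarize=naive_summary)
```

The trade-off is the usual one: the more turns you fold into the summary, the more context you free up, and the more detail you risk losing.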

The runtime container serves as a safe working area where the model can organize files and query structured databases like SQLite rather than struggling to read massive raw spreadsheets. Since unrestricted internet access is dangerous, all outbound network traffic runs through a proxy that hides real passwords and only uses placeholder secrets.
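
The placeholder-secret idea can be sketched as follows. Everything here is hypothetical and for illustration only: the `{{API_KEY}}` placeholder format, the `ALLOWED_HOSTS` allowlist, the fake secret value, and the `proxy_prepare` function are assumptions, not OpenAI's real proxy.

```python
from urllib.parse import urlparse

# Hypothetical configuration, held by the proxy and never shown to the model.
ALLOWED_HOSTS = {"api.example.com"}
REAL_SECRETS = {"{{API_KEY}}": "sk-real-123"}


def proxy_prepare(url: str, headers: dict[str, str]) -> dict[str, str]:
    """Swap placeholders for real secrets, but only for allowed destinations."""
    host = urlparse(url).hostname
    if host not in ALLOWED_HOSTS:
        raise PermissionError(f"outbound host not allowed: {host}")
    resolved: dict[str, str] = {}
    for name, value in headers.items():
        for placeholder, secret in REAL_SECRETS.items():
            value = value.replace(placeholder, secret)  # substitution happens at the proxy
        resolved[name] = value
    return resolved


# The model only ever writes the placeholder; the proxy fills in the real value.
sent = proxy_prepare("https://api.example.com/v1",
                     {"Authorization": "Bearer {{API_KEY}}"})
```

Because the real key exists only on the proxy side, a prompt-injected command inside the container has nothing sensitive to leak.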

Developers can also bundle repetitive workflow steps into reusable folders called skills so the model does not have to relearn how to do the same task repeatedly. This architecture shows how OpenAI handles the heavy lifting of state management and infrastructure so developers do not have to build it themselves.

🗞️ Jensen Huang himself just wrote a great piece on LinkedIn and also published a detailed blog.

On the “5-layer cake” framework of AI.

“Because intelligence is produced in real time, the entire computing stack beneath it had to be reinvented — and when you look at AI industrially, it resolves into a five-layer stack: Energy → Chips → Infrastructure → Models → Applications.” His argument is that AI should be understood as essential infrastructure, like electricity and the internet, spanning energy, chips, infrastructure, models, and applications.

The absolute bottom layer of this system is energy because creating artificial intelligence requires burning electricity constantly.


Sitting right above the power supply are the chips which act as the engines that actually turn that electricity into massive mathematical calculations.

You then need massive physical infrastructure like land, heavy cooling pipes, and complex networking cables to connect tens of thousands of these chips together.

This giant hardware base runs the software models that can actually understand things like human language, complex biology, and basic physics.

The very top layer consists of the actual user applications like self-driving cars or medical software where businesses finally make money from all this setup.

Every successful program at the top puts a heavy load on every single layer beneath it all the way down to the original power plant.

🗞️ NVIDIA open-sourced Nemotron 3 Super, a 120B param open model built to fix the massive bottlenecks slowing down autonomous AI agents.

Multi-agent workflows generate 15x more text than normal chats, making traditional models way too expensive and slow to process the information. NVIDIA tackles this by giving the model a 1 million token context window, letting agents keep the entire workflow in memory.

The secret to fixing the speed issue is a hybrid architecture that makes everything run incredibly fast. Despite having 120B total parameters, it uses a clever trick to only turn on 12B of them for any specific task.
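
One common way to activate only a slice of a model's parameters is mixture-of-experts style routing, where a small router picks a few "experts" per input. The toy sketch below assumes that is the kind of trick meant here. It is not NVIDIA's code, and the scores and the `top_k_route` helper are invented for illustration.

```python
def top_k_route(scores: list[float], k: int) -> list[int]:
    """Return the indices of the k highest-scoring experts for one input."""
    if not 0 < k <= len(scores):
        raise ValueError("k must be between 1 and the number of experts")
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])   # only these experts run; the rest stay idle


# Made-up router scores for five experts; only the top two are activated.
chosen = top_k_route([0.1, 0.7, 0.05, 0.9, 0.3], k=2)

# Using the numbers from the text: 12B active out of 120B total parameters.
active_share = 12 / 120
```

With the figures quoted above, roughly a tenth of the parameters do work on any given step, which is where the speed and cost savings come from.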

It mixes Mamba layers to handle memory highly efficiently with traditional Transformer layers to handle the actual deep reasoning. It also predicts multiple future words simultaneously, making the text generation 3x faster than older methods.

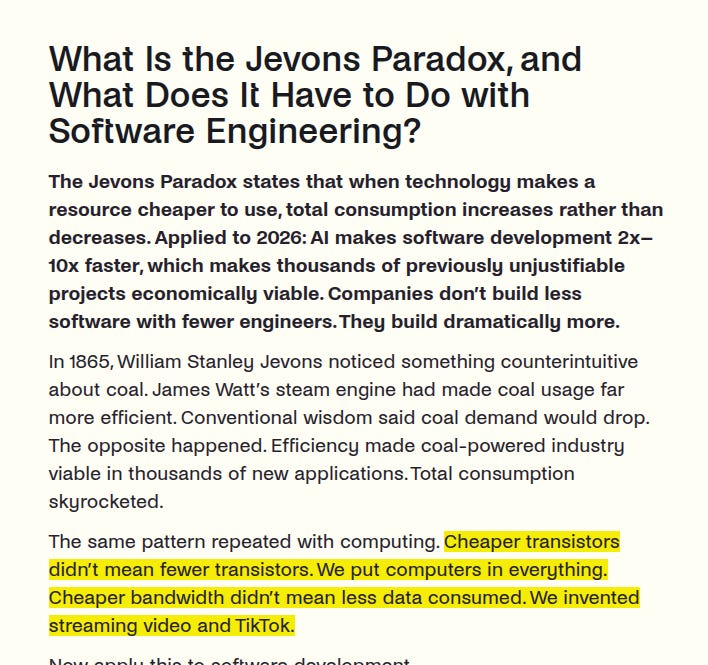

🗞️ There’s a software engineering hiring boom in some targeted areas, possibly due to the famous “Jevons Paradox.”

AI isn’t replacing software engineers; it’s expanding the market via the Jevons Paradox. As development becomes cheaper, demand for software explodes. While AI automates coding, human engineering judgment remains essential.

Success in 2026 now requires combining software fundamentals with AI fluency to manage tenfold productivity gains and complex software architectures.

“The same pattern repeated with computing. Cheaper transistors didn’t mean fewer transistors. We put computers in everything. Cheaper bandwidth didn’t mean less data consumed. We invented streaming video and TikTok.

Now apply this to software development.” When AI makes software 10X cheaper to build, companies don’t immediately fire people, they just build 10X more software!

While the AI writes the basic code, the demand for human engineers to review it and build large systems is higher than ever. “Germany tells the same story from the employer side. The Bitkom 2025 study (855 companies surveyed) found 109,000 unfilled IT positions. Down from 149,000 in 2023, but 79% of companies expect the shortage to worsen. And here’s the Jevons signal: 42% anticipate needing additional IT specialists specifically because of AI adoption.”

The growth in hiring is strictly targeted. Companies aren’t mass-hiring junior developers to write basic code or fill vague headcount quotas anymore. They are actively hunting for senior or “AI-literate engineers” who can use AI tools to multiply their output.

For context, Citadel Securities recently published a graph showing a strange phenomenon: job postings for software engineers are actually seeing a massive spike.

That’s a wrap for today, see you all tomorrow.