🗞️ 1 million context window is now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.

AWS Teams with Cerebras, Too big to fail risk from big AI labs, OpenClaw-RL: Train Any Agent Simply by Talking, AI video processing 19 times faster, Andrej Karpathy open-sourced “autoresearch”

Read time: 10 min

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (14-March-2026):

🗞️ 1 million context window is now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.

🗞️ AWS Teams with Cerebras to Turbocharge AI Inference

🗞️ Too big to fail risk - What happens if OpenAI or Anthropic fail? Reuters published a piece.

🗞️ OpenClaw-RL: Train Any Agent Simply by Talking

🗞️ MIT, NVIDIA, UC Berkeley, Clarifai researchers made AI video processing 19 times faster by just skipping the pixels that never move

🗞️ Andrej Karpathy just open-sourced a tiny tool called “autoresearch” that lets AI automatically improve its own training code.

🗞️ 1 million context window is now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.

Anthropic made the 1M token context window generally available for Claude Opus 4.6 and Sonnet 4.6.

They also got rid of the extra fees for long context in the API, so you pay the same per-token rate regardless of prompt length. You no longer need the beta header in your API calls either.

A single prompt can now include up to 600 images. Previously, when the context filled up, older parts of the conversation had to be compressed, and early details were forgotten.

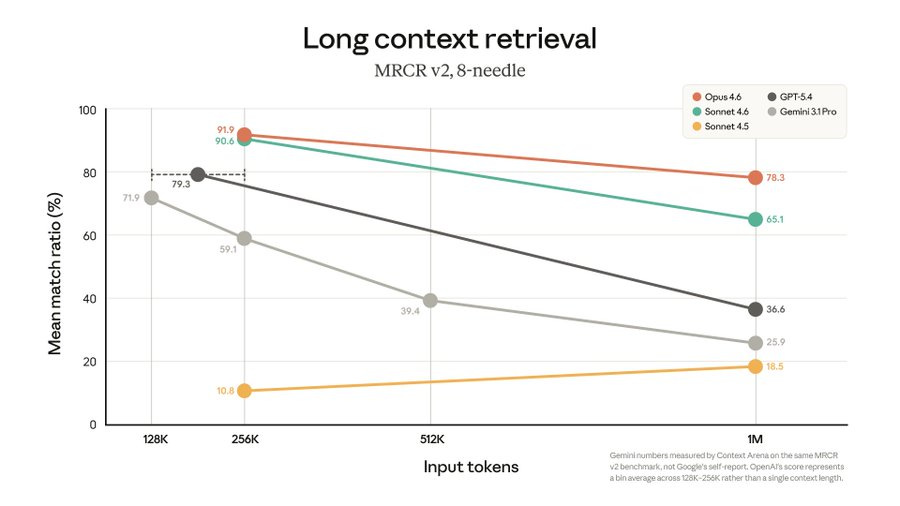

Opus 4.6 scores 78.3% on the MRCR v2 memory test at the full 1M length. This score signifies that even when processing a massive, one-million-token block of text, Opus 4.6 maintains the highest recall accuracy among frontier models for retrieving specific, hidden details.

Sonnet 4.6 scores 68.4% on GraphWalks BFS, measuring how well it logically connects scattered facts.

🗞️ Today’s Sponsor: A new hardware-free palm scan platform is setting a higher standard for online trust against deepfakes - VeryAI

Our feeds are constantly flooded with AI slop, bots and fake accounts. This is killing human-to-human interactions and trust. VeryAI is building to prevent this.

They just raised $10M to build a platform that verifies you are a real human using just your smartphone camera to scan your palm. The funding targets a massive internet security problem.

Hackers and bots easily use artificial intelligence to create fake faces and voices. Traditional security methods like FaceID or two-factor authentication codes struggle to keep up with these deepfakes.

Hackers can easily scrape your photos from social media to create a fake version of your face.

The Core Concepts: VeryAI fixes this by looking at your palm instead of your face. Your palm print has unique structural features that are almost never posted publicly online.

This makes it incredibly difficult for anyone to find a picture of your palm to train an AI model for spoofing. The security numbers are a massive step up from what we currently use.

Scanning a single palm gives a false acceptance rate of 1 in 10M, which is 10 times more accurate than FaceID. If you scan both hands, that accuracy jumps to a near-perfect false acceptance rate of 1 in 100T.
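The two-hand number is what you get by multiplying the single-palm rate by itself, if you treat the two scans as independent checks. A quick sanity check of that arithmetic follows; the independence assumption and the `combined_far` helper are illustrative, not something VeryAI has published.

```python
from fractions import Fraction

def combined_far(single_far: Fraction, scans: int) -> Fraction:
    """False acceptance rate when an impostor must pass `scans` independent checks."""
    return single_far ** scans

one_palm = Fraction(1, 10_000_000)      # 1 in 10M, the single-palm figure above
two_palms = combined_far(one_palm, 2)   # assumes the two palms are independent

print(f"1 in {two_palms.denominator:,}")  # prints "1 in 100,000,000,000,000"
```

That is 1 in 100 trillion, which matches the quoted two-hand figure.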

The system works directly through any standard smartphone camera without special equipment. Privacy is handled by converting the scan into a randomized string of data rather than saving a picture of your hand.

The system generates an irreversible feature representation that cannot be reverted to a palm image or linked to your personal identity. I think shifting biometric verification away from public faces to private palm patterns is a highly practical defense against synthetic identity fraud.

It forces bad actors to find data that simply does not exist on the public internet.

🗞️ AWS Teams with Cerebras to Turbocharge AI Inference

Every AI request involves two steps: a prefill phase to read the prompt and a decode phase to generate the actual answer. Reading a prompt is a calculation-heavy task that runs efficiently on AWS Trainium chips.

Generating the response is much harder because the hardware must access the entire AI model for every single word it creates. Cerebras uses a wafer-scale engine that keeps the entire model on the chip, providing thousands of times more memory bandwidth than a standard GPU.

In this new setup, Trainium handles the initial prompt processing and then sends the stored context data to the Cerebras chip. The Cerebras hardware then takes over to generate the output at speeds reaching 3,000 tokens per second.

This specialized approach allows the system to produce 5x more high-speed tokens than traditional setups that try to do both tasks on one chip. It provides a solid performance boost for AI agents that typically generate 15x more text than regular conversational chatbots.
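To see why faster decode matters most for agents, here is a back-of-the-envelope latency model. Only the 3,000 tokens per second decode rate comes from the numbers above; the prefill rate, the slower single-chip decode rate, and the token counts are made-up placeholders, not measured figures.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     prefill_tps: float, decode_tps: float) -> float:
    """Rough end-to-end time: prefill reads the prompt, decode writes the answer."""
    return prompt_tokens / prefill_tps + output_tokens / decode_tps

# Hypothetical agent-style request: short prompt, long generated output.
PROMPT_TOKENS, OUTPUT_TOKENS = 2_000, 15_000
PREFILL_TPS = 10_000   # placeholder prefill throughput

single_chip = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, PREFILL_TPS,
                               decode_tps=200)     # placeholder decode rate
split_setup = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, PREFILL_TPS,
                               decode_tps=3_000)   # decode rate quoted above

print(f"single chip: {single_chip:.1f}s, split: {split_setup:.1f}s")
```

With long outputs the decode term dominates the total, so speeding up decode moves the needle far more than speeding up prefill.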

🗞️ Too big to fail risk - What happens if OpenAI or Anthropic fail? Reuters published a piece.

These labs are the primary customers for the $650B that tech giants are spending on new data centers and chips this year. Without their relentless demand for computing power, the expansion of new data centers would violently hit the brakes.

It would also leave huge power grid projects and physical infrastructure investments completely abandoned and useless. Banks and private credit lenders who poured roughly $900B into this space would face severe uncertainty and massive potential losses.

While a bigger tech company might swoop in to buy the failed labs for cheap, the overall value of the entire AI industry would instantly crash. Ultimately, the failure of just one of these major labs would not be a simple corporate bankruptcy. It would trigger a massive shockwave that drags down cloud providers, chipmakers, and global infrastructure projects all at once.

🗞️ OpenClaw-RL: Train Any Agent Simply by Talking

The huge deal here is that this method completely removes the traditional need for human workers to manually gather, review, and score massive datasets. AI agents can now use their everyday mistakes to get smarter automatically.

Whenever a person replies to the digital assistant or corrects a mistake, the software treats that response as a direct learning signal. A background program reads these natural follow-up messages and extracts specific text hints about what the model should have done differently.

The software agent simply updates itself in real time during normal use by analyzing how people naturally interact with it. Every time a person corrects an agent or a software test fails, the system receives a valuable clue about how to improve.

----

Think about a student looking at their final grade and throwing the paper away without reading the teacher’s helpful notes. Current Reinforcement Learning systems do the exact same thing.

Current models throw this natural feedback away because they only care about whether the final outcome was a success or a failure. OpenClaw-RL fixes this by grabbing 2 specific signals from every single interaction.

First, it looks at evaluative signals to see if the action worked. If a user asks the same question again, they are probably unhappy. If a test passes, it is a success. These become simple numerical rewards using a Process Reward Model judge.

Second, it gathers directive signals to figure out how the action needs to change. User corrections and error logs offer direct guidance. These become word-level supervision using a technique called Hindsight-Guided On-Policy Distillation.
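A toy sketch of how one interaction could be split into those two signals. Everything in it is hypothetical: the `Interaction` fields, the keyword heuristics, and the reward values are simple stand-ins for the learned Process Reward Model judge and the hint-extraction step, not OpenClaw-RL's actual code.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Interaction:
    user_followup: str                    # what the user said next
    previous_question: str                # the question the agent just answered
    test_passed: Optional[bool] = None    # None when no test was run

def evaluative_signal(x: Interaction) -> float:
    """Scalar reward: did the action work? (stand-in for a PRM judge)"""
    if x.test_passed is not None:
        return 1.0 if x.test_passed else -1.0
    # A repeated question suggests the user is unhappy with the answer.
    if x.user_followup.strip().lower() == x.previous_question.strip().lower():
        return -1.0
    return 0.0

def directive_signal(x: Interaction) -> Optional[str]:
    """Text hint: how should the action change? (stand-in for hint extraction)"""
    markers = ("instead", "should", "use ")
    text = x.user_followup.lower()
    return x.user_followup if any(m in text for m in markers) else None

turn = Interaction(
    user_followup="Use pytest instead of unittest.",
    previous_question="How do I run the tests?",
    test_passed=False,
)
print(evaluative_signal(turn), directive_signal(turn))
```

The first function yields a number a reward-based update could consume; the second yields text that could serve as word-level guidance.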

Personal chats, terminal commands, Graphical User Interface clicks, and software tasks all create these reaction signals. A single policy can learn from all of them at the same time.

It runs the training process in the background so the model never has to pause its normal tasks to learn. By treating standard deployment as a continuous learning environment, the model constantly adapts to individual user preferences without any manual data labeling.

🗞️ MIT, NVIDIA, UC Berkeley, Clarifai researchers made AI video processing 19 times faster by just skipping the pixels that never move

Attend Before Attention: Efficient and Scalable Video Understanding via Autoregressive Gazing

Current visual AI models struggle with long or high-quality videos because they waste time processing every single pixel equally. This new tool sits in front of the main AI and acts like a smart filter that only picks out the patches of the video where things are actually moving or changing.

It uses different zoom levels to grab fine details when necessary but completely ignores large boring areas like a blank wall. By testing this on standard video tasks, the authors found they could throw away up to 99% of the video data without losing the plot. This massive reduction speeds up the whole system by 19 times, finally allowing standard models to easily understand a full 5 minute video in stunning 4K resolution.
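The basic intuition, keep only the patches that change between frames, can be shown with plain frame differencing. This is a deliberately simpler, swapped-in technique and not the paper's learned autoregressive gazing model; the patch size, threshold, and function name are illustrative assumptions.

```python
from typing import List, Tuple

Frame = List[List[int]]  # grayscale frame as rows of pixel intensities

def changed_patches(prev: Frame, curr: Frame, patch: int = 2,
                    threshold: int = 10) -> List[Tuple[int, int]]:
    """Return (row, col) origins of patches whose mean absolute change exceeds threshold."""
    keep = []
    height, width = len(curr), len(curr[0])
    for r in range(0, height, patch):
        for c in range(0, width, patch):
            diffs = [
                abs(curr[i][j] - prev[i][j])
                for i in range(r, min(r + patch, height))
                for j in range(c, min(c + patch, width))
            ]
            if sum(diffs) / len(diffs) > threshold:
                keep.append((r, c))
    return keep

# 4x4 frames where only the bottom-right 2x2 patch changes.
prev_frame = [[0] * 4 for _ in range(4)]
curr_frame = [row[:] for row in prev_frame]
curr_frame[2][2] = curr_frame[2][3] = curr_frame[3][2] = curr_frame[3][3] = 200

kept = changed_patches(prev_frame, curr_frame)
print(f"kept {len(kept)} of 4 patches: {kept}")  # kept 1 of 4 patches: [(2, 2)]
```

Only the kept patches would be passed on to the main model; the static ones are dropped before any expensive processing happens.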

🗞️ Andrej Karpathy just open-sourced a tiny tool called “autoresearch” that lets AI automatically improve its own training code.

Andrej Karpathy just set the web on fire with autoresearch. The GitHub repo got 33.8K+ stars. Here, the human iterates on the prompt (.md) and the AI agent iterates on the training code (.py).

You just hand it a high-level target like boosting model performance or cutting down what it costs to get a new customer. From there, it kicks off a cycle where it sketches out an experiment, tweaks the code, and runs a quick GPU test. It checks the numbers, keeps whatever worked best, and goes again. This happens on loop while you are out cold, so you wake up to real, data-backed upgrades. It is basically a digital intern that churns through hundreds of trials and only hands you the victories.

You (the human) only write plain instructions in a Markdown file — things like “try bigger models” or “test new optimizers.” The AI agent does everything else: it edits the actual training code, runs training for exactly five minutes on one GPU, checks the score (validation loss), and keeps the better version or throws the bad one away.

It repeats this loop all night in its own git branch, running about 100 experiments while you sleep. Every run gets a 5-minute time budget, leveling the field for architectural changes.

The system evaluates success purely on which code achieves the lowest validation loss. At 5 minutes per run, this setup executes 12 experiments per hour, accumulating roughly 100 complete runs overnight on 1 GPU.
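Stripped down, this loop is greedy keep-the-best search under a fixed time budget. A minimal simulation of that idea follows; `propose_change` and `train_and_eval` are hypothetical stand-ins (a random tweak and a toy loss function) for the agent's code edits and the real 5-minute training run, so this is a sketch of the pattern and not the autoresearch code itself.

```python
import random

RUN_MINUTES = 5                       # fixed budget per experiment, as described above
RUNS_PER_HOUR = 60 // RUN_MINUTES     # 12 experiments per hour

def train_and_eval(config: dict) -> float:
    """Toy stand-in for a training run: returns a fake validation loss."""
    return (config["lr"] - 0.03) ** 2 + 1.0   # pretend the best lr is 0.03

def propose_change(config: dict, rng: random.Random) -> dict:
    """Toy stand-in for the agent editing the training code."""
    return {"lr": max(1e-4, config["lr"] + rng.uniform(-0.01, 0.01))}

def overnight(hours: int, seed: int = 0) -> tuple:
    """Run hours * RUNS_PER_HOUR experiments, keeping only improvements."""
    rng = random.Random(seed)
    best = {"lr": 0.1}
    best_loss = train_and_eval(best)
    for _ in range(hours * RUNS_PER_HOUR):
        candidate = propose_change(best, rng)
        loss = train_and_eval(candidate)
        if loss < best_loss:          # keep the better version, discard the rest
            best, best_loss = candidate, loss
    return best, best_loss

best, loss = overnight(hours=8)       # 8 h * 12 runs/h = 96 runs, roughly 100
print(best, round(loss, 4))
```

Because every candidate gets the same budget, the comparison between runs stays apples-to-apples, which is the point of the fixed timer.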

This is a huge deal because the fixed 5-minute timer makes every idea fair, no matter how wild. Suddenly one person with one GPU can run a full AI research lab overnight. You no longer code experiments — you just “program the programmer” with better prompts. Prompt quality is now the only thing that matters. AI research just became 100× faster and open to anyone.

That’s a wrap for today, see you all tomorrow.