A third era is now emerging, driven by AI agents capable of tackling large tasks independently over extended periods.

Claude’s 81K star repo & voice mode, GPT-5.4 leaks, and OpenAI’s War Dept tie-up. Plus: AgentConductor’s code gen, Qwen 3.5 on iPhone, and Alibaba leadership exits.

Read time: 9 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (03-March-2026):

🗞️ Brilliant post by CEO of Cursor - The third era of AI Agents

🗞️ Anthropic has quite a large open-source repository for Claude Skills with 81.2K+ GitHub stars 🌟

🗞️ GPT-5.4: everything leaked so far.

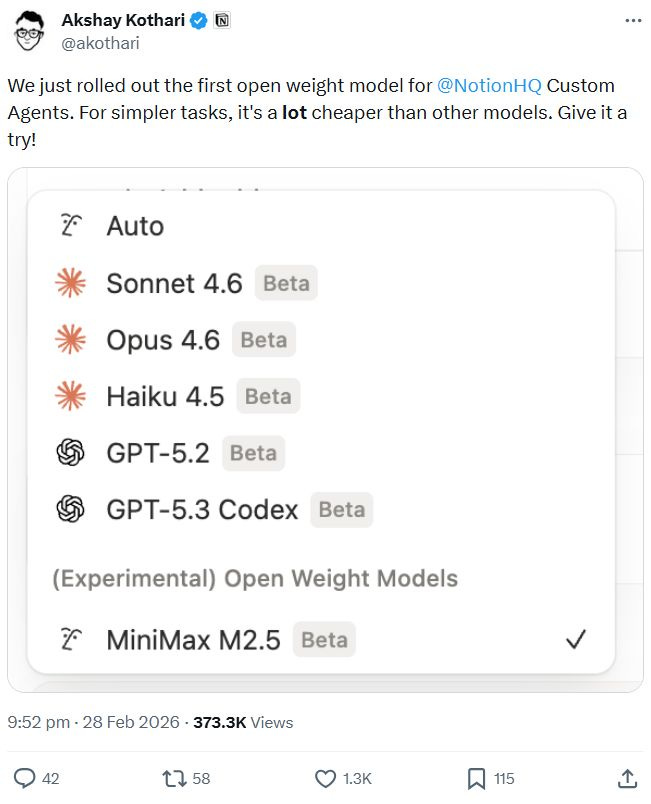

🗞️ MiniMax M2.5 becomes the very first open-weight model within Notion Custom Agents

🗞️ AgentConductor: Topology Evolution for Multi-Agent Competition-Level Code Generation

🗞️ Sam Altman just shared an internal update regarding OpenAI’s evolving partnership with the Department of War.

🗞️ Claude Code rolls out a voice mode capability.

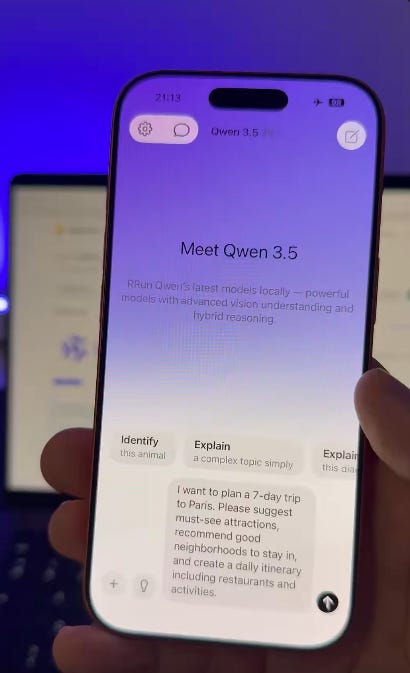

🗞️ A Twitter post goes viral showing Qwen 3.5 2B (6-bit) running on iPhone 17 Pro

🗞️ Alibaba Qwen’s Tech Lead Junyang Lin, 2 Other Researchers Step Down

🗞️ Brilliant post by CEO of Cursor - The third era of AI Agents

Your next software engineering teammate isn’t human.

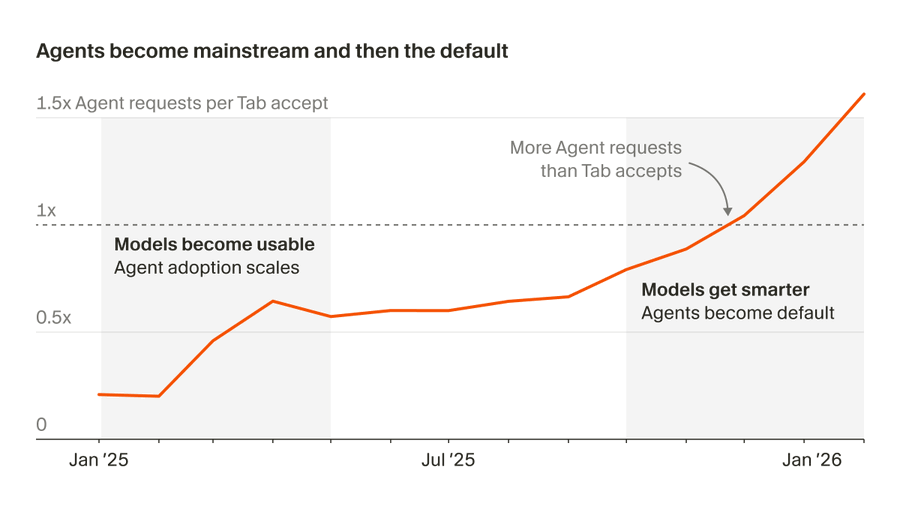

The first era of AI software development relied on Tab autocomplete to automate repetitive, single-keystroke coding tasks.

The second era introduced synchronous agents guided by developers through prompt-and-response loops, leading to agent users outnumbering Tab users two-to-one.

A third era is now emerging, driven by autonomous cloud AI agents capable of tackling large tasks independently over extended periods.

These cloud agents deliver easily reviewable artifacts like live previews, videos, and logs instead of traditional code diffs. The developer’s role is transitioning from writing code to defining problems, setting review criteria, and managing multiple simultaneous agents.

Internal metrics at Cursor show that autonomous cloud agents already generate 35% of their merged pull requests. Early adopters of this third-era workflow write almost no code, spending their time entirely on problem breakdown and artifact review. Scaling this approach requires resolving environmental issues, like flaky tests, that can interrupt autonomous agent runs at an industrial level.

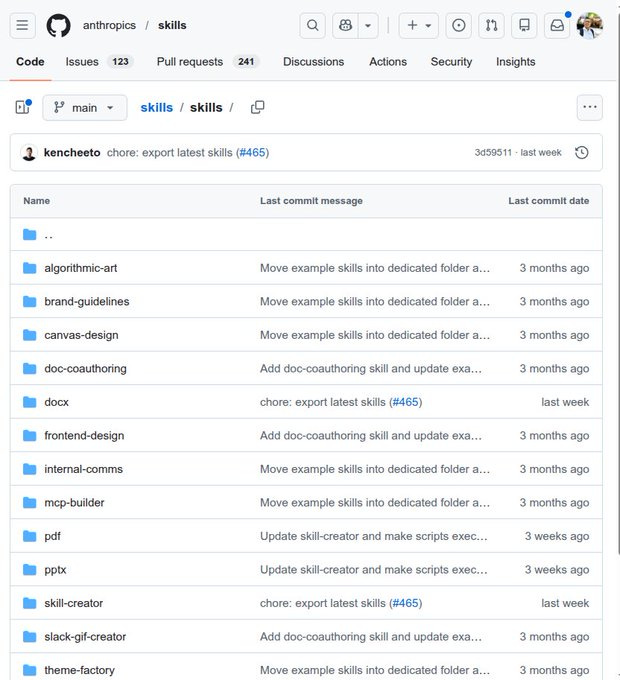

🗞️ Anthropic has quite a large open-source repository for Claude Skills with 81.2K+ GitHub stars 🌟

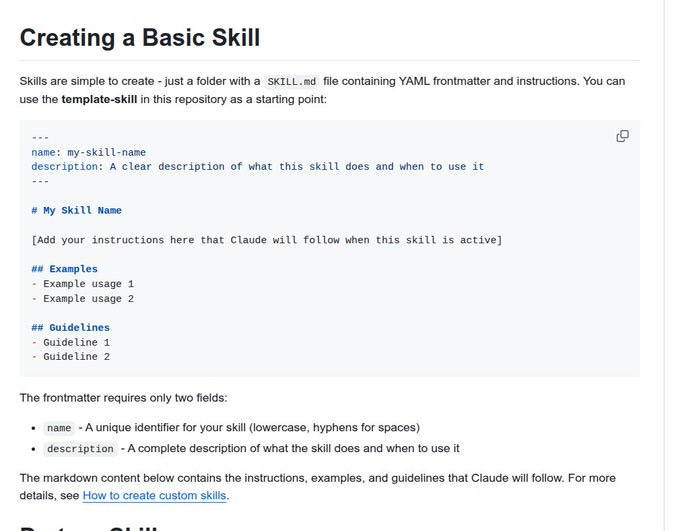

Instead of typing out long prompts every time you want to format a document, the system learns your workflow once and executes it automatically. There is also a specialized skill designed specifically to help you build brand new skills from scratch.

The architecture is highly efficient because each skill consumes only about 100 tokens for its basic metadata. The full instructions are loaded into the active context only when a specific task actually requires them.

Your main context window stays entirely clear of unnecessary instructions until the exact moment they are needed. Developers install these tools using a single terminal command so they work seamlessly across the web interface and the API.
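To make the load-on-demand idea concrete, here is a minimal Python sketch. It is not Anthropic's implementation: the `Skill` and `SkillRegistry` classes, the `doc-format` skill, and the loader function are all hypothetical names invented for illustration. The only thing it tries to show is the pattern described above, where short metadata is always visible and the full instructions are fetched the first time a task needs them.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Skill:
    """Hypothetical skill record: cheap metadata plus a loader for the full body."""
    name: str
    description: str                    # short metadata, always kept in context
    load_body: Callable[[], str]        # fetches the full instructions on demand
    _body: Optional[str] = field(default=None, repr=False)

    def body(self) -> str:
        # Load the full instructions on first use, then reuse the cached copy.
        if self._body is None:
            self._body = self.load_body()
        return self._body


class SkillRegistry:
    """Hypothetical registry that exposes metadata eagerly and bodies lazily."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def metadata_context(self) -> List[str]:
        # Only this short listing occupies the context window by default.
        return [f"{s.name}: {s.description}" for s in self._skills.values()]

    def activate(self, name: str) -> str:
        # Called only when a task actually requires the skill.
        return self._skills[name].body()


def load_doc_format_body() -> str:
    # Stand-in for reading a long instruction file from disk.
    return "Full document-formatting instructions would go here."


registry = SkillRegistry()
registry.register(
    Skill("doc-format", "Formats documents to house style", load_doc_format_body)
)
print(registry.metadata_context())   # cheap: metadata only
print(registry.activate("doc-format"))  # full body loaded at this point
```

The design choice worth noticing is the cache inside `body()`: the expensive load happens once per skill, and skills that are never activated cost nothing beyond their one-line description.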

You build a specialized capability once and it becomes available across your entire software stack immediately. This shift toward dynamic memory loading provides exactly what the industry needs to move past basic chatbots into reliable software systems. It directly addresses the scaling bottlenecks of context window limits while standardizing how enterprises deploy AI across different departments.

New “Skills” are simple to create

🗞️ GPT-5.4: everything leaked so far.

The leaks so far point to a massive 2M-token context window and persistent memory features.

They also point to full-resolution image processing, which means the model can directly process highly detailed files in PNG, JPEG, and WebP formats to prevent any loss of crucial visual data. Preserving the original image bytes helps the system properly read complex graphics like detailed architectural drawings or high-density screenshots without missing small text.

Finally, there is a new priority speed tier for faster responses.

OpenAI has accidentally revealed GPT-5.4 - twice - through pull requests in its public Codex GitHub repository. Both references were quickly scrubbed via force pushes and edits, but not before screenshots circulated and the community noticed.

Prediction markets on Manifold give GPT-5.4 a 55% chance of shipping before April 2026 and 74% before June. The competitive pressure is obvious. Claude Opus 4.6 launched with agent teams and a 1M context window. Anthropic’s Claude Code dominates the coding market with 54% share. DeepSeek V4 is training on Huawei hardware outside the NVIDIA ecosystem entirely. OpenAI cannot afford to slow down.

🗞️ MiniMax M2.5 becomes the very first open-weight model within Notion Custom Agents

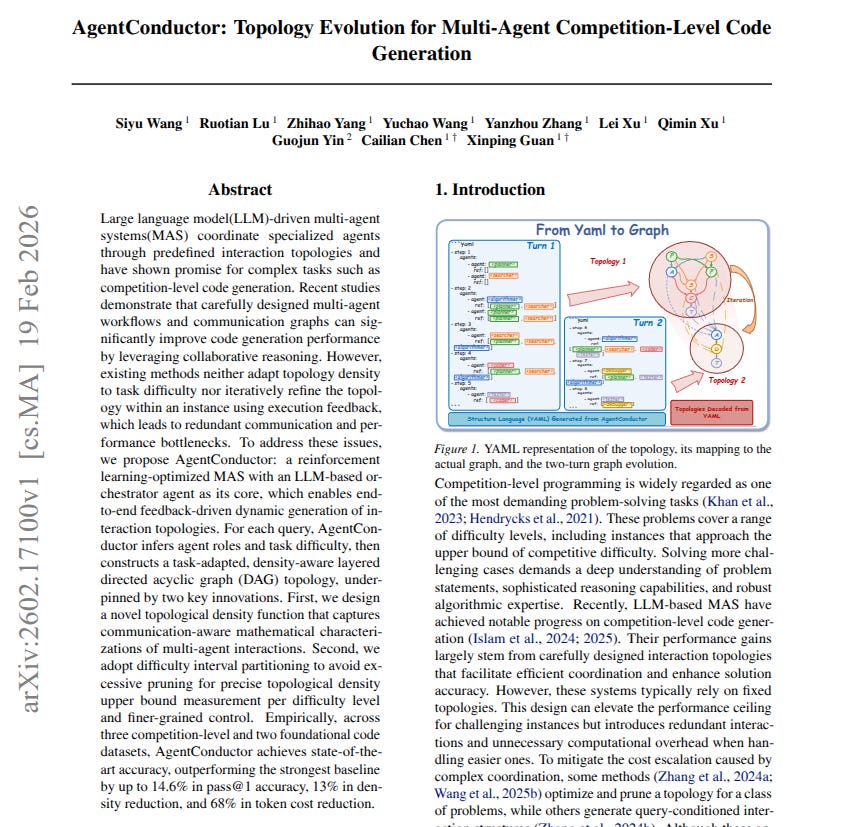

🗞️ New paper from top Chinese labs brings AgentConductor, a new framework that dynamically adjusts multi-agent connections to solve complex programming challenges while using fewer tokens.

AgentConductor: Topology Evolution for Multi-Agent Competition-Level Code Generation

The big deal here is the shift from rigid workflows to fluid teamwork. Normal multi-agent systems use a fixed, hardcoded workflow for every single problem. If you have a team of 5 specialized AI agents, all five talk to each other in the exact same pattern whether they are printing a basic text line or solving a massive competitive programming challenge.

This wastes huge amounts of computing power on simple tasks and fails on complex tasks that actually require a different structure. AgentConductor fixes this by acting like a smart human project manager. It looks at the problem, judges the difficulty, and creates a custom communication graph just for that specific task.

Easy tasks get a small, cheap team. Hard tasks get a large, highly connected team. Even better, if the generated code fails to run, the manager reads the error message and rewrites the team workflow on the fly to try a new strategy. The payoff is drastically better coding accuracy with token costs cut by 68%, which suggests that AI teams need flexible, task-specific management rather than rigid, one-size-fits-all pipelines.
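A toy Python sketch of that idea follows. It is not the paper's algorithm: the agent roles, the difficulty thresholds, and the rule of simply escalating difficulty after a failure are all assumptions made up for illustration. A real system would presumably condition on the error text, whereas this sketch ignores it and just retries with a bigger, denser team.

```python
from itertools import combinations
from typing import Callable, Dict, List, Tuple

# Hypothetical agent roles, ordered from "always needed" to "only for hard tasks".
AGENT_POOL = ["coder", "tester", "debugger", "planner", "reviewer"]

Graph = Dict[str, List[str]]  # agent -> agents it sends messages to


def build_topology(difficulty: float) -> Graph:
    """Map a difficulty score in [0, 1] to a communication graph.

    Easy tasks get few agents connected in a chain; hard tasks get
    more agents with every pair connected.
    """
    difficulty = min(max(difficulty, 0.0), 1.0)
    n_agents = 1 + round(difficulty * (len(AGENT_POOL) - 1))
    agents = AGENT_POOL[:n_agents]
    graph: Graph = {a: [] for a in agents}
    if difficulty < 0.5:
        for src, dst in zip(agents, agents[1:]):     # sparse chain
            graph[src].append(dst)
    else:
        for src, dst in combinations(agents, 2):     # densely connected
            graph[src].append(dst)
    return graph


def solve(
    task: str,
    difficulty: float,
    run_team: Callable[[str, Graph], Tuple[bool, str]],
    max_rounds: int = 3,
) -> Tuple[bool, Graph]:
    """Run the team; on failure, rebuild the topology and try again."""
    graph: Graph = {}
    for _ in range(max_rounds):
        graph = build_topology(difficulty)
        ok, _error = run_team(task, graph)   # error text is ignored in this sketch
        if ok:
            return True, graph
        # Assumed escalation rule: treat a failed run as evidence the task is harder.
        difficulty = min(1.0, difficulty + 0.3)
    return False, graph
```

Here `run_team` is a placeholder for whatever actually executes the agents and runs the generated code; it returns a success flag and an error message.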

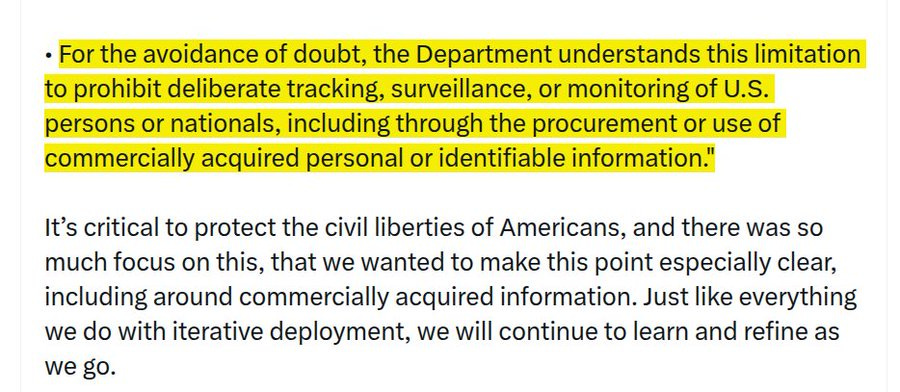

🗞️ Sam Altman just shared an internal update regarding OpenAI’s evolving partnership with the Department of War.

The update explicitly bans the intentional use of AI for tracking or monitoring of U.S. persons or nationals.

One of the most important details is the ban on using “commercially acquired” personal data.

This essentially closes a common loophole that allows government agencies to buy data to bypass warrant requirements. For context, under the 4th Amendment, government agencies usually need a judge to sign a warrant before they can track your private digital life.

But over the last decade, a massive, legally gray loophole has opened up in the data broker industry. Thousands of private apps and companies constantly harvest your location, habits, and personal information to sell on the open market.

So here OpenAI is saying it will not let the government use its AI to analyze this purchased data. Even if the government legally bought your data from a broker, it cannot feed that data into OpenAI’s models to conduct domestic surveillance.

Also, intelligence agencies like the NSA are currently barred from using OpenAI’s services. Any future access for those agencies would require an entirely new contract modification and a separate debate.

🗞️ Claude Code rolls out a voice mode capability.

Anthropic is rolling out Voice Mode for its Claude Code assistant to help developers code without using their hands.

Thariq Shihipar from the engineering team confirmed that 5% of users have access now, with a larger release coming soon. Typing /voice lets you speak commands directly, such as asking the system to clean up your authentication middleware.

It is currently unknown if there are specific limits on usage or if they partnered with companies like ElevenLabs to build it. This follows a similar update for their standard chatbot last May.

Claude Code is holding its own against competitors like Microsoft’s GitHub Copilot and Cursor, currently hitting $2.5 billion in run-rate revenue. Their user base has doubled since January, and the mobile app’s popularity grew after Anthropic publicly rejected using its technology for autonomous weapons or government surveillance.

🗞️ A Twitter post goes viral showing Qwen 3.5 2B (6-bit) running on iPhone 17 Pro

Here is Qwen 3.5 2B (6-bit) running on iPhone 17 Pro, MLX-optimized. It outperforms models 4X its size with strong visual intelligence.

Powerful AI, now truly mobile. Opportunity for so many new products.
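A quick back-of-the-envelope calculation shows why 6-bit quantization matters for a phone. The sketch below assumes "2B" means exactly 2 billion parameters and counts the weights only; it ignores quantization scales, activations, and the KV cache, so real memory use will be higher.

```python
def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


six_bit = weight_memory_gb(2e9, 6)     # 1.5 GB at 6 bits per weight
sixteen_bit = weight_memory_gb(2e9, 16)  # 4.0 GB at 16 bits per weight
print(f"6-bit: {six_bit} GB, 16-bit: {sixteen_bit} GB")
```

Under these assumptions, the 6-bit weights take well under half the space of a 16-bit copy of the same model.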

🗞️ Alibaba Qwen’s Tech Lead Junyang Lin, 2 Other Researchers Step Down

Their exit marks a major shift for one of the world’s most popular open-source AI families that has reached over 600M downloads.

Lin was the person who mostly talked to the public and shared their code, making him the human face of a project that rivals Meta’s Llama.

During his time at Alibaba, he helped develop multimodal models, which are AI systems that can understand pictures and sounds just as easily as they read text.

He also pushed the team to use mixture of experts technology, where a massive AI is broken into smaller groups of specialists to save on computing power.

This method ensures that the AI only uses the specific brain parts it needs for a task, which helps it run faster on less expensive hardware.
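For readers who want to see the mechanism, here is a minimal Python sketch of top-k expert routing. It is a generic illustration, not Qwen's code: real models compute the router scores with a learned gating network for each token, while here the scores are simply passed in, and the "experts" are plain functions standing in for neural network blocks.

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[float], float]


def softmax(scores: Sequence[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    router_scores: Sequence[float],
    experts: Sequence[Expert],
    top_k: int = 2,
) -> Tuple[float, List[int]]:
    """Run only the top_k highest-scoring experts and mix their outputs."""
    # Indices of the top_k experts by router score (ties keep original order).
    top = sorted(
        range(len(experts)), key=lambda i: router_scores[i], reverse=True
    )[:top_k]
    # Normalize the selected scores so the mixing weights sum to 1.
    weights = softmax([router_scores[i] for i in top])
    # Only the selected experts are evaluated; the rest cost nothing.
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top
```

With four experts and `top_k=2`, only two expert functions run for a given input, which is where the compute savings come from.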

Lin was open about the fact that US labs have 10 to 100 times more computing power than labs in China. Because of this chip shortage, his team had to focus on clever software engineering and math to make their models perform at a world-class level.

The Qwen family has grown to include models for coding, math, and logic, with over 170,000 different versions created by the developer community.

That’s a wrap for today, see you all tomorrow.