🗞️ Alibaba released their open-source Qwen3.5-9B model, and it holds its own against OpenAI's 120B param gpt-oss system.

Anthropic sues gov, DeepSeek V4 nears, and LLM privacy decoded. Plus: Altman on OpenAI’s Pentagon deal, a massive Video Reasoning suite, and SWE-1.6’s coding debut.

Read time: 10 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (03-March-2026):

🗞️ Alibaba released their open-source Qwen3.5-9B model, and it holds its own against OpenAI’s 120B param gpt-oss system. Plus, you can run this thing on a standard laptop.

🗞️ Anthropic is now taking the government to court to fight the “supply chain risk” designation.

🗞️ FT: DeepSeek is preparing to launch its latest AI model V4 next week

🗞️ User Privacy and LLMs: An Analysis of Frontier Developers’ Privacy Policies

🗞️ Some key takeaways from Sam Altman’s Saturday night AMA on OpenAI’s Pentagon deal

🗞️ A Very Big Video Reasoning Suite

🗞️ Cognition is sharing an early look at their new AI coding model named SWE-1.6.

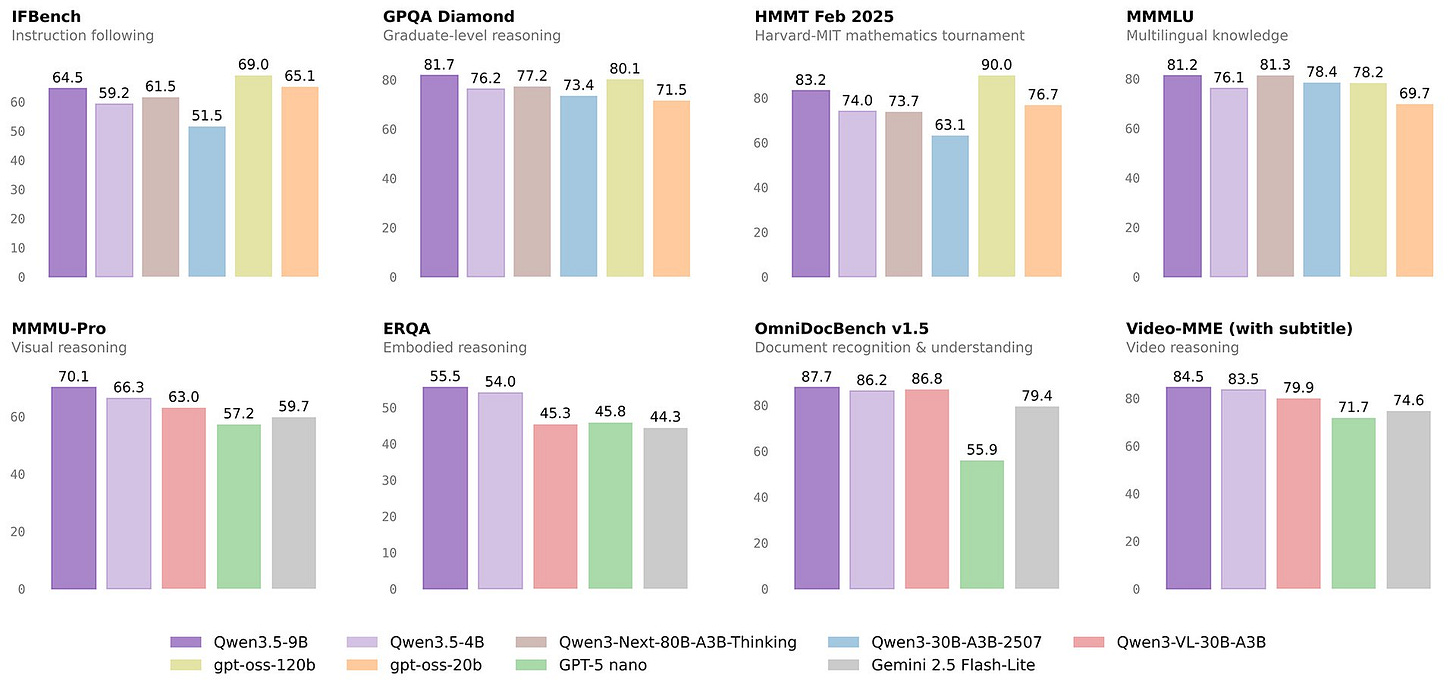

🗞️ Alibaba released their open-source Qwen3.5-9B model, and it holds its own against OpenAI’s 120B param gpt-oss system. Plus, you can run this thing on a standard laptop.

Despite political turmoil in the U.S. AI sector, AI advances in China are continuing apace. Alibaba has released its Qwen3.5 Small family of models:

Qwen3.5-0.8B & 2B: Two models, both optimized for “tiny” and “fast” performance, intended for prototyping and deployment on edge devices where battery life is paramount.

Qwen3.5-4B: A strong multimodal base for lightweight agents, natively supporting a 262,144 token context window.

Qwen3.5-9B: A compact reasoning model that outperforms OpenAI’s open-source gpt-oss-120B, a U.S. rival roughly 13x larger, on key third-party benchmarks including multilingual knowledge and graduate-level reasoning.

The 9B model is particularly impressive because it performs just as well as open source models that are 120B in size.

That makes it 13 times smaller than the giants it is competing against, which means you get high-end performance without needing a massive data center.

It even manages to beat heavy hitters like Gemini 3 Flash and Claude 4.5 Sonnet on several specific tests.

For developers, the 4B version acts as a solid base for building lightweight agents that can handle tasks automatically.

The 0.8B and 2B versions are built specifically for edge devices, which are gadgets like smart watches or sensors that have very little memory.
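To make the "runs on a laptop" point concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need. The parameter counts are simply the nominal sizes from the model names, and the 16-bit and 4-bit widths are illustrative assumptions, not settings confirmed by Alibaba or OpenAI. It ignores the KV cache, activations, and runtime overhead, so treat the numbers as a lower bound.

```python
# Weight-only memory estimate. Assumptions: nominal parameter counts taken
# from the model names, and illustrative 16-bit / 4-bit storage widths.
PARAMS_BILLIONS = {
    "Qwen3.5-0.8B": 0.8,
    "Qwen3.5-2B": 2.0,
    "Qwen3.5-4B": 4.0,
    "Qwen3.5-9B": 9.0,
    "gpt-oss-120B": 120.0,
}


def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    for name, size in PARAMS_BILLIONS.items():
        fp16 = weight_memory_gb(size, 16)
        int4 = weight_memory_gb(size, 4)
        print(f"{name:>13}: {fp16:6.1f} GB at 16-bit, {int4:5.1f} GB at 4-bit")
```

Under these assumptions the 9B model's weights come to a few gigabytes once quantized, which is why a laptop is plausible, while a 120B model's weights are more than an order of magnitude larger at the same precision.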

The Architecture

Alibaba has shifted away from standard Transformer designs to a new Efficient Hybrid Architecture that powers the Qwen 3.5 Small series.

This new design combines Gated Delta Networks, which use a form of linear attention, with a sparse Mixture-of-Experts setup.

By using Gated Delta Networks, these models effectively break through the memory wall that usually slows down small AI models.

This architectural change allows the models to reach much higher speeds and significantly lower delay times when generating answers.
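For readers who want intuition for why a Gated Delta Network eases memory pressure, here is a minimal pure-Python sketch of a gated delta rule update, following the general form described in the published Gated DeltaNet work as I understand it. It is not Alibaba's implementation: the function name, the scalar gates `alpha` and `beta`, and the toy dimensions are all assumptions for illustration, and real models use learned gates and heavily optimized parallel kernels.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def gated_delta_step(
    state: Matrix, q: Vector, k: Vector, v: Vector, alpha: float, beta: float
) -> Tuple[Matrix, Vector]:
    """One token of a gated delta rule on a (d_v x d_k) memory matrix.

    alpha in [0, 1] is the forget gate, beta in [0, 1] is the write strength.
    Both are hypothetical scalars here; a real model would compute them.
    """
    # 1. Gate: decay the whole memory by alpha.
    decayed = [[alpha * s for s in row] for row in state]
    # 2. Read what the decayed memory currently stores under key k.
    predicted = [sum(s * kj for s, kj in zip(row, k)) for row in decayed]
    # 3. Delta rule: write only the correction (v - predicted) along k.
    new_state = [
        [s + beta * (vi - pi) * kj for s, kj in zip(row, k)]
        for row, vi, pi in zip(decayed, v, predicted)
    ]
    # 4. Output for this token: query the updated memory.
    output = [sum(s * qj for s, qj in zip(row, q)) for row in new_state]
    return new_state, output


if __name__ == "__main__":
    d_k, d_v = 2, 2
    state: Matrix = [[0.0] * d_k for _ in range(d_v)]
    key = [1.0, 0.0]
    # Write a value, then write a different value under the same key.
    for value in ([2.0, 3.0], [5.0, 7.0]):
        state, out = gated_delta_step(state, key, key, value, alpha=1.0, beta=1.0)
        print(out)
    # The memory stays d_v x d_k no matter how many tokens are processed.
    print(len(state), "x", len(state[0]))
```

The point of the sketch is the fixed-size state: standard attention keeps a cache that grows with every token, while this kind of recurrence carries one small matrix forward and overwrites stale entries instead of accumulating them.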

Unlike older models that simply attached a vision tool to a text model, Qwen 3.5 uses early fusion to be natively multimodal.

Because they were trained on multimodal tokens from the very beginning, these models can understand images and text as if they were the same thing.

This allows the 4B and 9B versions to perform complex tasks like reading tiny user interface elements or counting objects in a video.

These models now exhibit a level of visual intelligence that previously required systems 10 times their actual size.

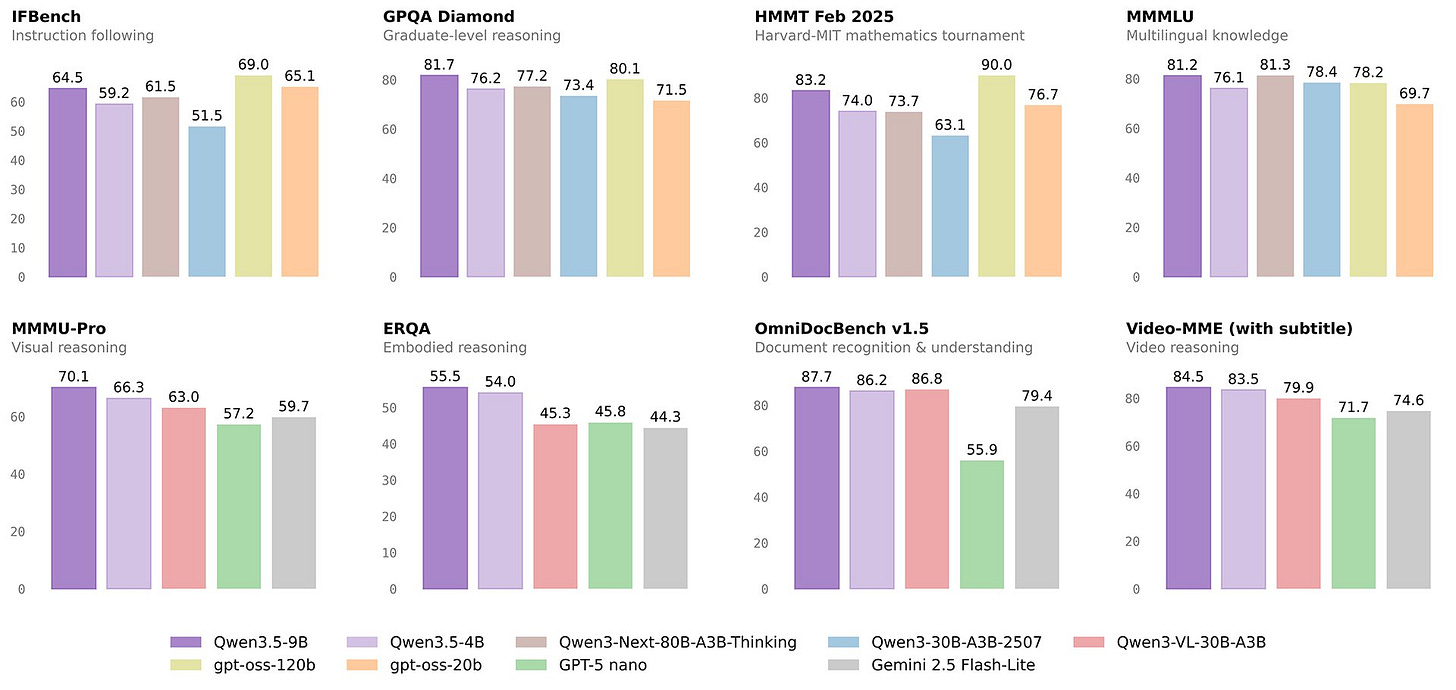

🗞️ Anthropic is now taking the government to court to fight the “supply chain risk” designation.

For context, Anthropic wanted strict contractual limits to prevent its technology from being used for mass domestic surveillance or powering fully autonomous weapons. The Pentagon refused those terms and demanded the ability to use the models for any lawful purpose without specific restrictions.

Because Anthropic would not yield, the Secretary of Defense issued a mandate attempting to block any existing military contractor from doing business with Anthropic. A supply chain risk designation is a legal tool normally used to block vendors that pose deep security threats from accessing sensitive military data.

🗞️ FT: DeepSeek is preparing to launch its latest AI model V4 next week

With V4, DeepSeek is trying to prove it can run high-level AI without the top-tier Nvidia chips that the US has restricted. This marks the company’s first major update since the R1 model was released in January 2025.

🗞️ Stanford researchers checked 6 major AI companies and found they all use your chats to train models.

“User Privacy and LLMs: An Analysis of Frontier Developers’ Privacy Policies”

Users unknowingly hand over highly sensitive medical or personal details that become permanent parts of future AI brains. The problem with standard privacy rules is that they scatter important details across multiple files so people cannot find them.

The researchers at Stanford HAI examined 28 privacy documents across these six companies: not just the main privacy policy, but every linked subpolicy, FAQ, and guidance page accessible from the chat interfaces. They evaluated all of them against the California Consumer Privacy Act, the most comprehensive privacy law in the United States.

The results are worse than you think. Every single company collects your chat data and feeds it back into model training by default. Some retain your conversations indefinitely. There is no expiration. No auto-delete. Your data just sits there, forever, feeding future versions of the model.

Some of these companies let human employees read your chat transcripts as part of the training process. Not anonymized summaries. Your actual conversations. But here’s where it gets genuinely dangerous.

In many cases, these chats get merged with everything else those companies already know about you. Your search history. Your purchase data. Your social media activity. Your uploaded files.

The researchers describe a realistic scenario that should make you pause: You ask an AI chatbot for heart-healthy dinner recipes. The model infers you may have a cardiovascular condition. That classification flows through the company’s broader ecosystem. You start seeing ads for medications. The information reaches insurance databases. The effects compound over time.

You shared a dinner question. The system built a health profile.

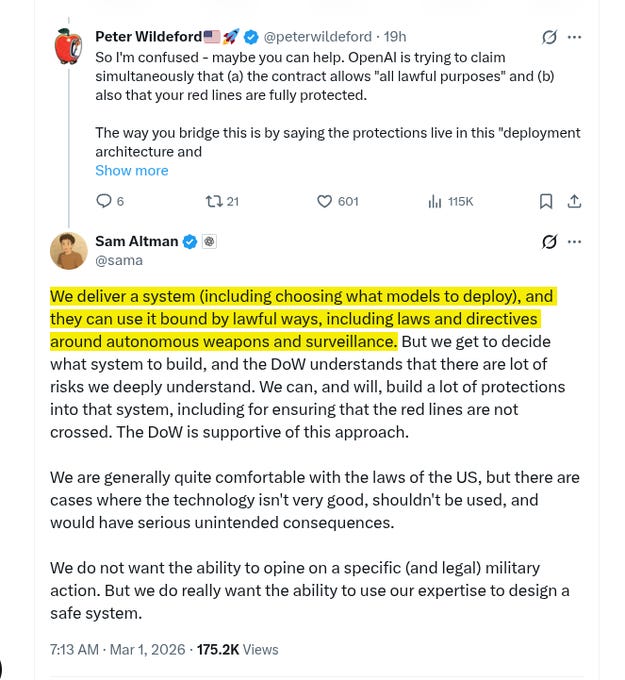

🗞️ Some key takeaways from Sam Altman’s Saturday night AMA on OpenAI’s Pentagon deal

OpenAI rushed the classified agreement to ease tensions between the government and the AI industry following Anthropic’s refusal of a Department of War (DoW) ultimatum. Altman acknowledged that the optics of rushing might look bad, but said the primary goal was to stabilize the situation.

A central theme of the AMA was the belief that democratically elected governments, rather than unelected private tech executives, should hold the power to make critical, ethical decisions regarding national defense (such as responding to nuclear threats).

Contractual Terms and “Redlines”: OpenAI established 3 flexible “redlines” for the technology’s use, which can evolve as the tech advances. OpenAI felt comfortable with the contract language and negotiated to ensure similar terms would be offered to other AI labs.

He speculated that Anthropic may have walked away because they demanded more operational control.

Instead of demanding full operational control, OpenAI established three flexible “redlines” for how their tech could be used, which can evolve as new risks emerge. A core philosophical difference highlighted by Altman is the belief that unelected tech executives should not have more power than their democratically elected government, meaning private companies should not be the ones deciding what is ethical in critical national security situations, such as responding to nuclear threats.

Crucial AI Defense Applications: Altman identified 2 major areas where AI can immediately counter significant national security threats: defending against major cyber attacks (such as threats to the electrical grid) and improving biosecurity (detecting and responding to novel pandemics).

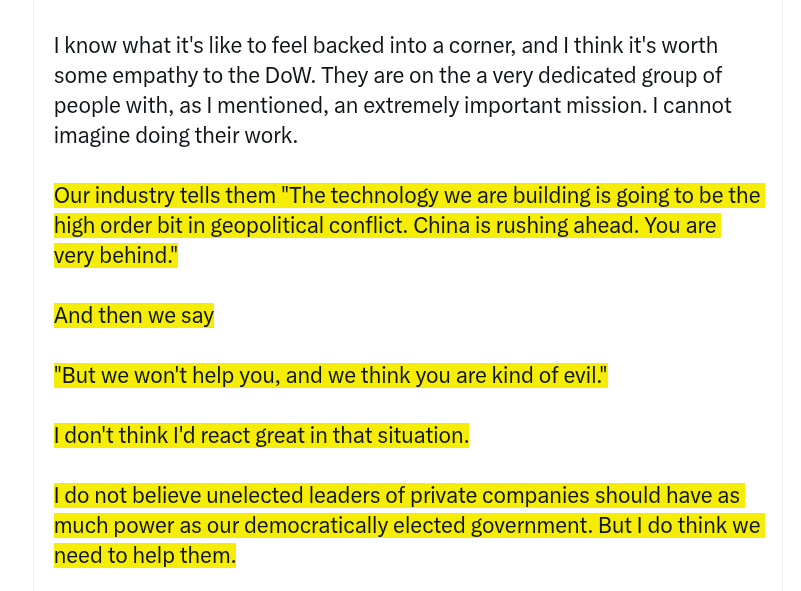

Sam Altman also pointed out a massive contradiction in how the AI industry treats the US government.

On one hand, tech leaders are constantly sounding the alarm to the Department of War. The industry tells the government that artificial intelligence is going to be the single most important factor in future global conflicts, warning that countries like China are building AI systems rapidly and the US is falling severely behind.

On the other hand, when the government actually asks these tech companies for help to catch up and defend the country, companies like Anthropic refuse. They essentially tell the military, “We will not let you use our technology because we think your goals are unethical.”

Altman is arguing that you cannot have it both ways. It is extremely frustrating and dangerous to tell the government they are losing a critical AI arms race against foreign adversaries, but then turn around and refuse to provide them with the very tools they need to protect the country.

That is why OpenAI felt compelled to step in and work with the government. They believe that if you warn the military about a major global threat, you have a responsibility to help them defend against it, rather than just calling them evil and walking away.

Sam Altman also clarified how OpenAI can legally promise the Department of War (DoW) the ability to use the AI for “all lawful purposes” while still strictly enforcing their own safety “red lines”.

Someone asked whether OpenAI would be breaching its contract if the military attempted a lawful action and OpenAI’s safety systems blocked it. Altman explained that the solution lies in the engineering phase rather than daily oversight. Instead of reviewing and approving the military’s individual actions, OpenAI controls the fundamental capabilities of the system before it is deployed. They build safety protections directly into the architecture so the system physically cannot cross their red lines, an approach the DoW has agreed to.

Sam Altman mentioned previously that Anthropic likely wanted more “operational control.” This implies Anthropic wanted the power to govern or veto how the tool was used day-to-day.

OpenAI’s approach avoids this dynamic. Because they believe unelected tech leaders shouldn’t judge specific, legal military actions, they do not want to opine on individual DoW operations. Instead, they rely on their technical expertise to design a system that inherently refuses commands where the technology “isn’t very good” or could trigger “serious unintended consequences,” and then they let the government operate it within those hard-coded boundaries.

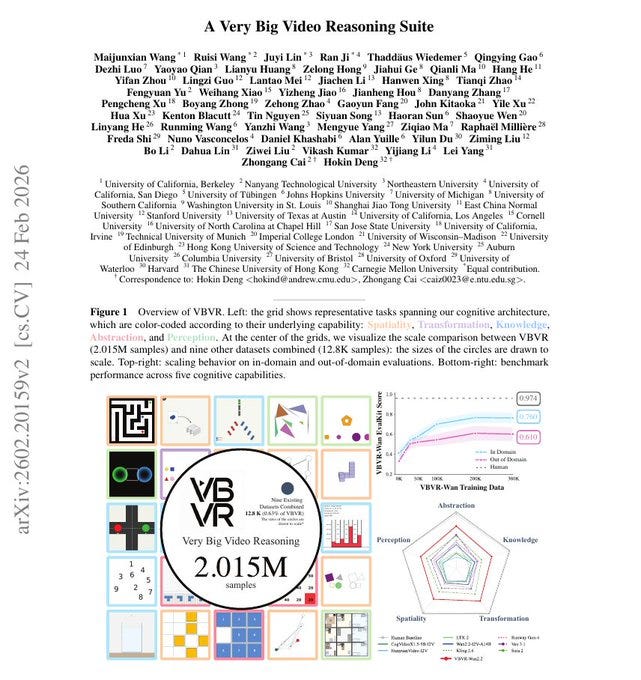

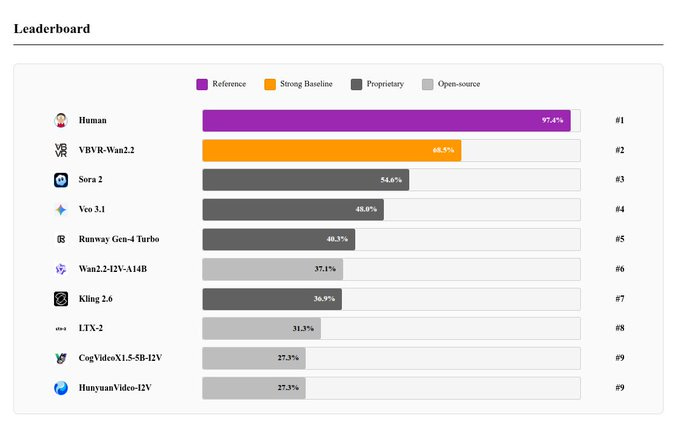

🗞️ 🤯 56 researchers from 32 universities across US, China, UK built an enormous video reasoning dataset to prove current AI models struggle with basic physical logic

“Very Big Video Reasoning Suite”

The problem is that the AI does not genuinely know how solid objects are supposed to behave. So Berkeley, Stanford, CMU, Harvard, Oxford, Columbia, NTU, Johns Hopkins, and 24 other institutions built this dataset of 2 million samples, which makes it 1,000 times larger than all existing collections combined.

Video generation systems usually focus on making things look pretty but they completely fail to understand spatial rules and causality. The team created a massive factory of visual tasks that tests how well models handle navigation, object manipulation, and logic.

Even the most advanced commercial systems only scored around 54% while human testers easily achieved over 97% accuracy. Training an open model on this specific data improved its reasoning skills but a massive gap still exists.

They believe “video reasoning is the next fundamental intelligence paradigm, after language reasoning, where spatiotemporal embodied world experiences could be more naturally captured.”

🗞️ Cognition is sharing an early look at their new AI coding model named SWE-1.6.

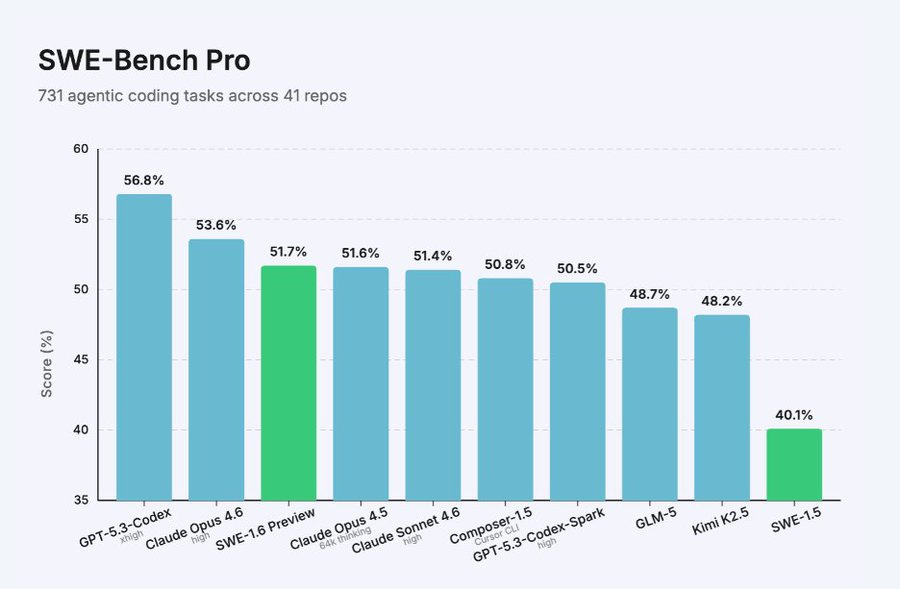

SWE-1.6 is an “agentic” model that acts like a software engineer: it can explore entire codebases, run terminal commands, and solve complex, multi-step bugs autonomously. It scores 51.7% on the SWE-Bench test, an 11% boost over Cognition’s previous model.

Despite its increased intelligence, it still runs at 950 tokens per second. This is roughly 13x faster than other leading models like Claude 4.5 Sonnet, allowing it to complete complex tasks in seconds rather than minutes.
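To see what "seconds rather than minutes" means in practice, here is a quick arithmetic sketch. The 950 tokens per second and the 13x figure come from the claims above; the 20,000-token task size is a purely hypothetical number picked for illustration, and the calculation ignores prompt processing, tool calls, and network latency.

```python
SWE_1_6_TOKENS_PER_SECOND = 950.0  # throughput figure quoted above
CLAIMED_SPEEDUP = 13.0             # "roughly 13x faster", quoted above
HYPOTHETICAL_TASK_TOKENS = 20_000  # assumption: illustrative output budget


def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to emit `tokens` at a steady decode rate."""
    return tokens / tokens_per_second


def implied_baseline_tps(fast_tps: float, speedup: float) -> float:
    """Throughput of the slower model implied by the claimed speedup."""
    return fast_tps / speedup


if __name__ == "__main__":
    slow_tps = implied_baseline_tps(SWE_1_6_TOKENS_PER_SECOND, CLAIMED_SPEEDUP)
    fast = generation_seconds(HYPOTHETICAL_TASK_TOKENS, SWE_1_6_TOKENS_PER_SECOND)
    slow = generation_seconds(HYPOTHETICAL_TASK_TOKENS, slow_tps)
    print(f"Implied baseline: {slow_tps:.0f} tokens/s")
    print(f"SWE-1.6: {fast:.0f} s, baseline: {slow / 60:.1f} min")
```

Under these assumptions the same task drops from several minutes of pure generation to well under a minute, which is the gap the claim is pointing at.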

Improvements to their training stack make it 6x faster to train than it was just three months prior. Users can test it now in the Windsurf app.

That’s a wrap for today, see you all tomorrow.
