🗞️ Anthropic launched Claude Code Security to scan your code repositories for bugs and suggest security patches.

From X-Humanoid’s robot platform to claims of massive AI bot attacks, 84% of people still haven't used AI, DeepMind’s new framework for tasking agents and the "Einstein test" for AGI.

Read time: 10 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (23-Feb-2026):

🗞️ Anthropic just launched Claude Code Security to scan your code repositories for bugs and suggest security patches.

🗞️ The Chinese robotics firm X-Humanoid launched Embodied TienKung 3.0, a general-purpose robot platform that developers can build on.

🗞️ A viral report finds 84% of people have never used AI, and just 0.3% of users pay for premium services.

🚨 Anthropic is accusing some Chinese AI labs of creating 24,000 fake accounts and running 16 million prompts to boost their own models.

🗞️ GitHub just launched a new cross-agent memory system for Copilot that allows different AI tools to share and learn from your specific coding patterns over time.

🗞️ A new paper from Google DeepMind lays out a big plan for how we should actually give tasks to AI.

🗞️ Demis Hassabis’s “Einstein test” for defining AGI

🗞️ Anthropic launched Claude Code Security to scan your code repositories for bugs and suggest security patches.

Anthropic released Claude Code Security to scan entire codebases and suggest patches for complex vulnerabilities. Standard tools check software against known patterns, so they miss deep logic flaws entirely.

This new system reads your whole repository to understand how components interact and how data moves. It double-checks its work through a multi-stage review to filter false alarms while assigning severity scores.

Using the new Claude Opus 4.6 model, this setup identified over 500 vulnerabilities in major open-source projects. Teams review flagged issues and suggested fixes before approving changes.

Moving security analysis to contextual reasoning is a solid step for defensive engineering. Catching logic flaws automatically forces attackers to work harder.

“Validated findings appear in the Claude Code Security dashboard, where teams can review them, inspect the suggested patches, and approve fixes. Because these issues often involve nuances that are difficult to assess from source code alone, Claude also provides a confidence rating for each finding.”

🗞️ The Chinese robotics firm X-Humanoid launched Embodied TienKung 3.0, a general-purpose robot platform that developers can build on.

X-Humanoid is also open sourcing a big set of building blocks, including the robot body, motion control framework, world model, embodied Vision Language Model, cross ontology VLA model, training toolchains, the RoboMIND dataset, and the ArtVIP simulation asset library.

It’s like a “base kit” that developers can build on. The kit includes the robot hardware (body and joints) and the main robotics software pieces (motion control, perception, and planning), so developers can focus on their specific app instead of building the robot from scratch.

Humanoid robotics is running into 2 big problems right now.

1) Hardware interfaces are too closed off, so robots can’t be adapted quickly when the operating environment changes.

2) The software side is messy, with scattered tools and incompatible protocols, which forces teams to repeat the same research and development work and slows real commercial rollout and innovation.

Embodied TienKung 3.0 aims to fix both by pushing openness and interoperability, so research institutes, universities, and companies can build, customize, and adapt solutions faster.

Embodied TienKung 3.0 is a full-size humanoid that pairs strong hardware, like high-torque joints and whole-body coordination, with on-robot AI. That lets it stay stable, move precisely, and handle hard motions like clearing 1m obstacles, while still hitting millimeter-level accuracy for factory-style tasks.

Wise KaiWu is the software brain that runs a continuous “see, decide, act” loop, so the robot can understand a spoken task, break it into steps, avoid obstacles in real time, and reduce the need for constant human control.

It runs on X-Humanoid’s own Huisi Kaiwu embodied intelligence platform.

Developers can take these open pieces and get to a working humanoid demo much faster and cheaper, instead of spending months rebuilding the same low-level robotics stack. Check out more on their site.

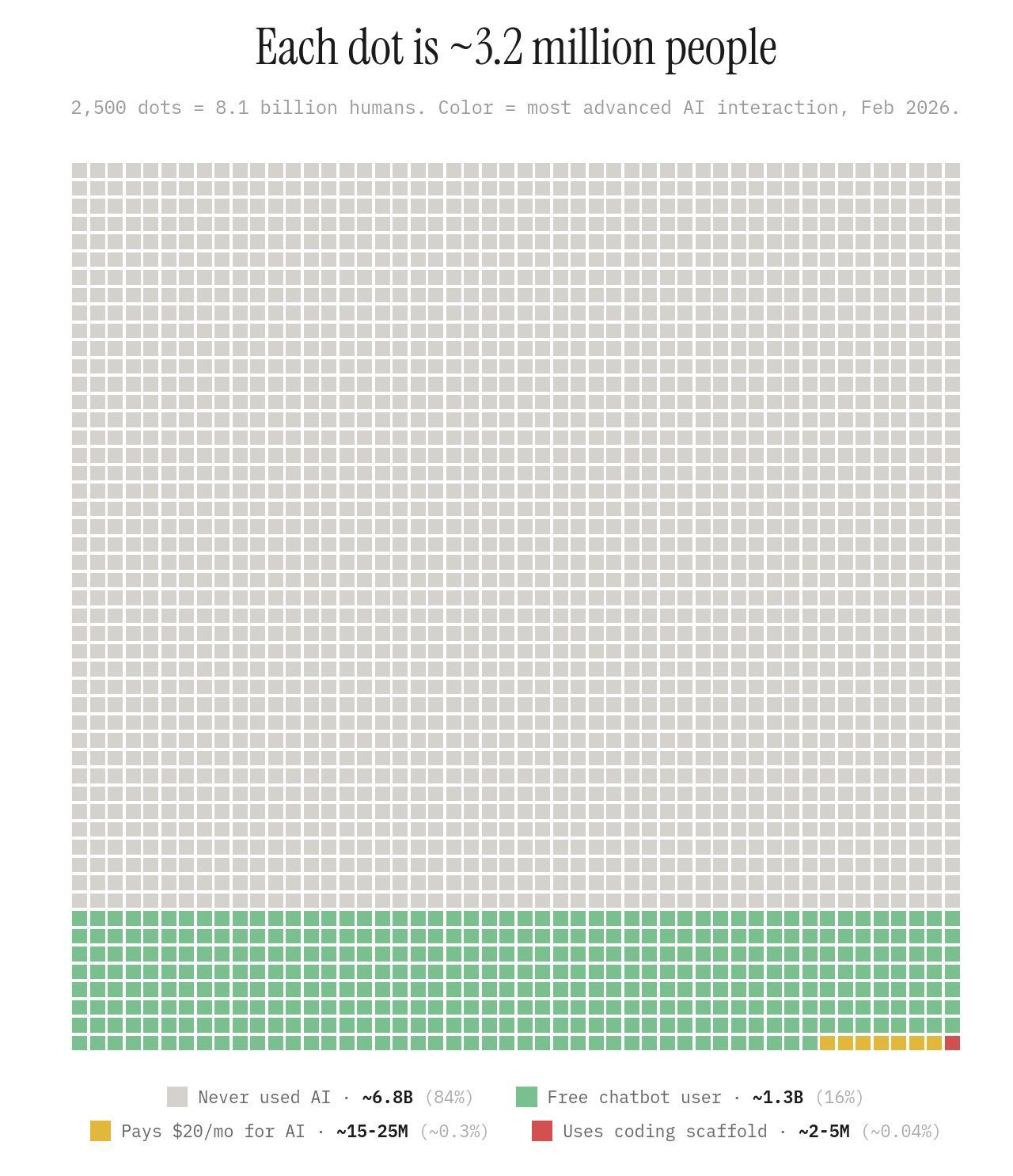

🗞️ A viral report finds 84% of people have never used AI, and just 0.3% of users pay for premium services.

Given the backdrop of this report, my take is that while everyone keeps talking about an AI bubble, we forget that only 0.3% of the global population actually pays for a premium subscription.

For the vast majority of the real world it has not started yet.

6.8B people have yet to interact with even 1 free chatbot.

The massive grey chunk is the 6.8B people who have zero experience with AI.

The green dots represent 1.3B people using free versions of tools.

Only a small group of 15-25 million people pay for subscriptions. We sit in that microscopic slice.
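To sanity-check how these headcounts line up with the 84% and 0.3% headline figures, here is a quick back-of-the-envelope sketch. It assumes the world population is simply the sum of the two big groups quoted above (6.8B + 1.3B), with paying subscribers counted inside the 1.3B; that total is my assumption, not a number from the report.

```python
# Headcounts quoted above (people, not percentages).
never_used = 6.8e9                      # no experience with AI
free_users = 1.3e9                      # using free versions of tools
paying_low, paying_high = 15e6, 25e6    # paying subscribers (quoted range)

# Assumption: world population = the two large groups added together.
world = never_used + free_users

share_never = never_used / world
share_paying_low = paying_low / world
share_paying_high = paying_high / world

print(f"never used AI: {share_never:.0%}")
print(f"paying: {share_paying_low:.2%} to {share_paying_high:.2%}")
```

Under that assumption the never-used share rounds to 84%, and the 0.3% paying figure corresponds to the top of the 15-25 million range.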

🚨 Anthropic is accusing some Chinese AI labs of creating 24,000 fake accounts and running 16 million prompts to boost their own models.

Anthropic says this extraction relies on distillation (in itself a technically legitimate method), where a smaller AI learns by studying the high-quality answers of a smarter AI.

The Anthropic blog outlines how rival labs bypassed standard API limits to siphon training data for their own models.

Anthropic claims that, to avoid getting blocked, these labs built sprawling proxy networks called hydra clusters. Built on commercial proxies, these clusters constantly shuffle millions of requests across the 24,000 fake accounts to blend in with normal traffic.

This setup prevented any single point of failure, meaning when Anthropic banned one account, the traffic simply shifted to another node. The blog claims DeepSeek used these networks for chain-of-thought elicitation, forcing Claude to write out its internal reasoning step-by-step.

This allowed them to generate massive datasets of reasoning traces to train their own rubric-based reward models for reinforcement learning. They also fed Claude politically sensitive prompts to generate censorship-safe responses, which helped them steer their own models away from restricted topics.

Anthropic also says some labs focused heavily on extracting agentic reasoning and computer-use capabilities, varying their account types to avoid detection. Other labs ran their extraction campaigns live, shifting nearly half their traffic to a newly released Claude model within 24 hours to capture its latest capabilities.

To spot these attacks, Anthropic deployed behavioral fingerprinting and classifiers to catch the highly repetitive prompt structures that distinguish automated data scraping from normal human API usage.
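To make "highly repetitive prompt structures" concrete, here is a toy sketch of one way such a signal could be computed. This is not Anthropic's classifier; the `template_of` normalization and the `repetition_score` metric are hypothetical names and heuristics I made up purely for illustration.

```python
import re
from collections import Counter

def template_of(prompt: str) -> str:
    """Collapse a prompt to a rough structural template (hypothetical heuristic)."""
    text = prompt.lower()
    text = re.sub(r'"[^"]*"', "<str>", text)   # mask quoted payloads
    text = re.sub(r"\d+", "<num>", text)       # mask numbers
    return re.sub(r"\s+", " ", text).strip()   # normalize whitespace

def repetition_score(prompts: list[str]) -> float:
    """Share of prompts that match the single most common template."""
    if not prompts:
        return 0.0
    counts = Counter(template_of(p) for p in prompts)
    return counts.most_common(1)[0][1] / len(prompts)

# A scripted account reuses one template; a human account does not.
scripted = [f"Solve problem {i} and explain your reasoning step by step."
            for i in range(50)]
organic = ["Why is my Flask route returning 404?",
           "Summarize this paragraph for me",
           "Write a haiku about autumn"]

print(repetition_score(scripted), repetition_score(organic))
```

A real system would combine many more signals (timing, account metadata, network patterns), but the idea is the same: scripted traffic collapses into a few templates, while human usage stays varied.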

The Wall Street Journal notes that these coordinated extraction efforts severely undermine the competitive advantage that export controls were designed to protect.

This situation highlights a fundamental flaw in API-based AI access, showing that without strict hardware-level verification, prompt-based data extraction is nearly impossible to stop completely.

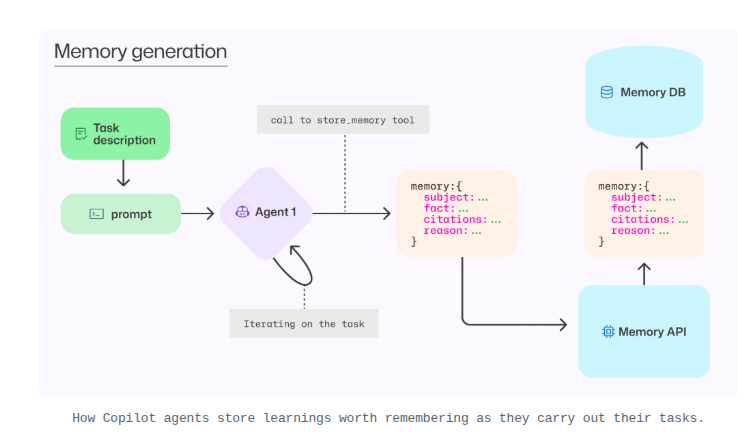

🗞️ GitHub just launched a new cross-agent memory system for Copilot that allows different AI tools to share and learn from your specific coding patterns over time.

Instead of starting every chat from scratch, this system lets the AI store “memories” about your project conventions, like how you name log files or handle database connections.

Any of your favorite agents on the GitHub platform (Copilot, Claude, Codex, c-c-c-combo breaker) can learn across your repo. The system uses a “store_memory” tool where an agent creates a snippet of information along with specific citations to your code to prove the fact is real.

To keep things accurate as your code changes, the agents perform just-in-time verification by checking those citations every time they retrieve a memory. If the cited code has changed or disappeared, the agent simply ignores the old memory or updates it with the new truth.
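Here is a minimal sketch of the store-with-citations and verify-on-retrieval idea. Only the "store_memory" tool name comes from GitHub; the `Memory` and `Citation` shapes and the substring check below are my own assumptions, not Copilot's actual schema or logic.

```python
from dataclasses import dataclass
from pathlib import Path
import tempfile

@dataclass
class Citation:
    path: str      # file the fact was observed in
    snippet: str   # exact text that backed the fact when it was stored

@dataclass
class Memory:
    fact: str
    citations: list[Citation]

def is_still_valid(memory: Memory, repo_root: Path) -> bool:
    """Just-in-time check: every cited snippet must still exist in its file."""
    for c in memory.citations:
        file = repo_root / c.path
        if not file.is_file() or c.snippet not in file.read_text():
            return False
    return True

def retrieve(memories: list[Memory], repo_root: Path) -> list[Memory]:
    """Return only memories whose citations still hold; stale ones are skipped."""
    return [m for m in memories if is_still_valid(m, repo_root)]

# Demo in a throwaway directory standing in for a repository.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "db.py").write_text("conn = pool.acquire()\n")
    mem = Memory("DB connections come from the shared pool",
                 [Citation("db.py", "pool.acquire()")])
    fresh = retrieve([mem], root)    # citation still matches the code
    (root / "db.py").write_text("conn = connect()\n")
    stale = retrieve([mem], root)    # cited code changed, memory is ignored
```

The design point is that verification happens at read time, so nothing has to watch the repo and invalidate memories whenever code changes.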

During testing, this memory feature boosted the success rate of the Copilot coding agent from 83% to 90% for merged pull requests. It also improved the quality of automated code reviews, with developers giving 2% more positive feedback on the AI’s suggestions.

The memory is strictly locked to your specific repository, so your private coding habits are never shared with other users or different projects.

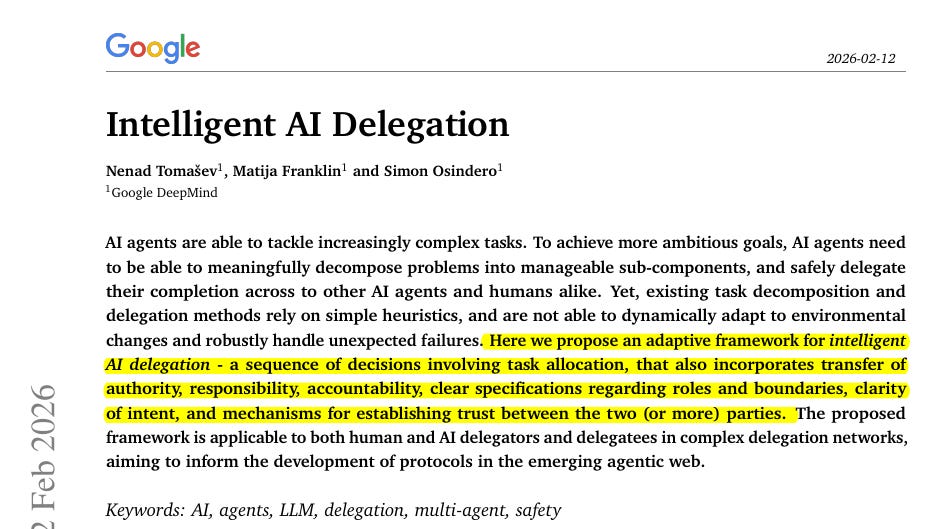

🗞️ A new paper from Google DeepMind lays out a big plan for how we should actually give tasks to AI.

It is not just about telling an AI to do something and hoping for the best. Instead, this framework looks at delegation as a string of choices where you figure out if you should even hand the task over, how to explain it, and how to check the work afterward.

Current systems rely on rigid rules that break when things fail unexpectedly. The researchers suggest building a dynamic market where agents bid on tasks using smart contracts.

This requires strict monitoring and cryptographic proofs to guarantee correct work without leaking private data.

Instead of trusting a simple rating, agents will use verifiable digital certificates to prove their exact skills.

- Keeping things flexible when things change

This new system is built to be adaptive rather than stuck in its ways. It treats the handoff as a live process where authority and responsibility can shift around in real time. If the situation changes or something breaks, the framework helps manage that failure so the whole project does not go off the rails. It works for both humans giving tasks to AI and for when AI needs to handle things on its own.

- Finding the right amount of trust

One of the coolest parts is how it handles trust. They made formal trust models that look at how hard a task is and how well the AI has done in the past. This stops people from “over-delegating,” which is when you give an AI something it is not ready for. It also stops “under-delegating,” which happens when you do all the work yourself even though the AI could have handled it easily.
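As a rough illustration of a trust model that weighs task difficulty against track record, here is a small sketch. The formula (a smoothed success rate discounted by difficulty) and the 0.5 threshold are my assumptions for illustration, not the paper's actual model.

```python
def trust_score(successes: int, failures: int, difficulty: float) -> float:
    """Rough chance the agent succeeds on a task of this difficulty (0..1).

    Track record is a Laplace-smoothed success rate, so a brand-new agent
    starts at 0.5 rather than 0 or 1. Difficulty then discounts it.
    Both choices are illustrative assumptions.
    """
    if not 0.0 <= difficulty <= 1.0:
        raise ValueError("difficulty must be in [0, 1]")
    track_record = (successes + 1) / (successes + failures + 2)
    return track_record * (1.0 - difficulty)

def should_delegate(successes: int, failures: int,
                    difficulty: float, threshold: float = 0.5) -> bool:
    """Delegate only when the estimated trust clears the threshold."""
    return trust_score(successes, failures, difficulty) >= threshold

print(should_delegate(18, 2, 0.2))   # proven agent, easy task
print(should_delegate(18, 2, 0.7))   # same agent, hard task
print(should_delegate(0, 0, 0.1))    # unproven agent, easy task
```

In these terms, handing over a task that scores below the threshold is over-delegating, and keeping a task that scores above it is under-delegating.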

- Double checking the work

You cannot just take an AI’s word for it, so this framework has specific ways to validate the output. It sets up rules for when to accept an answer based on how confident the AI is. It also has backup plans ready to go if the AI fails. This is super important for real world jobs where trusting a machine blindly could cause a bunch of errors to pile up.
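The accept-or-fall-back idea can be sketched in a few lines. The function name `run_with_fallback`, the `(answer, confidence)` return shape, and the 0.8 confidence bar are all hypothetical choices of mine, not something specified in the paper.

```python
from typing import Callable, Tuple

Result = Tuple[str, float]  # (answer, self-reported confidence in 0..1)

def run_with_fallback(task: str,
                      agent: Callable[[str], Result],
                      fallback: Callable[[str], str],
                      min_confidence: float = 0.8) -> Tuple[str, str]:
    """Accept the agent's answer only above a confidence bar; else fall back.

    Returns (answer, source) where source is "agent" or "fallback".
    """
    try:
        answer, confidence = agent(task)
    except Exception:
        # The agent failed outright: use the backup plan.
        return fallback(task), "fallback"
    if confidence >= min_confidence:
        return answer, "agent"
    return fallback(task), "fallback"

# Stand-in agents for the demo.
def confident(task: str) -> Result:
    return "42", 0.95

def unsure(task: str) -> Result:
    return "maybe 41", 0.40

def crashing(task: str) -> Result:
    raise RuntimeError("agent crashed")

def human(task: str) -> str:
    return "escalated to a human reviewer"

print(run_with_fallback("q", confident, human))
print(run_with_fallback("q", unsure, human))
print(run_with_fallback("q", crashing, human))
```

Self-reported confidence is a weak signal on its own, so a real setup would likely pair it with independent checks; the sketch only shows where the acceptance rule and the backup plan sit in the flow.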

- When AI agents hire other AI agents

The framework also covers what happens when 1 AI agent hands a task to another AI agent. The system tracks who is actually accountable and makes sure the right authority is passed down the line so nothing gets lost in the network.

- Making sure the work actually fits

It is a step by step approach to make sure the AI’s contribution actually makes sense for the bigger goal. By treating this as a structured process, they are making it much safer for companies to use AI in their daily operations without worrying about constant mistakes.

🗞️ Demis Hassabis’s “Einstein test” for defining AGI

Demis Hassabis, the CEO of Google DeepMind, thinks we should define AGI by a specific high bar. He said during a talk that his view of AGI has not changed: it is a system that exhibits every cognitive capability a person has.

His test: train a model on all human knowledge but cut it off at 1911, then see if it can independently discover general relativity (as Einstein did by 1915). If yes, it’s AGI.

That’s a wrap for today, see you all tomorrow.