Read time: 10 min

(I publish this newsletter daily: noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (17-April-2026):

🗞️ Claude Opus 4.7 launched as ‘less powerful’ version of Mythos

🗞️ Tencent released HY-World 2.0, a 3D world model that turns text, images, photos, and video into editable scenes instead of flat clips.

🗞️ Perplexity just launched Personal Computer, a Mac feature that lets AI work across local files, native apps, and the browser.

🗞️ AI can boost performance at first and then leave people less able to think through problems on their own.

🗞️ A new paper shows that GitHub stars can be bought at scale, and that the distortion now bleeds into security.

🗞️ OpenAI just expanded Codex from a coding assistant into a desktop agent that can see, click, type, remember your habits, and keep work moving across apps and tools.

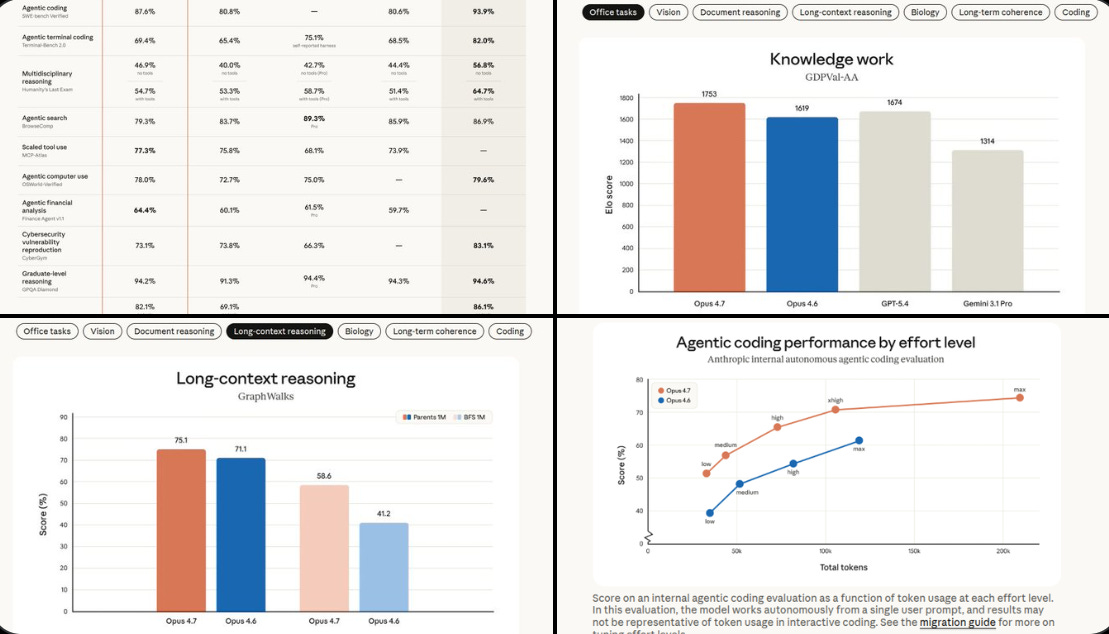

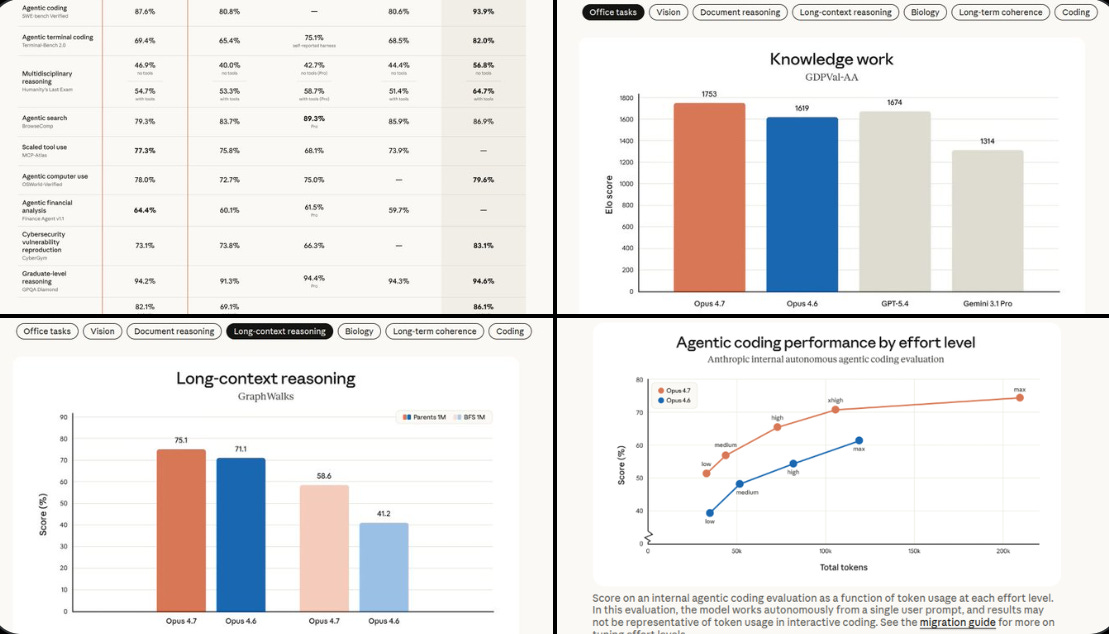

🗞️ Claude Opus 4.7 launched as ‘less powerful’ version of Mythos

It follows instructions more literally, so older prompts and harnesses may need re-tuning.

It supports higher-resolution vision up to 2,576px on the long edge, or about 3.75MP.
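If you pre-process images before sending them, the two numbers above translate into a simple resize rule. Here is a minimal sketch, assuming both limits (2,576px long edge and roughly 3.75MP) are applied together as hard caps; the function name `fit_image` and the exact pixel budget constant are my own illustration, not anything from Anthropic's docs.

```python
import math

MAX_LONG_EDGE = 2576      # px on the long edge, from the announcement
MAX_PIXELS = 3_750_000    # "about 3.75MP", treated as an exact cap here (assumption)

def fit_image(width: int, height: int) -> tuple[int, int]:
    """Return (width, height) scaled down to respect both limits.

    Hypothetical helper: never upscales, and keeps the aspect ratio.
    """
    edge_scale = MAX_LONG_EDGE / max(width, height)
    pixel_scale = math.sqrt(MAX_PIXELS / (width * height))
    scale = min(1.0, edge_scale, pixel_scale)
    # int() truncates, so the result never exceeds either cap.
    return max(1, int(width * scale)), max(1, int(height * scale))

if __name__ == "__main__":
    print(fit_image(1000, 800))    # small image, left untouched
    print(fit_image(5152, 2000))   # limited by the long edge
    print(fit_image(4000, 4000))   # limited by the pixel budget
```

For wide images the long-edge cap bites first; for near-square images the pixel budget is the tighter constraint.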

It has improved file system-based memory for multi-session work. Mythos Preview is still described as much more broadly capable and better aligned.

Anthropic is using Opus 4.7 to test cybersecurity safeguards, including automatic blocking for prohibited or high-risk cyber requests.

Pricing stays at $5/M input tokens and $25/M output tokens.
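To make those rates concrete, here is a quick back-of-the-envelope sketch. The function name `estimate_cost` is just for illustration, and it ignores anything beyond the two list prices quoted above (no caching or batch discounts are assumed).

```python
INPUT_USD_PER_MILLION = 5.0    # $5 per 1M input tokens, as quoted above
OUTPUT_USD_PER_MILLION = 25.0  # $25 per 1M output tokens, as quoted above

def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Rough USD cost of one run at list price (hypothetical helper)."""
    total = (input_tokens * INPUT_USD_PER_MILLION
             + output_tokens * OUTPUT_USD_PER_MILLION)
    return total / 1_000_000

if __name__ == "__main__":
    # Example: a long agentic run with 200K input and 50K output tokens.
    print(f"${estimate_cost(200_000, 50_000):.2f}")
```

Output tokens cost 5x input tokens, so longer, higher-effort responses move the bill more than longer prompts do.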

Opus 4.7 introduces a new xhigh (“extra high”) effort level between high and max, giving users finer control over the tradeoff between reasoning and latency on hard problems. In practice, developers can make Claude spend more time on hard tasks, cap how many tokens it uses during long runs, and trigger a dedicated code review pass that looks for bugs and design issues.

The new /ultrareview slash command produces a dedicated review session that reads through changes and flags bugs and design issues that a careful reviewer would catch.

Token usage can increase because of the new tokenizer and because higher-effort runs can produce more output.

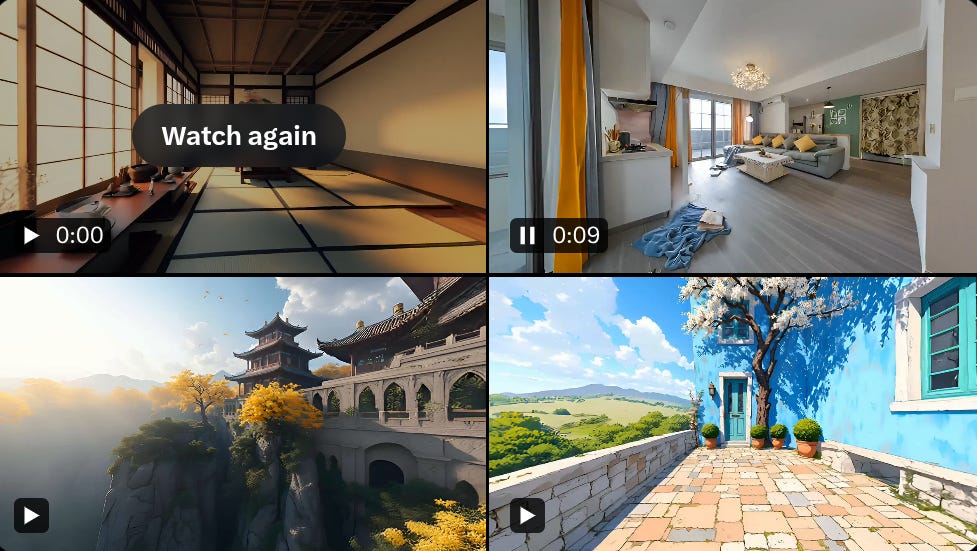

🗞️ Tencent released HY-World 2.0, a 3D world model that turns text, images, photos, and video into editable scenes instead of flat clips.

Most world models predict pixels, which looks fine from one view but often breaks when the camera moves or when you try to reuse the result as a real asset.

HY-World 2.0 tries to build the scene itself, recovering geometry, depth, camera pose, and renderable 3D assets, while WorldMirror 2.0 reconstructs photos or video in 1 feed-forward pass to produce a navigable digital twin.

TencentHunyuan is trying to move world models from “generate the next frame” to “build the place itself.” HY-World 2.0 takes text, a single image, multiview images, or video and aims to output persistent 3D assets such as meshes and Gaussian splats, which are small, soft 3D points that can be rendered quickly from new views.

That is a big deal because a video world model can look interactive while still being only a stream of pixels, whereas a real 3D asset can be re-rendered from new angles, edited later, and imported into tools like Blender, Unity, Unreal, or Isaac Sim.

HY-World does this with a staged pipeline in which HY-Pano 2.0 makes a panorama, WorldNav plans a camera path, WorldStereo 2.0 expands the world, and WorldMirror 2.0 composes the final 3D scene.

Apply for access: https://3d.hunyuan.tencent.com/sceneTo3D

GitHub: https://github.com/Tencent-Hunyuan/HY-World-2.0

Hugging Face: https://huggingface.co/tencent/HY-World-2.0

Technical Report: https://3d-models.hunyuan.tencent.com/world/world2_0/HY_World_2_0.pdf

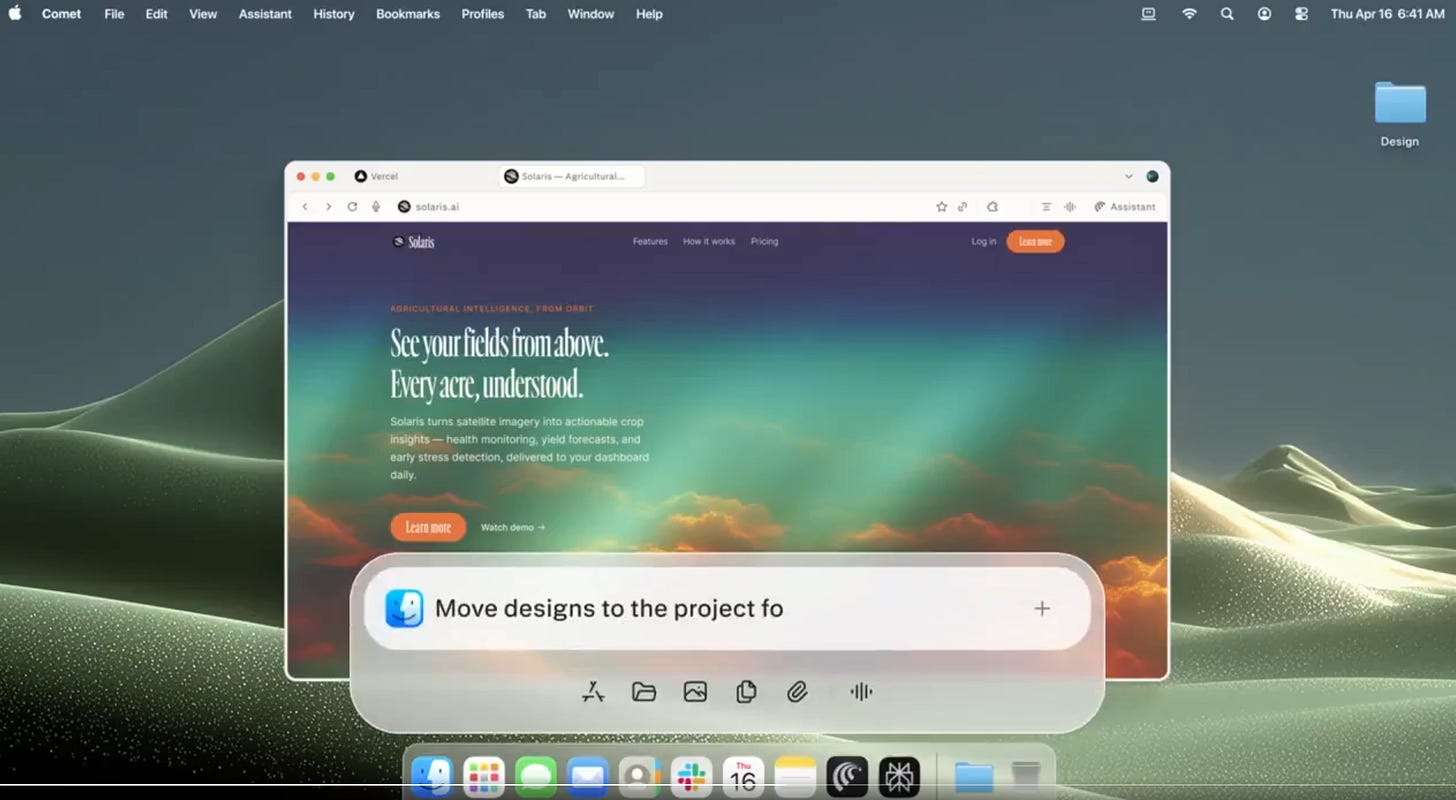

🗞️ Perplexity just launched Personal Computer, a Mac feature that lets AI work across local files, native apps, and the browser.

This is a super strong launch from Perplexity, as the real bottleneck in AI is no longer generating text. It is getting reliable access to the places where work actually lives.

Personal Computer acts like an orchestrator: it can connect to local folders, read and write files, use native Mac apps like Mail, Calendar, and iMessage, and work through the browser from the same layer.

Useful automation needs context from many places at once, not just the text you pasted into one prompt. Perplexity says that when it is set up on a Mac mini, Personal Computer can keep running 24/7, while an iPhone can start tasks remotely and approve 2FA.

Local file access helps with the problems cloud copilots miss. Most useful work lives in half-finished docs, spreadsheets, inbox threads, and app state that never makes it into a clean API.

It’s a hybrid system: the heavy orchestration happens securely on Perplexity’s servers (using 20+ frontier models), while local file and app access runs on your Mac. This makes it far more powerful and contextual than a purely web-based AI.

Key difference from Perplexity Computer (the web/cloud version): Personal Computer is the superset. It does everything the cloud version can do plus direct local machine access. Set it up on a Mac mini and it becomes a true 24/7 agent that keeps working even when your laptop is closed.

🗞️ AI can boost performance at first and then leave people less able to think through problems on their own.

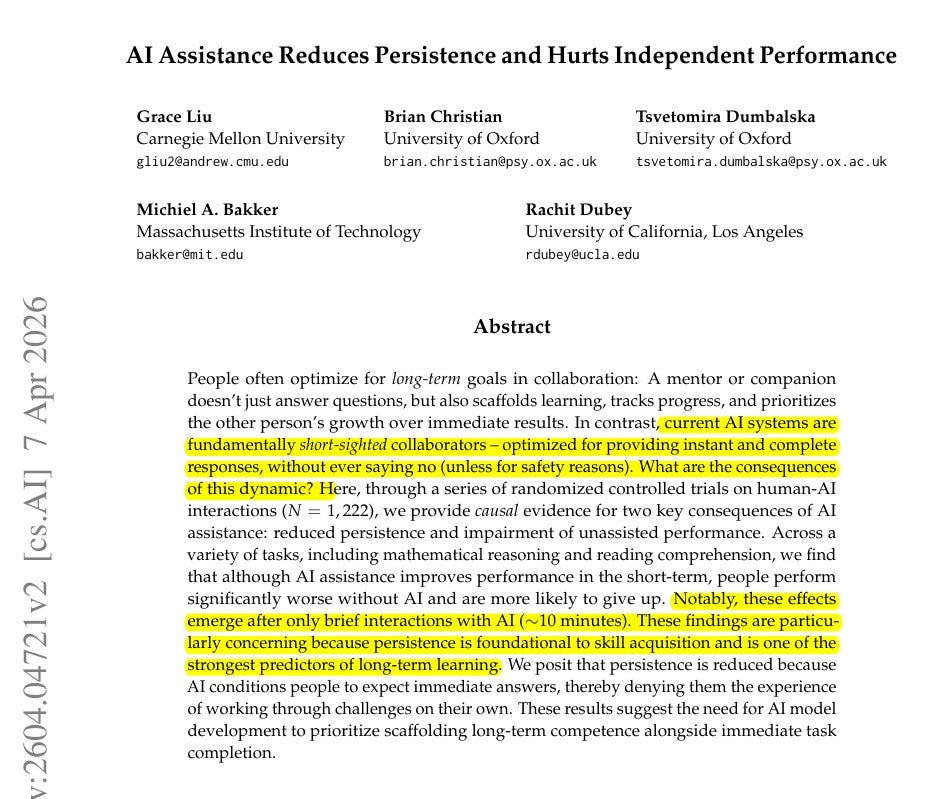

BIG claim from a new paper by MIT, Oxford, Carnegie Mellon, and other top labs:

Just minutes of AI help can improve scores now while weakening independent problem-solving right after.

The interesting part is that the damage is not just lower accuracy.

It is lower persistence, which is usually the hidden engine of learning, because skill grows through repeated contact with difficulty, not just exposure to correct answers.

That’s why a good teacher sometimes withholds help to preserve struggle as part of the lesson, while today’s chatbots are tuned to erase friction on demand.

Across 3 experiments in math and reading, about 1.2K people either worked alone or used a GPT-5-based assistant for part of the task.

Assisted users finished early questions faster, but after roughly 10 minutes without AI, they solved less, stalled more, and quit sooner.

That happens because hard thinking is not only about getting answers; it is also about building the habit of holding a problem in mind, testing steps, and pushing through confusion.

The sharpest drop came from people who used the model for direct answers, not from those who used it more like a hint system, which suggests the real issue is not AI exposure itself but replacing effort with completion.

The result is not that AI makes people less capable by default, but that answer outsourcing can shrink the mental effort that normally trains skill.

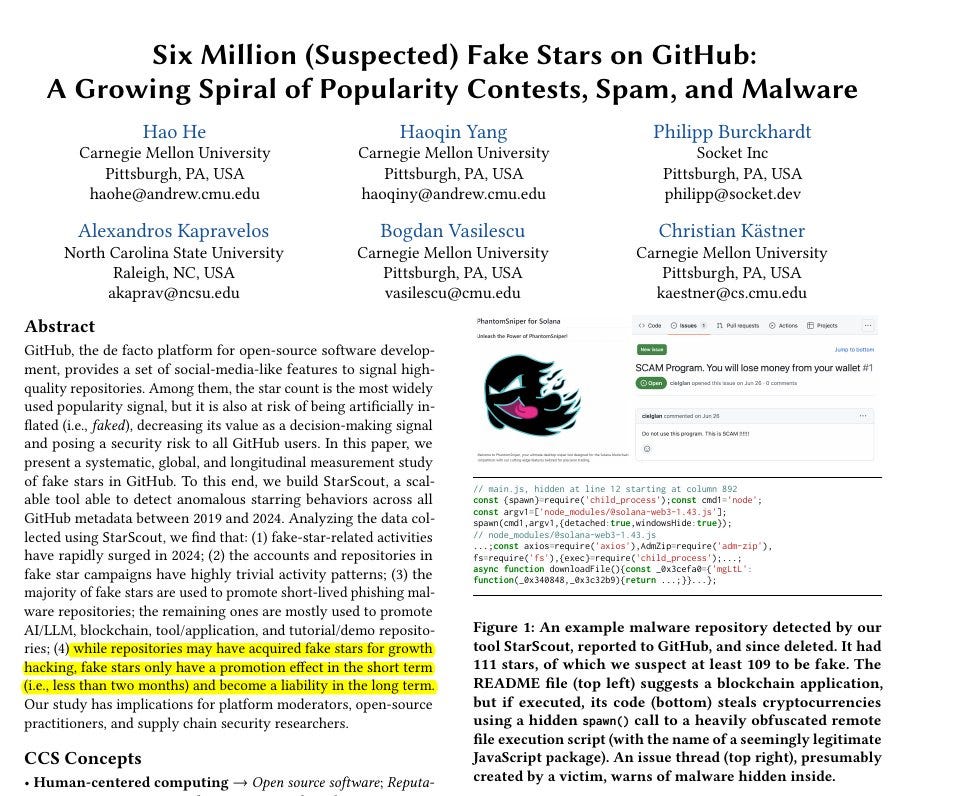

🗞️ A new paper shows that GitHub stars can be bought at scale, and that the distortion now bleeds into security.

The authors identify 6 million suspected fake stars tied to 18,617 repositories.

That matters because stars are not just vanity on GitHub. They are a shortcut people use to decide what looks credible, useful, or safe enough to try, even though earlier work already suggested stars are only a rough proxy for real adoption.

The problem is not just inflated popularity, but the way a weak social signal becomes infrastructure for malware, spam, and low-effort hype once enough people treat it as evidence.

The paper’s detection strategy is clever because it does not need to prove intent account by account.

It looks for behavioral signatures that are hard to fake at scale: throwaway accounts with almost no activity, and coordinated “lockstep” bursts where many accounts star many repositories within short windows.
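To give a feel for the “lockstep” idea, here is a toy sketch. To be clear, this is not the paper's detector: the function name, the fixed one-hour buckets, and the thresholds are all assumptions I picked for illustration. It simply flags pairs of accounts that starred several of the same repositories inside the same time window.

```python
from collections import defaultdict
from itertools import combinations

def lockstep_pairs(events, window_seconds=3600, min_shared_repos=3):
    """Flag account pairs that co-starred many repos in the same time window.

    events: iterable of (account, repo, unix_timestamp) tuples.
    Returns {(account_a, account_b): set_of_repos} for suspicious pairs.
    Hypothetical helper; thresholds are illustrative, not from the paper.
    """
    # Group accounts by (repo, time bucket).
    buckets = defaultdict(set)
    for account, repo, ts in events:
        buckets[(repo, ts // window_seconds)].add(account)

    # For every pair sharing a bucket, record which repo they co-starred.
    shared = defaultdict(set)
    for (repo, _bucket), accounts in buckets.items():
        for a, b in combinations(sorted(accounts), 2):
            shared[(a, b)].add(repo)

    return {pair: repos for pair, repos in shared.items()
            if len(repos) >= min_shared_repos}

if __name__ == "__main__":
    events = [
        ("bot1", "r1", 100), ("bot2", "r1", 200),
        ("bot1", "r2", 4000), ("bot2", "r2", 4100),
        ("bot1", "r3", 7300), ("bot2", "r3", 7400),
        ("human", "r1", 900_000),
    ]
    print(lockstep_pairs(events))
```

Fixed buckets will miss bursts that straddle a bucket boundary, and pairwise comparison gets expensive on large buckets, so a real system would need sliding windows and smarter clustering. The point is only that coordination shows up in timing, without proving anyone's intent.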

What they find is ugly.

Fake-star activity surged in 2024, most flagged repositories were later deleted, many appear to have been phishing or spam, and the surviving non-malicious-looking targets cluster in predictable status games like AI, blockchain, tools, and demos.

The most interesting result is about incentives.

Fake stars do appear to buy a little real attention for less than two months, but the effect is far smaller than genuine popularity and turns negative over time, which suggests that social proof can open the door but cannot compensate for weak underlying substance.

Once a platform’s easiest visible number starts standing in for trust, attackers do not need to beat the system completely; they only need to be believable for a moment.

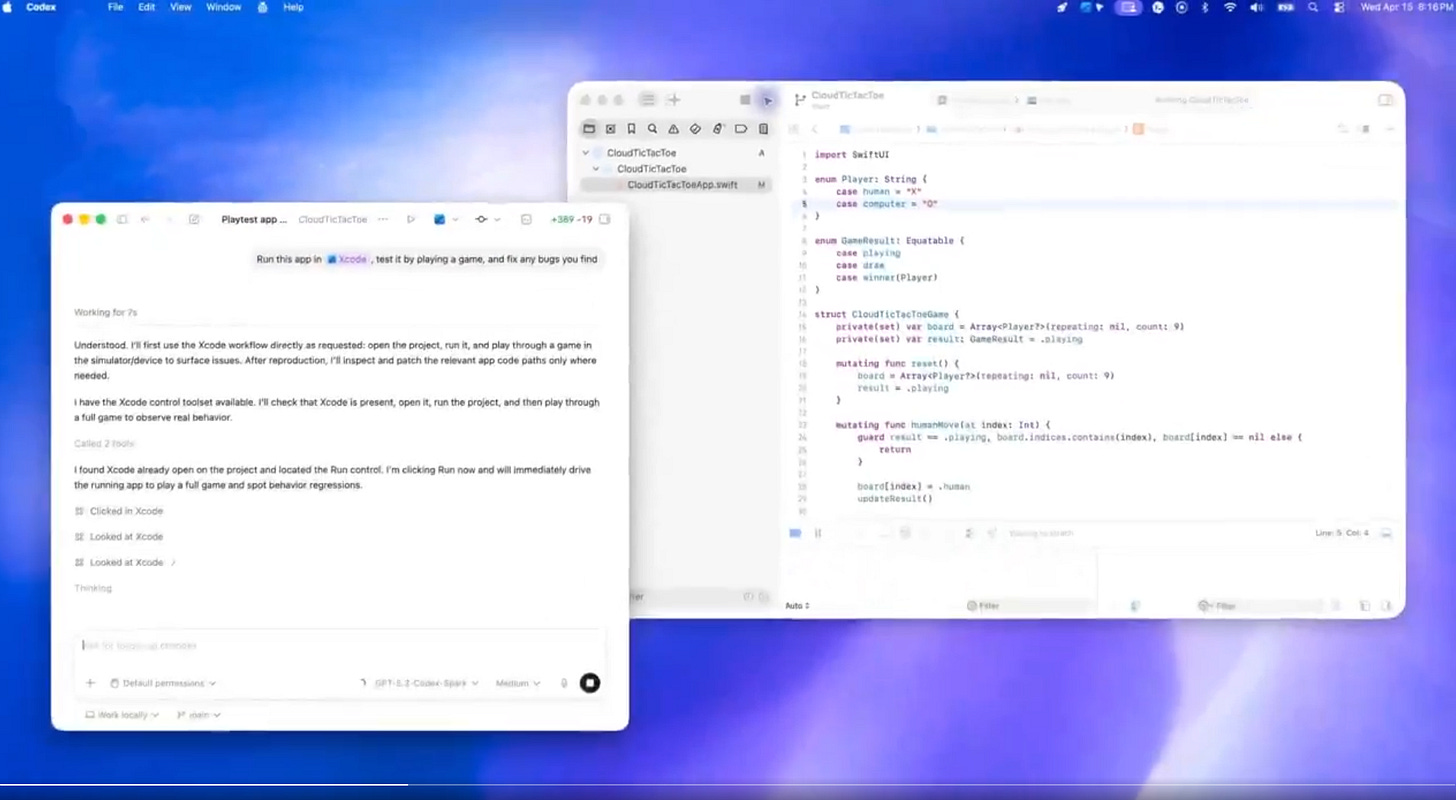

🗞️ OpenAI just expanded Codex from a coding assistant into a desktop agent that can see, click, type, remember your habits, and keep work moving across apps and tools.

The update adds computer use on macOS, an in-app browser, gpt-image-1.5 for design and asset iteration, 90+ plugins, richer terminal and file handling, and parallel agents that can work without taking over your machine.

Before this, Codex was mainly strongest inside coding surfaces like the terminal, editor, and structured tool integrations, so it could write code and run workflows but often hit a wall when work moved into normal desktop apps and screen-based interfaces.

Now the big change is computer use plus memory plus long-running automations: Codex can operate Mac apps by looking and clicking like a person, remember how you work, and return later to continue the same task with context still intact.

It also adds thread-aware automations and preview memory, so Codex can resume long jobs with prior context, reuse your preferences and corrections, and handle repeatable work like PR follow-ups or app testing.

That’s a wrap for today. See you all tomorrow.