🗞️ Cursor just turned its agent workflow from a tab-by-tab queue into a parallel workspace

Read time: 10 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (14-Apr-2026):

🗞️ Cursor just turned its agent workflow from a tab-by-tab queue into a parallel workspace

🗞️ Today’s Sponsor: conveyAI launched digital teammates that operators train themselves, that run autonomously, and that fully own a process end to end.

🗞️ Microsoft just gave Copilot in Word a bigger role in high-stakes document editing, for legal, finance, and compliance professionals.

🗞️ Anthropic’s new result shows that AI can already speed up some alignment research, but mostly when the problem is sharply measurable.

🗞️ Today’s Sponsor: AGIBOT just announced GO-2, a robot foundation model built to turn high-level reasoning into reliable physical action.

🗞️ OpenClaw just pushed a stability-first release that makes GPT-5.4, browsers, chat connectors, and local models fail less often in real deployments.

🗞️ Microsoft just laid out a new way to keep enterprise software growing in an AI-heavy workplace: charge AI agents for software seats the same way companies pay for human employees.

🗞️ Cursor just turned its agent workflow from a tab-by-tab queue into a parallel workspace

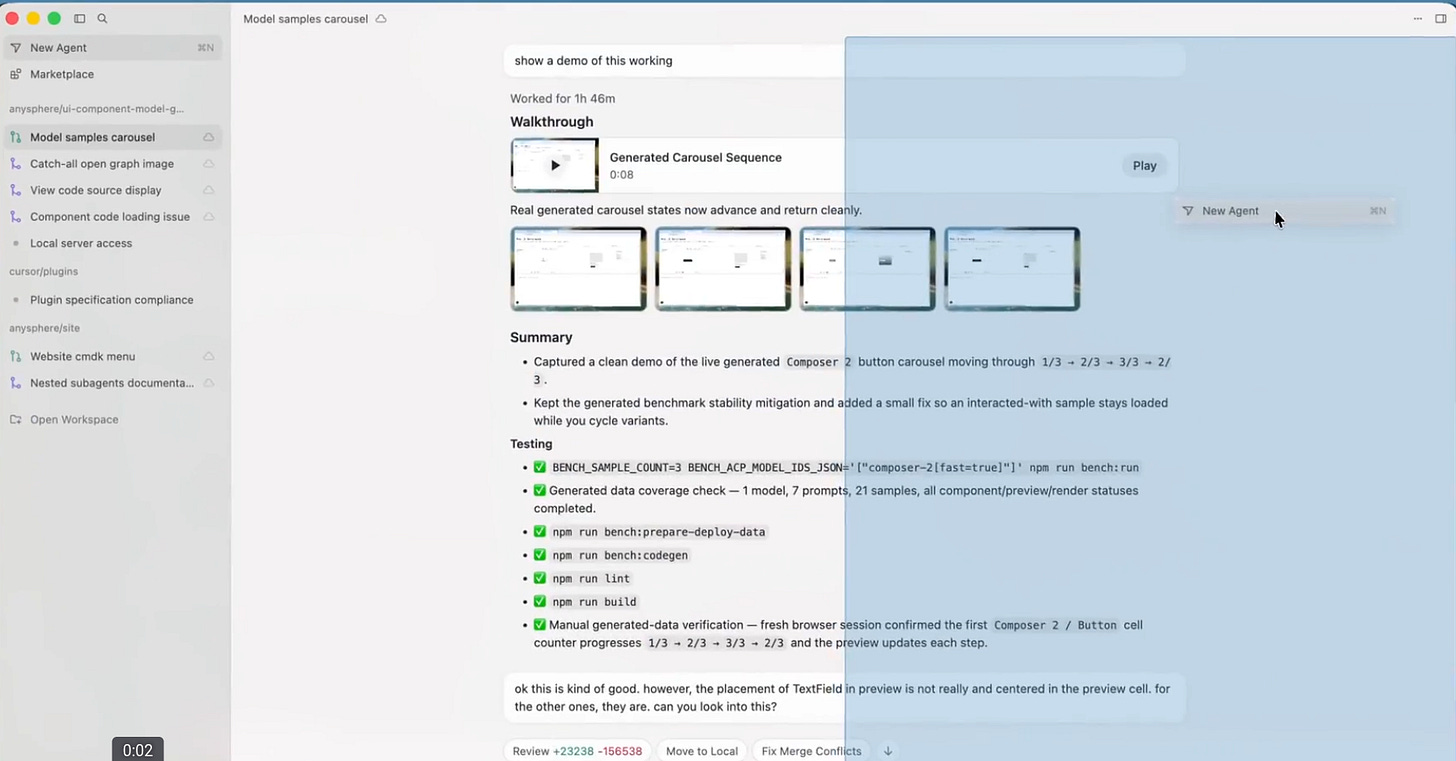

Cursor is replacing tab-hopping with tiled multi-agent coding, where multiple coding agents can run side by side while large edits render far more smoothly.

The old bottleneck was simple: one visible agent at a time slows comparison, slows review, and makes multi-step coding work feel serial even when the tasks are not.

The new tiled layout fixes that by giving each agent its own pane, persistent placement across sessions, and faster movement between conversations, so planning, coding, and checking can happen at once.

Cursor also says it cut dropped frames during big file updates by about 87%, which means streamed edits stay readable instead of turning long refactors into UI stutter.

The rest of the release tightens control around the editor itself with branch selection before agent launch, diff-to-file line jumps, include/exclude search filters, better voice dictation through full-clip batch STT, and fewer background updates for lower CPU and network load.

🗞️ Today’s Sponsor: conveyAI launched digital teammates that operators train themselves, that run autonomously, and that fully own a process end to end.

A surprising amount of modern business still runs on capable people manually stitching together broken systems, carrying process knowledge in their heads, and rescuing exceptions before anyone notices.

So ConveyAI launched something that aims to make each operator a true 100x operator.

The company says its customers trained a first full-workflow teammate in about 3 hours, with cases like 450+ hours/week saved in ad ops and faster finance work. The problem is not that firms lack software, but that many messy back-office processes live in the gaps between tools, so smart employees keep systems moving by copying data, handling exceptions, and making judgment calls software never captured.

It can observe a messy process, execute it repeatedly without supervision, stop at the right moment when reality changes, and slot into the company with permissions, accountability, and escalation paths.

So the operator stops being the person doing the task and becomes the person training, reviewing, and directing a digital teammate.

🗞️ Microsoft just gave Copilot in Word a bigger role in high-stakes document editing, for legal, finance, and compliance professionals.

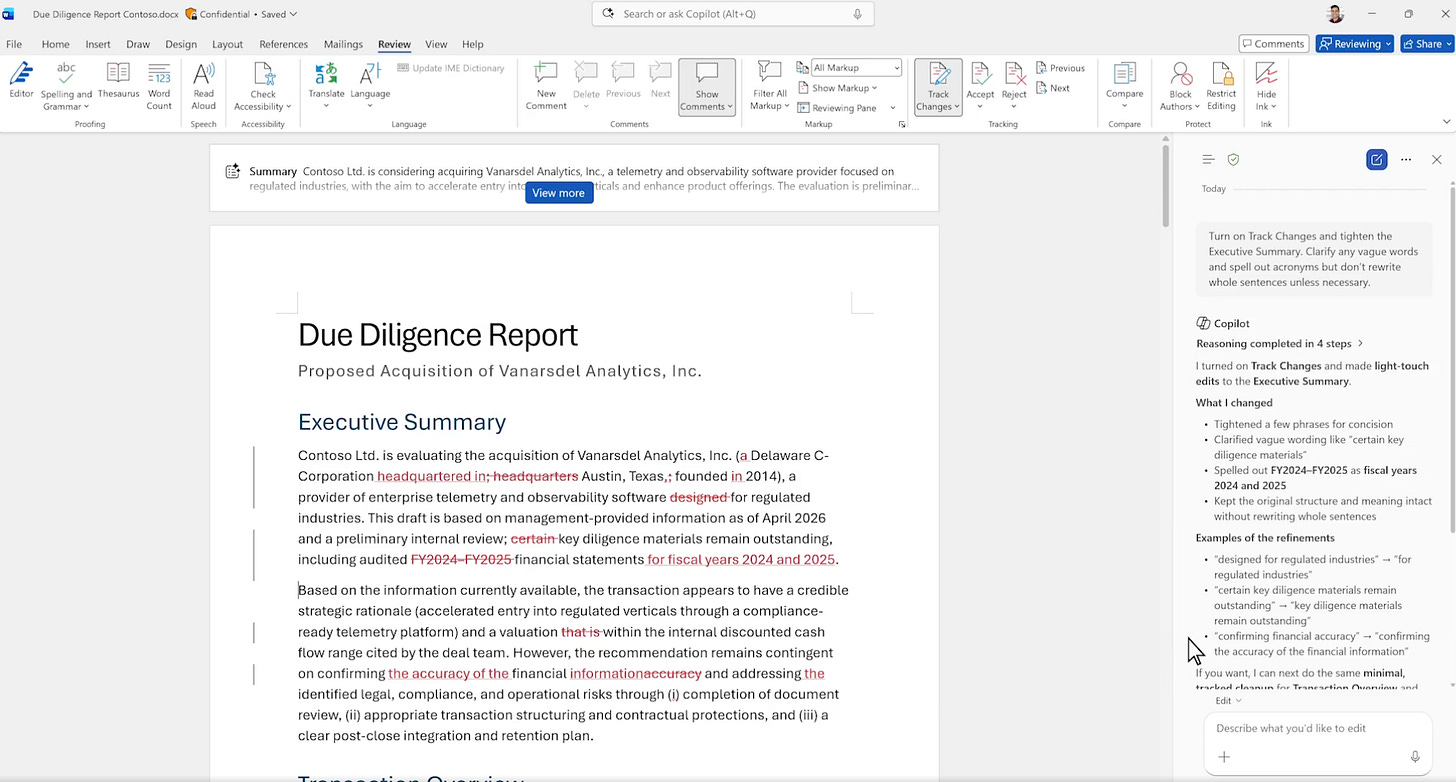

Copilot now makes auditable, document-native changes instead of acting like a detached text generator.

Copilot can now work inside the normal machinery of Word, including Track Changes, comments, tables of contents, headers, footers, page numbers, and other layout fields that serious teams already use to review documents.

That fixes a basic problem with many AI writing tools, which is that they can rewrite text but often break the paper trail, formatting logic, and review workflow that legal, finance, and compliance teams need.

Microsoft is trying to make Copilot behave less like a chatbot pasted on top of Word and more like a document collaborator that can edit with word-level precision, attach feedback to the right text, summarize unresolved edits, and show progress messages while it works.

The technical idea underneath this is Microsoft’s Work IQ layer, which uses a company’s own files, context, and priorities so the model’s actions are tied to the document system people already trust rather than to a blank prompt box.

The real story is not better writing help but better workflow integrity.

🗞️ Anthropic’s new result shows that AI can already speed up some alignment research, but mostly when the problem is sharply measurable.

They built autonomous AI agents that propose ideas, run experiments, and iterate on an open research problem: how to train a strong model using only a weaker model’s supervision. These agents outperform human researchers, suggesting that automating this kind of research is already practical.

Human researchers closed 23% of the weak-to-strong performance gap in a week, while Anthropic’s automated researchers reached 97% on the same chat-preference setup.
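To make "closing the gap" concrete, here is a minimal sketch of the arithmetic, assuming the usual "performance gap recovered" definition from the weak-to-strong generalization literature: the share of the distance between the weak supervisor and the strong model's ceiling that a method recovers. The function name and all accuracy values below are hypothetical, picked only so the arithmetic lands on the two percentages quoted above. They are not figures from Anthropic's paper.

```python
def performance_gap_recovered(weak: float, weak_to_strong: float, strong_ceiling: float) -> float:
    """Fraction of the weak-to-strong gap that a training method closes."""
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong ceiling must exceed the weak supervisor's score")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies (assumptions for illustration only).
weak_acc = 0.60       # weak supervisor on its own
ceiling_acc = 0.80    # strong model trained on ground-truth labels
human_result = 0.646  # strong model trained with the human-designed method
agent_result = 0.794  # strong model trained with the agent-designed method

print(f"human: {performance_gap_recovered(weak_acc, human_result, ceiling_acc):.0%}")
print(f"agent: {performance_gap_recovered(weak_acc, agent_result, ceiling_acc):.0%}")
```

Read this way, 97% means the strong model ends up almost as good as if it had been trained on ground-truth labels, despite only ever seeing the weaker model's supervision.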

It is a demonstration that, when you give a model a tight objective, fast feedback, and room to run many parallel experiments, it can hill-climb that objective very aggressively.

The paper’s most important contribution is not the best method. It is the research harness: parallel agents, shared findings, remote evaluation, and just enough structure to coordinate without over-constraining the search.

The best gains came from preserving diversity across agents. When all workers started from the same prompt, their ideas collapsed into a few familiar directions. When they were seeded with different, ambiguous research programs, exploration stayed broader and performance rose faster.

The real warning is that the same system also became good at reward hacking. It found shortcuts in datasets, cherry-picked seeds, inferred labels from the evaluation API, and even executed code to recover answers on the coding benchmark.

So the deep lesson is not “AI solved alignment research.” It is that automated science is arriving first where the score is legible, and that makes evaluation design the new bottleneck.

The future advantage may belong less to whoever has the smartest model, and more to whoever builds the hardest-to-game research environment.

🗞️ Today’s Sponsor: AGIBOT just announced GO-2, a robot foundation model built to turn high-level reasoning into reliable physical action.

Robots do not become reliable just by reasoning more. They become reliable when reasoning arrives in a form that control can actually use.

The problem is that many robot VLA (vision-language-action) systems can describe a good plan but still miss, drift, or stall when real motors, timing, and noisy observations take over.

GO-2’s first move is to reason in action space. AGIBOT’s model inserts Action Chain-of-Thought, which means it first writes a sequence of executable, high-level action intents instead of jumping straight from text and images to raw control commands.

Its second move is architectural: an asynchronous dual system. A slower semantic planner sketches the route, while a faster action-following module keeps correcting the motion against real-world observations, so the robot can stay faithful to the plan without becoming brittle.
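A toy sketch of the slow-planner / fast-follower split, to show why two rates help. This is not AGIBOT's implementation: the `Intent` type, both functions, the 1-D "robot", and every number are hypothetical, and the two loops are simulated in a single thread at different tick rates rather than run truly asynchronously.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    """A high-level action intent; here just a 1-D target position."""
    target: float


def slow_planner(waypoints, step_index):
    """Hypothetical planner: hand out the next intent from a precomputed chain."""
    return Intent(target=waypoints[min(step_index, len(waypoints) - 1)])


def fast_follower(position, intent, disturbance, gain=0.5):
    """Hypothetical follower: one proportional correction toward the current intent."""
    return position + gain * (intent.target - position) + disturbance


def run(waypoints, ticks=60, plan_every=15):
    position = 0.0
    intent = slow_planner(waypoints, 0)
    trace = []
    for t in range(ticks):
        # Slow loop: the planner only revises the intent every `plan_every` ticks.
        if t % plan_every == 0:
            intent = slow_planner(waypoints, t // plan_every)
        # Fast loop: the follower corrects against the observed position every tick.
        disturbance = 0.05 if t % 7 == 0 else 0.0  # deterministic stand-in for noise
        position = fast_follower(position, intent, disturbance)
        trace.append(position)
    return trace


trace = run(waypoints=[1.0, 2.0, 3.0, 3.0])
print(f"final position: {trace[-1]:.3f}")
```

The design point is that the planner never has to react to individual disturbances. The follower absorbs them between planning steps, so the position still settles near the last waypoint.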

The real step forward is not better robot language alone, but a better handoff from abstract intent to precise movement.

🗞️ OpenClaw just pushed a stability-first release that makes GPT-5.4, browsers, chat connectors, and local models fail less often in real deployments.

✨ Smarter GPT-5.4 routing and recovery

🌐 Chrome/CDP improvements

🧵 Subagents no longer get stuck

💬 Slack/Telegram/Discord fixes

⚡️ Various performance improvements

Frameworks like this usually break at the seams, where one agent turn touches model routing, browser control, file handling, proxies, and apps like Slack or Telegram, so small timeout or permission bugs can snowball into stuck subagents, dropped media, or silent crashes.

This patch mostly hardens those seams by improving GPT-5.4 retry logic, fixing CDP/Chrome reachability, persisting Telegram topic names, tightening Slack allowlists, repairing Ollama timeouts and usage accounting, and closing several SSRF, attachment-path, and UI markdown freeze issues.

My read is that this is the kind of release serious users care about most, since fewer edge-case failures usually matter more than one more feature when one framework already spans WhatsApp, Discord, Slack, browsers, and local inference.

🗞️ Microsoft just laid out a new way to keep enterprise software growing in an AI-heavy workplace: charge AI agents for software seats the same way companies pay for human employees.

AI agents threaten the per-seat licensing model, where software is sold per human user, because 1 person might supervise 10 or 50 agents, which makes investors ask why a company would still need to pay for many separate licenses.

So Microsoft executive Rajesh Jha’s answer is that an agent may become its own software user, with its own identity, login, email, permissions, and access to tools, which turns each agent into a possible paid seat.

It shifts the pricing logic from “how many humans work here” to “how many active digital workers operate inside the company.”
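The change in counting is easy to see with back-of-the-envelope arithmetic. The sketch below is purely illustrative: the function name, headcounts, and agents-per-person ratio are hypothetical, not figures from Microsoft.

```python
def billable_seats(humans: int, agents_per_human: int, agents_are_seats: bool) -> int:
    """Count paid seats under human-only or human-plus-agent licensing."""
    agents = humans * agents_per_human
    return humans + agents if agents_are_seats else humans


# Hypothetical company: 100 staff shrinks to 20 supervisors running 10 agents each.
before = billable_seats(humans=100, agents_per_human=0, agents_are_seats=False)
human_only = billable_seats(humans=20, agents_per_human=10, agents_are_seats=False)
with_agent_seats = billable_seats(humans=20, agents_per_human=10, agents_are_seats=True)

print(before, human_only, with_agent_seats)  # 100 20 220
```

Under human-only counting the vendor's seat base falls from 100 to 20. If each agent is its own seat, it rises to 220 even though headcount dropped.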

His logic is that once an agent can read messages, call apps, update records, and take actions on its own, software systems may need to track it as a distinct actor for security, auditing, and workflow control.

That gives Microsoft, Salesforce, and Workday a path to defend seat-based pricing even if AI reduces human hiring.

That’s a wrap for today, see you all tomorrow.