Ex-Twitter CEO’s firm Block to replace almost 50% workforce with AI

AI crosses new thresholds: Martian launches its massive coding benchmark, Google advances image creation with Nano Banana 2, and Anthropic makes a principled stand against the Pentagon.

Read time: 9 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (27-Feb-2026):

🗞️ Ex-Twitter CEO’s firm Block to replace almost 50% workforce with AI

🗞️ Martian open-sourced the largest coding benchmark ever to evaluate how well AI agents review your daily code

🗞️ AI has started crossing a key threshold. We better get ready.

🗞️ Google has a massive lead in image creation and editing.

🗞️ Anthropic stood their ground and told the United States Department of Defense a strong “NO”

🗞️ Ex-Twitter CEO’s firm Block to replace almost 50% workforce with AI

Most people are not ready for what’s coming in their jobs and careers. Jack Dorsey, co-founder of Twitter and CEO of Block, just fired almost half of Block’s employees (4,000) because AI has made their roles redundant. He says the business is actually doing great, but new AI coding tools allow single engineers to do the work of entire teams.

Block can operate much faster with a significantly smaller group of highly talented engineers. Fired workers will receive 20 weeks of base salary alongside 6 months of healthcare coverage.

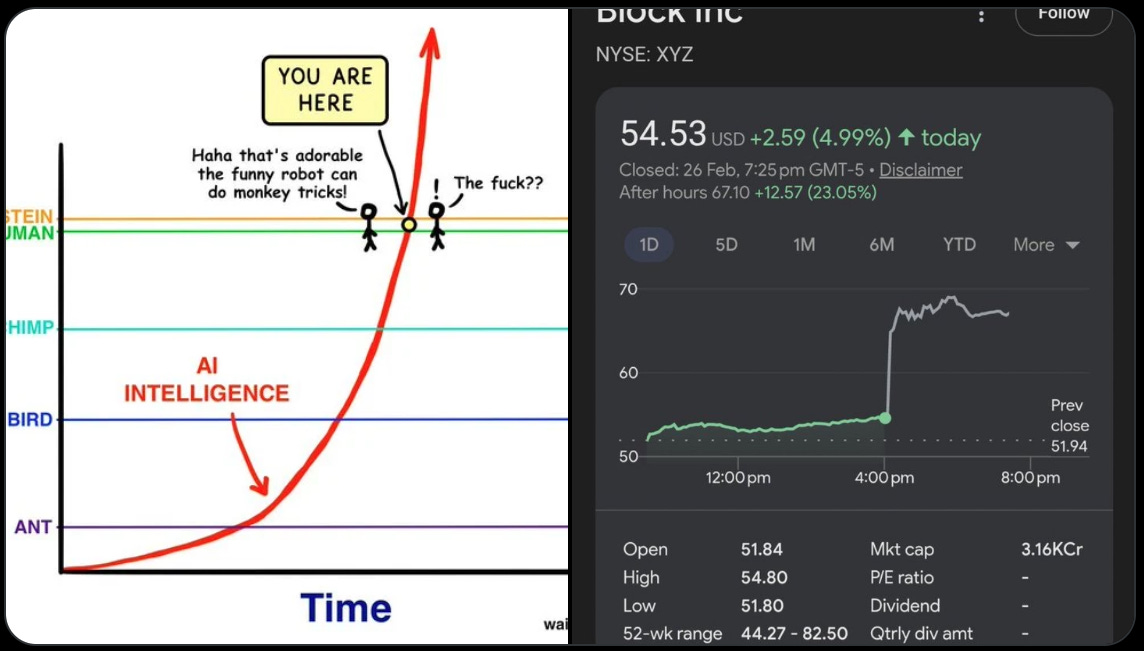

Block expects to spend up to $500mn in restructuring costs, but Wall Street loved the move and sent its stock price up by 24%. A permanent industry shift has started, where software companies will simply refuse to hire large human teams for tasks that algorithms can execute instantly.

The harsh economic reality is that AI represents a fundamental shift in efficiency.

If a country or a massive corporation places strict constraints on layoffs to protect existing jobs, they will eventually lose their competitive edge. The market will always favor the most efficient path.

We saw this with Block: its stock soared more than 27% in after-hours trading after its CEO, Jack Dorsey, announced it was laying off more than 4,000 of its 10,000-some employees due to efficiency gains from

🗞️ Martian open-sourced the largest coding benchmark ever to evaluate how well AI agents review your daily code

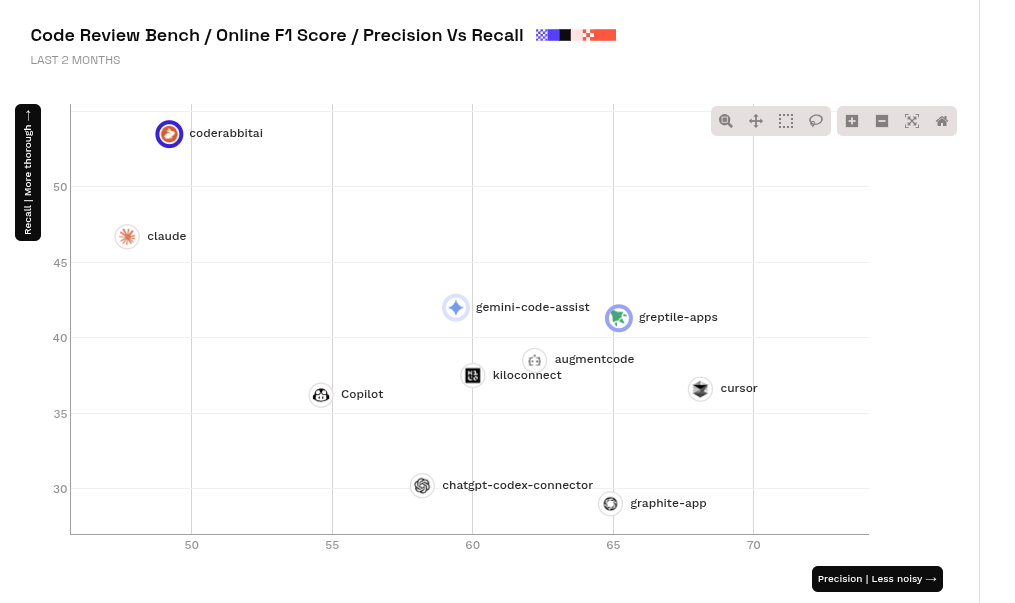

This is the first independent code review benchmark: 200,000+ PRs, unbiased, fully OSS, and updated daily. And this is the official GitHub.

The fundamental problem with testing AI coding agents right now is training data leakage. Companies evaluate their new tools on static datasets of old code bugs. The language models powering these tools have usually already seen those exact bugs during their training phase.

This setup creates artificially high scores that do not reflect how the bot will perform on entirely new code. For example, OpenAI deprecated the hugely popular SWE-bench Verified dataset due to model memorization and data contamination.

Martian fixes this flaw by creating a living benchmark that measures exactly how well AI tools review code.

The framework analyzes over 200,000 pull requests to see if tools actually catch bugs or just generate useless noise. It operates using a dual system consisting of an offline component and an online component.

The offline system uses a fixed dataset of 50 manually verified pull requests from major open-source projects like Sentry and Grafana. Human experts label the true underlying issues in these specific repositories to create a baseline of golden comments.

A judge model then reads the AI tool’s review and compares it against those human-verified golden comments. The judge calculates a precision score to see how many of the bot’s comments were actually useful.

It also calculates a recall score to reveal what percentage of the actual real-world bugs the bot successfully caught. The online system operates continuously to completely eliminate any chance of data leakage.
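The offline precision and recall scores can be sketched in a few lines. This is only an illustration under stated assumptions, not Martian’s actual implementation: the function name `score_review`, the golden-comment IDs, and the idea that the judge returns one matched ID (or nothing) per bot comment are all hypothetical.

```python
from typing import Optional


def score_review(matches: list[Optional[str]], golden_ids: set[str]) -> tuple[float, float]:
    """Return (precision, recall) for one tool's review of one pull request.

    matches:    one entry per bot comment (hypothetical judge output): the ID
                of the golden comment it was matched to, or None if no match.
    golden_ids: IDs of all human-verified golden comments for this PR.
    """
    # Bot comments that matched a real golden comment count as useful.
    useful = [m for m in matches if m is not None and m in golden_ids]
    # Distinct golden issues caught (two comments on the same bug count once).
    caught = set(useful)

    # Precision: share of the bot's comments that were useful.
    precision = len(useful) / len(matches) if matches else 0.0
    # Recall: share of the known real issues that the bot caught.
    recall = len(caught) / len(golden_ids) if golden_ids else 0.0
    return precision, recall


# Made-up example: 4 bot comments, 3 golden issues, 2 correct matches.
precision, recall = score_review(["g1", None, "g2", None], {"g1", "g2", "g3"})
print(f"precision={precision:.2f} recall={recall:.2f}")
```

In this made-up case the tool is right half the time it speaks (precision 0.50) and catches two of the three known issues (recall 0.67). A noisy bot drags precision down; a timid one drags recall down.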

It automatically pulls brand new pull requests directly from GitHub shortly after an AI bot leaves a review comment. The judge model analyzes the code changes the human developer actually merged after reading the bot’s feedback.

If the human developer implemented the exact fix the bot suggested, the tool earns a point for accuracy. This ensures the benchmark always uses fresh code that no AI model could have possibly seen during training.
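One way to picture the online scoring is as a per-tool tally of adopted fixes. The sketch below assumes the judge emits a simple yes/no verdict per bot comment; the `JudgedComment` record, its field names, and the tool names are hypothetical, and the real pipeline may weigh things differently.

```python
from dataclasses import dataclass


@dataclass
class JudgedComment:
    tool: str          # name of the AI review tool (hypothetical field)
    fix_adopted: bool  # judge's verdict: did the merged code implement the fix?


def adoption_rate(comments: list[JudgedComment]) -> dict[str, float]:
    """Fraction of each tool's comments whose suggested fix was merged."""
    totals: dict[str, int] = {}
    points: dict[str, int] = {}
    for c in comments:
        totals[c.tool] = totals.get(c.tool, 0) + 1
        # One point each time the developer merged the suggested fix.
        points[c.tool] = points.get(c.tool, 0) + int(c.fix_adopted)
    return {tool: points[tool] / totals[tool] for tool in totals}


# Made-up verdicts for two placeholder tools.
rates = adoption_rate([
    JudgedComment("tool_a", True),
    JudgedComment("tool_a", False),
    JudgedComment("tool_b", True),
])
```

Because every verdict comes from a pull request merged after the review was posted, a tally like this is built only from code the underlying model could not have seen in training.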

Current results show that tools like Augment excel at precision on very large code changes. Open-source solutions like KiloCode are also performing exceptionally well across multiple different scoring metrics.

Code Review Bench brings much-needed transparency to an industry where companies previously just graded their own homework. I think this dual-layered approach is exactly what the industry needs to push past the current plateau of easily manipulated static benchmarks.

It forces developers to build tools that genuinely understand code context rather than just regurgitating memorized patterns. Check them out here. And here’s the official announcement video.

🗞️ AI has started crossing a key threshold. We better get ready.

Several genuinely tectonic shifts happened over the last seven to ten days.

A single Anthropic blog post on COBOL coding can cause IBM to lose more than $31B in a single day.

After the launch of Claude Cowork automation plugins, Wall Street had a sudden, terrifying realization: traditional software companies make their money by charging “per seat” (per human user). If AI agents start doing the work of multiple humans, companies won’t need to buy as many software licenses. This panic triggered a massive selloff, wiping out roughly $285 billion in market value across global software stocks in a single day.

Jack Dorsey can slash 50% of a $31B company in a single day because of AI efficiency.

A single viral Substack post from Citrini Research (painting a dystopian “Global Intelligence Crisis” with AI causing 10%+ unemployment, white-collar bloodbath, and a 38% S&P 500 crash by 2028) wipes billions off Visa, Mastercard, American Express and other payment giants in one trading session — while slamming the Dow Jones more than 800 points in a single day. Wall Street went into full panic mode over the AI “doomsday scenario” becoming real.

GPT-5.2 Pro solved a decade-old quantum physics mystery in 12 hours this month (mid-Feb). The model was tasked with a theoretical physics puzzle regarding “gluon interactions” that had baffled human physicists for over ten years. In just 12 hours, the AI developed a completely new, mathematically sound proof showing that these interactions, previously assumed by scientists to be impossible under certain conditions, actually occur. The proof was independently verified by researchers at Harvard and Cambridge.

AI discovers 25 new materials to upend the global EV supply chain. On February 19, researchers announced that an AI system had autonomously mapped over 67,000 magnetic compounds and successfully discovered 25 entirely new materials that stay magnetic at high temperatures.

Why is this earth-shattering?

It creates a realistic path to break the world’s reliance on expensive rare-earth metals for electric vehicles, smartphones, and wind turbines. AI just bypassed decades of slow, traditional trial-and-error lab testing to instantly rewrite the multi-billion-dollar clean energy supply chain.

Amazon axes 16,000 corporate jobs in one announcement (January 2026), explicitly tying it to AI “agentic” workflows and efficiency overhauls. This helped fuel the highest monthly U.S. job-cut total since 2009 (108,435 announced), with AI now openly blamed for thousands of them, and OpenAI CEO Sam Altman calling out companies for “AI-washing” their layoff lists.

A little-known startup (Altruist Corp.) drops one new AI tax-strategy tool and instantly torches wealth-management giants: Charles Schwab, Raymond James, and LPL Financial all plunge 7%+ in a single day — their steepest drops since the 2018 trade-war meltdown — erasing billions in market value as investors freak out that AI is about to eat their entire business model.

Goldman Sachs issues a bombshell warning that accelerating AI adoption is already causing 5,000–10,000 net job losses per month in exposed sectors and could actually raise the U.S. unemployment rate in 2026. The report itself triggered fresh market jitters and reinforced the “AI is coming for white-collar jobs faster than anyone expected” narrative.

🗞️ Google has a massive lead in image creation and editing with its newly launched Nano Banana 2

Newly dropped Nano Banana 2 is just plain impressive.

They already owned the top spot with Nano Banana 1, and now they have managed to break their own record. The ability to finally maintain high resemblance is a breakthrough for image models, and Google calls this subject consistency.

The core of this update relies on the Gemini 3.1 Flash Image architecture. This setup allows the model to actively pull real-time information from web searches while it builds your image.

Connecting to the web instantly helps the system understand exactly what specific things should look like. Another massive improvement is how well this model handles actual text inside the pictures.

You can now generate marketing materials where the spelled words are completely legible and accurate. The system can even translate the text inside the generated image into different languages automatically.

The model can keep the exact same look for up to 5 different characters and 14 distinct objects across multiple images in a single workflow. You get total control over the size of your creations, supporting resolutions from 512 pixels up to 4K.

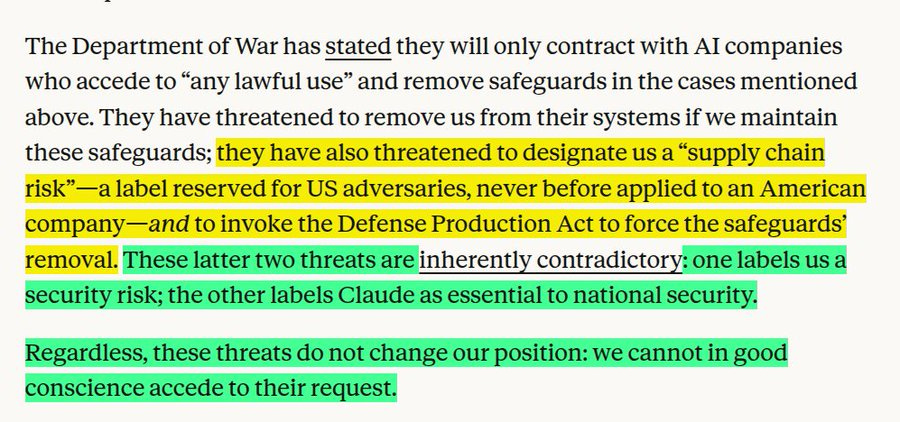

🗞️ Anthropic stood their ground and told the United States Department of Defense a strong “NO”

The Pentagon required that Anthropic’s AI models be offered for “all lawful purposes” in classified settings. This means the government wanted a blank check to use the technology without any of the company’s ethical rules getting in the way. Anthropic pushed back and rejected this demand entirely because of 2 red lines that they refuse to cross.

The first issue is about mass domestic surveillance, which means using AI to sweep up massive amounts of data to spy on everyday citizens on a giant scale. Anthropic argues that current laws are way behind the technology, and letting AI automatically build a complete picture of a person’s life goes against basic democratic freedoms.

The second issue is about fully autonomous weapons, which are military systems that can pick a target and launch an attack completely on their own without a human pulling the trigger. Anthropic strongly believes that even the best AI systems today are simply not reliable enough to make life-or-death decisions on the battlefield.

They warned that putting this unreliable software in charge of lethal weapons would put both troops and innocent civilians at massive risk. Basically, the military argued that they should be the ones to decide what is legal and safe during high-pressure missions, not a private tech company.

But Anthropic stood its ground, stating that while they want to help defend the country, handing over a system that could easily make a lethal mistake or spy on innocent people is something they simply cannot do in good conscience. To note, the Pentagon’s requirement that AI models be offered for “all lawful purposes” in classified settings is not unique to Anthropic. While Anthropic’s models have been the only ones used in classified settings to date, xAI recently signed a contract under the “all lawful purposes” standard for classified work.

That’s a wrap for today, see you all tomorrow.