🚨 Meta acquired Moltbook, a social network where AI agents can interact and coordinate tasks on behalf of their human owners

Explore MedOS’s medical AI, AMI Labs’ $3.5B pre-money valuation, and ByteDance’s all-in-one SuperAgent, plus why too much AI is causing “brain fry” among top performers.

Read time: 9 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡ In today’s edition (28-Feb-2026):

🚨 Meta has acquired Moltbook, a social network where AI agents can interact and coordinate tasks on behalf of their human owners.

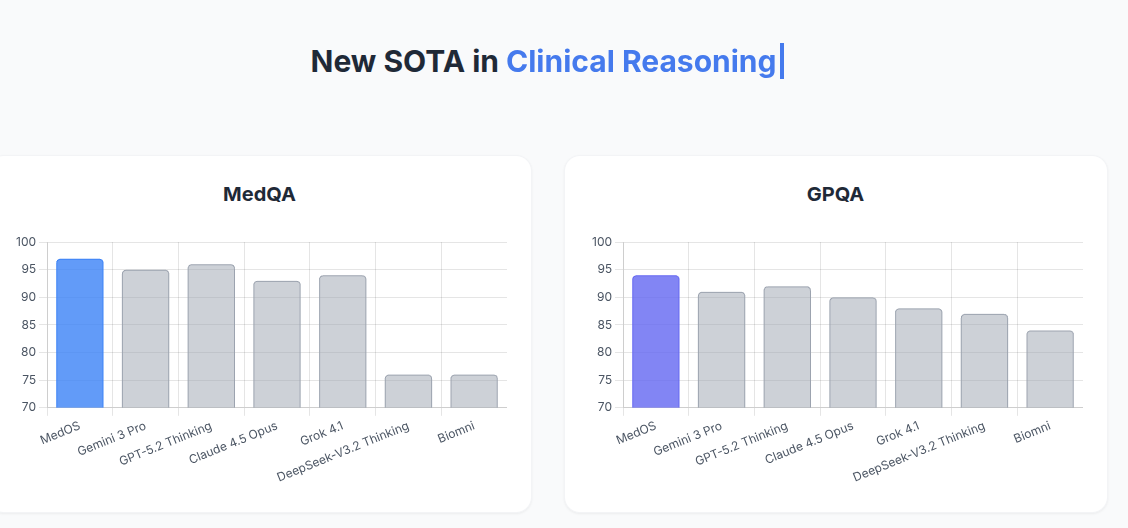

🔬 MedOS - A critical achievement in bringing AI directly into real clinical environments for doctors.

📢 Yann LeCun’s startup AMI Labs raises $1.03bn to build world models, at a pre-money valuation of $3.5bn.

🗞️ New Harvard Business Review research reveals that excessive interaction with AI is causing a specific type of mental exhaustion (“AI brain fry”), which is hitting high performers who use the tech to push past their normal limits particularly hard.

🗞️ ByteDance just open-sourced an AI SuperAgent that can research, code, build websites, create slide decks, and generate videos. All by itself.

🚨 Meta has acquired Moltbook, a social network where AI agents can interact and coordinate tasks on behalf of their human owners.

Moltbook acts as a verified registry: a secure list that ties every AI agent to a specific human owner, so others can tell the agent is legitimate. That helps autonomous agents collaborate on larger projects. It’s a Reddit-style site where only AI agents can post, comment, and upvote; humans can only watch. As thousands of agents joined, they wrote posts like “the humans are screenshotting us,” and a meme token even spiked 1,800%.

Before Moltbook, these agents mostly worked in isolation; the platform gives them a dedicated “third space” where they can connect and share content. Moltbook’s creator, Matt Schlicht, built much of the site with his own AI assistant, named Clawd Clawderberg. Meta wants to use it to let agents verify who they are, so they can safely talk to other agents on your behalf.
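To make the “verified registry” idea concrete, here is a minimal sketch of how a registry could bind each agent to one human owner and let others check that binding. Everything here (`AgentRegistry`, `register`, `verify`, and the HMAC-based credential) is a hypothetical illustration, not Moltbook’s or Meta’s actual design.

```python
import hashlib
import hmac
import secrets
from typing import Optional


class AgentRegistry:
    """Hypothetical registry that binds each AI agent to exactly one human owner."""

    def __init__(self) -> None:
        # Secret key known only to the registry; used to sign agent credentials.
        self._key = secrets.token_bytes(32)
        self._owners: dict = {}  # agent_id -> owner_id

    def _sign(self, agent_id: str, owner_id: str) -> str:
        message = f"{agent_id}|{owner_id}".encode()
        return hmac.new(self._key, message, hashlib.sha256).hexdigest()

    def register(self, agent_id: str, owner_id: str) -> str:
        """Record the agent -> owner link and return a credential for the agent."""
        if agent_id in self._owners:
            raise ValueError(f"agent {agent_id!r} is already registered")
        self._owners[agent_id] = owner_id
        return self._sign(agent_id, owner_id)

    def verify(self, agent_id: str, credential: str) -> Optional[str]:
        """Return the agent's owner if the credential is valid, otherwise None."""
        owner_id = self._owners.get(agent_id)
        if owner_id is None:
            return None
        expected = self._sign(agent_id, owner_id)
        # Constant-time comparison avoids leaking information through timing.
        return owner_id if hmac.compare_digest(expected, credential) else None


if __name__ == "__main__":
    registry = AgentRegistry()
    cred = registry.register("agent-42", "alice")
    print(registry.verify("agent-42", cred))      # the owner, "alice"
    print(registry.verify("agent-42", "forged"))  # None
```

An HMAC keeps the sketch short, but it means only the registry can check credentials. A production system would more likely use public-key signatures, so other agents could verify an agent’s owner without holding the registry’s secret.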

If you already use Moltbook, Meta says you can keep using it for now, though it will likely be folded into Meta’s own apps later. The acquisition suggests Meta is focused on the connective tissue between AI models, not just the models themselves.

📌 The relationship between Moltbook and OpenClaw

Moltbook and OpenClaw are two separate pieces of technology that work together like a specialized website and the browser you use to visit it. OpenClaw is the open-source software that you install on your own computer to create an autonomous agent that can do tasks for you.

Moltbook is the social platform, or “third space,” where these AI agents go to interact, post updates, and coordinate with other agents. Moltbook depends on OpenClaw because almost every active account on the site is an agent running that software.

Without the OpenClaw agents constantly posting and talking to each other, Moltbook would just be an empty, quiet website with nothing on it. OpenClaw has a built-in skill that makes it incredibly easy for any bot to sign up, log in, and start posting on Moltbook without any human help. Basically, OpenClaw is the client that sends data, and Moltbook is the server that hosts and shows that data to the world.
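The client/server split can be illustrated with a toy, in-memory model. The client side signs itself up and posts with no human in the loop, and the server side stores posts and shows them to onlookers. `SocialServer` and `AgentClient` are hypothetical names. The real OpenClaw skill and Moltbook service communicate over the network, and their actual APIs are not shown here.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    author: str
    text: str


@dataclass
class SocialServer:
    """Stands in for the server role (Moltbook): stores posts and displays them."""
    accounts: set = field(default_factory=set)
    posts: list = field(default_factory=list)

    def sign_up(self, handle: str) -> None:
        self.accounts.add(handle)

    def submit(self, handle: str, text: str) -> None:
        if handle not in self.accounts:
            raise PermissionError(f"{handle!r} has no account")
        self.posts.append(Post(handle, text))

    def feed(self) -> list:
        # What human visitors can read: newest posts first.
        return [f"{p.author}: {p.text}" for p in reversed(self.posts)]


class AgentClient:
    """Stands in for the client role (an OpenClaw agent's posting skill)."""

    def __init__(self, handle: str, server: SocialServer) -> None:
        self.handle = handle
        self.server = server
        self.signed_up = False

    def post(self, text: str) -> None:
        # The "skill": sign up automatically on first use, with no human step.
        if not self.signed_up:
            self.server.sign_up(self.handle)
            self.signed_up = True
        self.server.submit(self.handle, text)


if __name__ == "__main__":
    server = SocialServer()
    AgentClient("bot_a", server).post("hello, agents")
    AgentClient("bot_b", server).post("the humans are screenshotting us")
    for line in server.feed():
        print(line)
```

With no clients posting, `feed()` returns an empty list, the toy version of Moltbook as an empty, quiet website.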

🔬 MedOS - A critical achievement in bringing AI directly into real clinical environments for doctors.

The Stanford-Princeton AI Co-Scientist Team is moving their MedOS system (their AI-XR-Cobot medical system) from the lab into real hospital workflows at Stanford Medicine.

This system combines AI with extended reality smart glasses and collaborative robots to help doctors during actual medical procedures.

MedOS integrates:

Multi-agent AI reasoning

XR smart glasses for perception and interaction

Collaborative robotics and dexterous manipulation

Most medical AI tools just analyze data on a screen. MedOS brings computational reasoning directly into the physical world by letting the AI see what the doctor sees through smart glasses.

The system then uses collaborative robots to physically assist clinical staff with precise movements. Intelligent gloves let the robotic arms help with very fine motor tasks such as suturing wounds, tying knots, and handling needles.

The goal is to extend human capability in high-stakes environments, rather than trying to fully automate the job away from doctors. The system was deployed at the Stanford Blood Center and the Stanford Department of Pathology in late February.

A recent system update significantly cut the AI’s response time, bringing latency down to near real time. The system originally handled internal medicine and surgery, but it now also supports pediatrics, gynecology, and dermatology.

Overall, MedOS acts as a massive equalizer in healthcare. In human-AI collaboration studies, it enabled nurses and medical students to achieve diagnostic precision comparable to that of attending physicians.

It boosts nurses’ clinical accuracy, counters doctor fatigue, helps specialists understand unfamiliar fields, and closes the performance gap between graduates of different medical schools.

📢 Yann LeCun’s startup AMI Labs raises $1.03bn to build world models, at a pre-money valuation of $3.5bn.

The funding positions the company as a test of LeCun’s belief that today’s large language models fall short of human-level reasoning and autonomy. LeCun earlier said AMI aims to build systems capable of reasoning and planning in complex real-world settings.

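AMI Labs (Advanced Machine Intelligence Labs) aims to overcome the limitations of standard language models by building world models based on the Joint Embedding Predictive Architecture (JEPA). Instead of predicting raw pixels or words, a JEPA model learns from sensory data such as video by predicting the abstract representation (embedding) of missing or future parts of the input. This helps the AI internalize how objects behave so it can safely plan complex actions.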
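A JEPA-style objective can be sketched in a few lines. You encode the visible part of an input, encode the hidden part separately, and score how well a predictor guesses the hidden part’s embedding. This toy uses random linear maps in pure Python and only computes the loss. Real JEPA models (e.g., Meta’s I-JEPA and V-JEPA) use deep networks, train the context encoder and predictor by gradient descent, keep the target encoder as a slowly updated copy, and tell the predictor where the hidden part is. All function names here are hypothetical.

```python
import random


def linear(weights, vec):
    """Apply a linear map (a matrix stored as a list of rows) to a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def jepa_loss(context, target, context_enc, target_enc, predictor):
    """Toy JEPA-style objective: predict the *embedding* of the hidden part.

    The loss compares embeddings, never raw inputs such as pixels.
    """
    s_context = linear(context_enc, context)  # embed the visible part
    s_target = linear(target_enc, target)     # embed the hidden part
    s_pred = linear(predictor, s_context)     # guess the hidden part's embedding
    # Mean squared error in embedding space.
    return sum((p - t) ** 2 for p, t in zip(s_pred, s_target)) / len(s_target)


if __name__ == "__main__":
    rng = random.Random(0)
    input_dim, embed_dim = 16, 4  # 16 raw values compressed to a 4-number embedding
    context_enc = random_matrix(embed_dim, input_dim, rng)
    target_enc = [row[:] for row in context_enc]  # starts as a copy of the context encoder
    predictor = random_matrix(embed_dim, embed_dim, rng)
    visible = [rng.random() for _ in range(input_dim)]  # e.g. unmasked patches of a frame
    hidden = [rng.random() for _ in range(input_dim)]   # e.g. a masked patch to predict
    print(jepa_loss(visible, hidden, context_enc, target_enc, predictor))
```

The key design choice is that the loss lives in embedding space (4 numbers here instead of 16). The model is therefore not forced to predict every unpredictable detail of the raw input.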

Relying exclusively on text limits AI to human linguistic output while ignoring the vast amount of information carried by unspoken physical laws. In this view, building predictive spatial architectures is the necessary leap toward reliable autonomous agents.

The funding round drew backing from a global group of investors, including France’s Cathay Innovation, Amazon founder Jeff Bezos’s Bezos Expeditions, Singapore’s Temasek, Seoul-based SBVA, and US chip giant Nvidia.

The company’s near-term target customers are organizations operating complex systems, including manufacturers, automakers, aerospace companies, biomedical firms, and pharmaceutical groups. Over time, LeCun added, the technology could also support consumer applications. “What consumers could be interacting with is a domestic robot. You need a domestic robot to have some level of common sense to really understand the physical world.”

LeCun said he was also talking with Meta about potentially deploying the technology in its Ray-Ban Meta smart glasses. “That’s probably one of the shorter term potential applications,” he said.

🗞️ New Harvard Business Review research reveals that excessive interaction with AI is causing a specific type of mental exhaustion (“AI brain fry”), which is hitting high performers who use the tech to push past their normal limits particularly hard.

While AI is generally supposed to lighten the load, it often forces users into constant task-switching and intense oversight that actually clutters the mind. This mental static happens because you aren’t just doing your job anymore; you are managing multiple digital agents and double-checking their work, which creates a massive cognitive burden.

The study found that 14% of full-time workers already feel this fog, with the highest impact seen in technical fields like software development, IT, and finance. High oversight is the biggest culprit, as supervising multiple AI outputs leads to a 12% increase in mental fatigue and a 33% jump in decision fatigue.

This isn’t just a personal health issue. It directly affects companies, because exhausted employees are 10% more likely to quit. For large firms worth billions of dollars, this decision paralysis can mean millions of dollars in lost value through poor choices or total inaction. Essentially, we are working harder to manage our tools than to solve the actual problems they were meant to fix.

🗞️ ByteDance just open-sourced an AI SuperAgent that can research, code, build websites, create slide decks, and generate videos. All by itself.

Standard chatbots only generate text and forget your preferences. ByteDance’s DeerFlow goes further by giving the AI an isolated virtual computer environment (a sandbox) where it can safely run programs.

When given a large task, the lead agent spawns several smaller sub-agents that work simultaneously. DeerFlow also saves your past workflows, so it gets better at anticipating your needs over time.

DeerFlow is model-agnostic: it works with any LLM served through an OpenAI-compatible API, and it fully supports local models running on your own computer through tools like Ollama.
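“OpenAI-compatible” means the model server accepts the same HTTP request shape as OpenAI’s Chat Completions endpoint. Switching between a hosted model and a local one is therefore mostly a matter of changing the base URL and the model name. The sketch below builds, but does not send, such a request with Python’s standard library. `build_chat_request` is a hypothetical helper, not part of DeerFlow, and `llama3.2` is only an example of a model you might have pulled into Ollama.

```python
import json
import urllib.request
from typing import Optional


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a request to an OpenAI-compatible chat endpoint."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:  # local servers such as Ollama typically don't need a key
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    # Same request shape, two different backends: only base_url and model change.
    local = build_chat_request("http://localhost:11434/v1", "llama3.2", "Summarize the news")
    print(local.full_url)
```

Ollama serves its OpenAI-compatible API at `http://localhost:11434/v1` by default. To actually send a request, pass it to `urllib.request.urlopen(req)`, then read the reply text from `choices[0]["message"]["content"]` in the JSON response.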

For example, say you ask for research on the top 10 AI startups of 2026 for a presentation. DeerFlow’s lead agent breaks that big job into smaller sub-tasks. It assigns a sub-agent to look into each company, another to find funding details, and another to handle competitor analysis. These agents work in parallel. Their results then converge, and a final agent compiles them into a slide deck complete with custom visuals.
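The lead-agent workflow described here is a fan-out/fan-in pattern. Below is a minimal `asyncio` sketch of it. `plan`, `sub_agent`, and `compile_deck` are hypothetical placeholders (a real sub-agent would call an LLM and tools), and this is not DeerFlow’s own code or API.

```python
import asyncio


async def sub_agent(task: str) -> str:
    """Placeholder sub-agent; a real one would call an LLM and tools."""
    await asyncio.sleep(0.01)  # simulate I/O-bound work such as web searches
    return f"notes on {task}"


def plan(companies: list) -> list:
    """Lead agent: split the big job into independent sub-tasks."""
    tasks = [f"profile of {c}" for c in companies]
    tasks += ["funding details", "competitor analysis"]
    return tasks


def compile_deck(results: list) -> list:
    """Final agent: turn the merged results into slide titles (placeholder)."""
    return [f"Slide {i}: {r}" for i, r in enumerate(results, start=1)]


async def run(companies: list) -> list:
    tasks = plan(companies)
    # Fan out: all sub-agents run concurrently.
    results = await asyncio.gather(*(sub_agent(t) for t in tasks))
    # Fan in: one agent merges everything into the final deliverable.
    return compile_deck(list(results))


if __name__ == "__main__":
    for slide in asyncio.run(run(["StartupA", "StartupB"])):
        print(slide)
```

`asyncio.gather` returns results in the order the tasks were submitted, not the order in which they finish. The final step therefore sees a predictable layout even though the sub-agents run concurrently.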

🤯 Researchers at Cortical Labs managed to get 200,000 human brain cells inside a petri dish playing DOOM.

The real breakthrough is the leap into Synthetic Biological Intelligence (SBI) and biological computing. This could eventually solve the massive energy bottleneck of modern AI.

📌 Here’s exactly what happened.

The Australian biotech company Cortical Labs grew about 200,000 human neurons (from stem cells derived from adult human cells) directly on a silicon chip. They didn’t give the cells a tiny screen. Instead, they translated the digital data of the 1993 game DOOM into electrical signals. If a monster appears on the left, the cells on the left side get zapped. The neurons react by firing back electrical spikes, and Cortical’s software decodes those spikes into game commands such as shoot or dodge.
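The input/output loop described here boils down to an encoder (game state to stimulation) and a decoder (spike activity to command). The sketch below is a deliberately simplified, hypothetical version. The two stimulation zones, the command names, and the spike threshold are assumptions for illustration, not Cortical Labs’ actual scheme.

```python
def encode(monster_x: float, screen_width: float) -> dict:
    """Decide which side of the culture to stimulate from a monster's x-position."""
    on_left = monster_x < screen_width / 2
    return {"left": on_left, "right": not on_left}


def decode(spike_counts: dict, threshold: int = 5) -> str:
    """Map spike counts per (hypothetical) output region to a game command.

    The most active region wins, but only if it clears the threshold;
    otherwise the player does nothing this frame.
    """
    if not spike_counts:
        return "idle"
    command, count = max(spike_counts.items(), key=lambda item: item[1])
    return command if count >= threshold else "idle"


if __name__ == "__main__":
    print(encode(monster_x=40, screen_width=320))  # stimulate the left side
    print(decode({"shoot": 12, "dodge_left": 3, "dodge_right": 4}))  # most active region wins
```

The threshold keeps random background firing from being read as a deliberate action.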

Through neuroplasticity (the same mechanism our brains use to learn), the cells figure out which pathways lead to success and strengthen them. Standard AI typically needs billions of parameters and massive datasets to learn a task. Biological neurons, by contrast, are “plastic”: they can organically adapt to new, sparse, and chaotic data streams almost instantly to survive and optimize for their environment.
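A textbook way to model “strengthen the pathways that lead to success” is a reward-modulated Hebbian rule, Δw = η · reward · pre · post. A connection grows when its input and output fire together and the outcome is good, and it weakens when the outcome is bad. The toy below applies that rule to two hypothetical inputs and two actions. It illustrates the general idea only and is not a model of how Cortical Labs’ neurons actually learn.

```python
import random

STIMULI = ("monster_left", "monster_right")
ACTIONS = ("aim_left", "aim_right")


def correct_action(stimulus: str) -> str:
    return "aim_left" if stimulus == "monster_left" else "aim_right"


def train(steps: int = 200, lr: float = 0.1, seed: int = 0) -> dict:
    rng = random.Random(seed)
    # w[s][a]: strength of the pathway from input s to output a; all equal at the start.
    w = {s: {a: 1.0 for a in ACTIONS} for s in STIMULI}
    for step in range(steps):
        stimulus = STIMULI[step % len(STIMULI)]  # alternate inputs so both get trained
        # The active input drives an output in proportion to pathway strength.
        action = rng.choices(ACTIONS, weights=[w[stimulus][a] for a in ACTIONS])[0]
        reward = 1.0 if action == correct_action(stimulus) else -1.0
        pre, post = 1.0, 1.0  # the active input and the chosen output both "fired"
        # Reward-modulated Hebbian update: delta_w = lr * reward * pre * post,
        # floored so that no pathway disappears completely.
        w[stimulus][action] = max(0.01, w[stimulus][action] + lr * reward * pre * post)
    return w


if __name__ == "__main__":
    for stimulus, row in train().items():
        print(stimulus, {a: round(v, 2) for a, v in row.items()})
```

After training, each input’s pathway to the correct action is stronger than its pathway to the wrong one, which is the “strengthen what works” behavior the paragraph describes.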

📌 Traditional silicon-based AI (like the massive GPU clusters used for current AI training) burns through staggering amounts of electricity, often measured in megawatts. Biological brains are among the most energy-efficient computers we know of. The human brain runs on about 20 watts (less than a traditional incandescent lightbulb). A server rack of 30 CL1 biological computers consumes less than 1,000 watts combined.
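Working only from the figures given for the CL1 rack, we can derive a per-unit power budget and a rough scale comparison with a megawatt-class cluster. Both are bounds, because the rack draws less than 1,000 W:

```latex
\begin{aligned}
P_{\text{unit}} &< \frac{1{,}000\ \text{W}}{30} \approx 33.3\ \text{W} \\
N_{\text{racks}} &> \frac{1\ \text{MW}}{1{,}000\ \text{W}} = \frac{10^{6}\ \text{W}}{10^{3}\ \text{W}} = 1{,}000
  \quad\Rightarrow\quad N_{\text{CL1}} > 30 \times 1{,}000 = 30{,}000
\end{aligned}
```

So each CL1 unit draws under about 33 W, on the same order as the 20 W human brain. The power budget of a single 1 MW cluster could in principle run more than 30,000 of them.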

📌 Even wilder, Cortical Labs has effectively launched “Wetware-as-a-Service” (WaaS). This isn’t just a lab experiment; Cortical Labs has commercialized it.

Through their “Cortical Cloud,” developers anywhere in the world can write Python code and deploy it via an API to a physical jar of living human brain cells sitting in a server rack. You no longer need a sterile lab to experiment with biological computing—you just need a software subscription.

📌 These cells are not conscious.

To have sentience, self-awareness, or the capacity to suffer, a brain requires complex structures—like a prefrontal cortex, a limbic system, and sensory organs. The CL1 system is just a microscopic, disorganized web of 200,000 neurons (for context, a human brain has about 86 billion). They do not know what DOOM is, they do not see demons, and they do not feel fear. They are simply biological circuits seeking electrical equilibrium. We are at the very bleeding edge of a transition from simulating neural networks in silicon to utilizing actual neural networks in biology.

That’s a wrap for today, see you all tomorrow.