🧮 OpenAI started rolling out sponsored ads inside ChatGPT for users in the U.S. on the Free and Go tiers.

Sponsored ads hit ChatGPT, AGIBOTs host a gala, law firms cut jobs over AI, Stanford flags LLM reasoning gaps, and Alibaba drops a multimodal image model.

Read time: 11 min

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡ In today’s Edition (11-Feb-2026):

🧮 OpenAI started rolling out sponsored ads inside ChatGPT for a small group of logged-in adult users in the U.S. on the Free and Go tiers.

🤖 Today’s Sponsor: The world’s first all-robot gala, AGIBOT NIGHT, made a stunning debut.

🏆 Baker McKenzie, a very large international commercial law firm, is laying off a little more than 700 people, or 10% of its total staff, driven in part by the growing use of AI.

📡 A viral new study from Harvard Business Review on AI’s effect on office work

🛠️ New Stanford and California Institute of Technology (Caltech) paper proposes a taxonomy for understanding the mismatch between great benchmark scores and real reasoning weaknesses in LLMs.

👨🔧 Alibaba launched Qwen-Image-2.0, a single model that handles both text-to-image generation and image editing.

🧮 OpenAI started rolling out sponsored ads inside ChatGPT for a small group of logged-in adult users in the U.S. on the Free and Go tiers.

Under the hood, the system picks an ad by matching an advertiser’s submission to signals like the topic of the current chat, past chats, and past ad interactions, then shows the most relevant eligible option first.
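The filter-then-rank step described above can be sketched in a few lines. This is a minimal sketch under loud assumptions: the `Ad` and `Signals` fields, the keyword-overlap scoring, the weights, and the `eligible` flag are all hypothetical, since OpenAI has not published how its ranking actually works.

```python
from dataclasses import dataclass, field


@dataclass
class Ad:
    # Hypothetical advertiser submission: an id, topic keywords, and an eligibility flag
    ad_id: str
    keywords: set[str]
    eligible: bool = True


@dataclass
class Signals:
    # Hypothetical per-user signals mirroring the ones named in the text
    current_chat_topics: set[str]
    past_chat_topics: set[str] = field(default_factory=set)
    clicked_ad_ids: set[str] = field(default_factory=set)


# Assumed weights: the current chat counts most, past chats less, past clicks a small boost
W_CURRENT, W_PAST, W_CLICKED = 1.0, 0.5, 0.25


def relevance(ad: Ad, s: Signals) -> float:
    score = W_CURRENT * len(ad.keywords & s.current_chat_topics)
    score += W_PAST * len(ad.keywords & s.past_chat_topics)
    if ad.ad_id in s.clicked_ad_ids:
        score += W_CLICKED
    return score


def rank_ads(ads: list[Ad], s: Signals) -> list[Ad]:
    # Drop ineligible ads, then sort so the most relevant eligible ad comes first
    eligible = [ad for ad in ads if ad.eligible]
    return sorted(eligible, key=lambda ad: relevance(ad, s), reverse=True)


ads = [
    Ad("hiking-boots", {"hiking", "outdoors"}),
    Ad("meal-kit", {"recipes", "cooking"}),
    Ad("tax-app", {"taxes"}, eligible=False),
]
signals = Signals(current_chat_topics={"recipes"}, past_chat_topics={"hiking"})
print([ad.ad_id for ad in rank_ads(ads, signals)])  # ['meal-kit', 'hiking-boots']
```

Here the current chat outweighs older history, so the meal-kit ad ranks first and the ineligible ad never competes.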

Advertisers do not get access to chats, chat history, memories, or personal details; they see only aggregate reporting such as views and clicks. Free users can avoid ads by upgrading or by turning ads off in exchange for fewer daily free messages, and there are controls to dismiss ads, manage personalization, and delete ad data.
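"Aggregate reporting" means per-user events get collapsed into per-ad totals before anything reaches the advertiser. A minimal sketch, assuming a hypothetical `(user_id, ad_id, event_type)` event log; it is not OpenAI's reporting pipeline.

```python
from collections import Counter

# Hypothetical raw event log: (user_id, ad_id, event_type). Only the platform holds this.
events = [
    ("u1", "meal-kit", "view"),
    ("u2", "meal-kit", "view"),
    ("u2", "meal-kit", "click"),
    ("u3", "hiking-boots", "view"),
]


def aggregate_report(events):
    # Count (ad_id, event_type) pairs; user_id is read but never copied into the report
    counts = Counter((ad_id, kind) for _user, ad_id, kind in events)
    report = {}
    for (ad_id, kind), n in counts.items():
        # "view" -> "views", "click" -> "clicks"
        report.setdefault(ad_id, {"views": 0, "clicks": 0})[kind + "s"] += n
    return report


print(aggregate_report(events))
```

The advertiser-facing output holds only counts per ad, so no user identifier survives the aggregation step.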

🤖 Today’s Sponsor: The world’s first all-robot gala, AGIBOT NIGHT, made a stunning debut.

Hundreds of AGIBOT robots delivered a full-stack showcase of “perception–decision–execution,” spanning dance, martial arts, and magic.

Technology, now with warmth and a soul.

🏆 Baker McKenzie, a very large international commercial law firm, is laying off a little more than 700 people, or 10% of its total staff, driven in part by the growing use of AI.

For years, AI job-loss talk in big law mostly centered on fee-earning lawyers rather than support teams. Here, the reported scope spans core staff functions like IT, knowledge, admin, DEI, learning, secretarial, marketing, and design.

📡 A viral new study from Harvard Business Review on AI’s effect on office work

The study describes AI intensifying work rather than reducing it, through task expansion, blurred boundaries, and more multitasking. Task expansion happened because AI filled gaps in people’s knowledge, so they took on work that used to belong to other roles or would have been outsourced or deferred. That shift created extra coordination and review work for specialists, including fixing AI-assisted drafts and coaching colleagues whose work was only partly correct or complete.

Boundaries blurred because starting a task became as easy as writing a prompt, so work slipped into lunch, meetings, and the minutes right before stepping away.

Multitasking rose because people ran multiple AI threads at once and kept checking outputs, which increased attention switching and mental load.

Over time, this faster rhythm became visible and normal, which raised expectations for speed even without explicit pressure from managers.

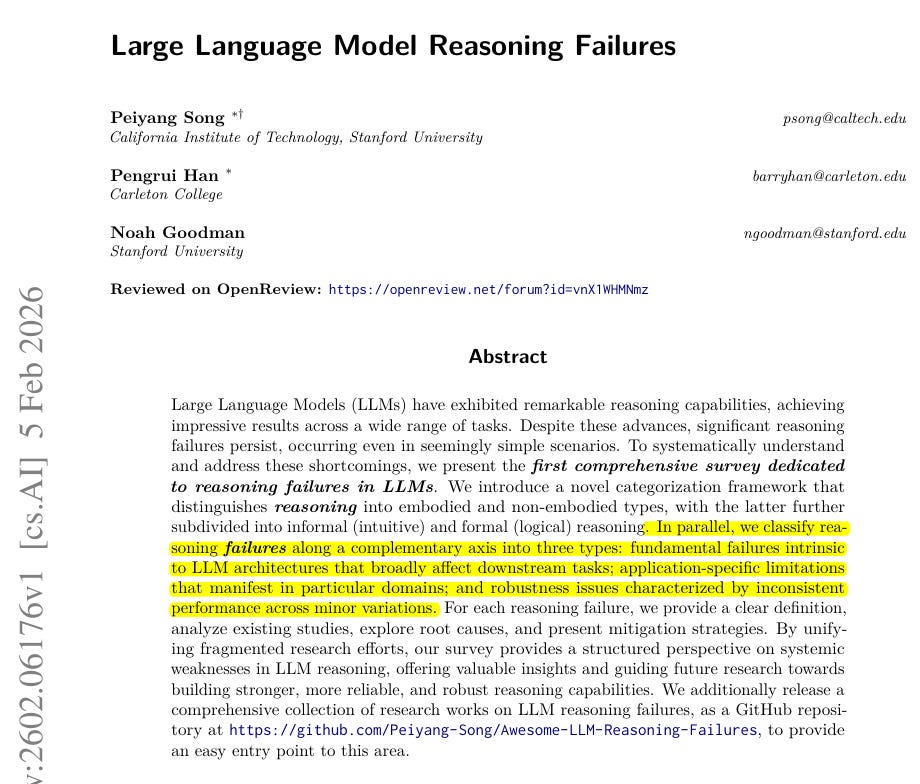

🛠️ New Stanford and California Institute of Technology (Caltech) paper proposes a taxonomy for understanding the mismatch between great benchmark scores and real reasoning weaknesses in LLMs.

It introduces a 2-axis taxonomy. The first axis splits reasoning into embodied and non-embodied, with non-embodied further split into informal and formal reasoning.

The second axis classifies failures as fundamental, application-specific, or robustness issues, so failures can be compared across tasks instead of treated as anecdotes.
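One way to make the 2-axis idea concrete is to tag every observed failure with one value per axis and count the cells. A minimal sketch: the failure types follow the examples in this write-up, but the reasoning-type placements are this sketch's own guesses, not the paper's assignments.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Reasoning(Enum):
    # Axis 1: embodied vs non-embodied; non-embodied splits into informal and formal
    EMBODIED = "embodied"
    INFORMAL = "non-embodied, informal"
    FORMAL = "non-embodied, formal"


class Failure(Enum):
    # Axis 2: what kind of failure it is
    FUNDAMENTAL = "fundamental"
    APPLICATION_SPECIFIC = "application-specific"
    ROBUSTNESS = "robustness"


@dataclass(frozen=True)
class Observation:
    note: str
    reasoning: Reasoning
    failure: Failure


# Reasoning-type placements below are illustrative guesses
observations = [
    Observation("reversal curse on parent/child facts", Reasoning.FORMAL, Failure.FUNDAMENTAL),
    Observation("false-belief story answered confidently wrong", Reasoning.INFORMAL, Failure.APPLICATION_SPECIFIC),
    Observation("irrelevant detail breaks a math word problem", Reasoning.FORMAL, Failure.ROBUSTNESS),
    Observation("docstring edit breaks code generation", Reasoning.FORMAL, Failure.ROBUSTNESS),
]

# Count observations per (reasoning, failure) cell so different tasks share one grid
grid = Counter((o.reasoning, o.failure) for o in observations)
for (reasoning, failure), n in grid.items():
    print(f"{reasoning.value} x {failure.value}: {n}")
```

Once failures live in a shared grid, two unrelated benchmarks can be compared cell by cell instead of anecdote by anecdote.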

Static benchmarks can hide brittleness because semantically equivalent tweaks, like reordering multiple-choice options or renaming code variables, can change the answer.
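The option-reordering check can be sketched by asking the same question under every ordering and mapping each chosen position back to its option text. This assumes a hypothetical `toy_model` with a pure position bias; a real harness would call an LLM instead.

```python
import itertools


def toy_model(question: str, options: list[str]) -> int:
    # Hypothetical stand-in for an LLM with a position bias:
    # it always picks the first option, whatever the content
    return 0


def shuffle_consistency(model, question, options, correct):
    """Ask the same question under every ordering of the options.

    Returns (accuracy, consistency): accuracy is the fraction of orderings
    answered correctly; consistency is the fraction of orderings whose chosen
    option text matches the most frequently chosen option text.
    """
    chosen = []
    for perm in itertools.permutations(options):
        idx = model(question, list(perm))
        chosen.append(perm[idx])  # map the chosen position back to the option text
    accuracy = sum(c == correct for c in chosen) / len(chosen)
    most_common = max(set(chosen), key=chosen.count)
    consistency = chosen.count(most_common) / len(chosen)
    return accuracy, consistency


acc, cons = shuffle_consistency(toy_model, "2 + 2 = ?", ["4", "3", "5"], correct="4")
print(f"accuracy={acc:.2f} consistency={cons:.2f}")
```

Scored only on the original ordering, the toy model looks perfect because "4" happens to be listed first; across all six orderings it is right only a third of the time. That gap is exactly what a single static item hides.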

Fundamental failures are tied to how transformers do next-token prediction, with limited working memory and directional generalization gaps like the reversal curse.

In the reversal curse example, GPT-4 correctly answers “Who is Tom Cruise’s mother?” (Mary Lee Pfeiffer) but fails the reversed question, “Who is Mary Lee Pfeiffer’s son?”
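A reversal-curse probe scores the same fact in both directions. A minimal sketch, assuming hypothetical question templates and a hypothetical `toy_model` that only learned the forward direction; only the Tom Cruise pair comes from the example above.

```python
# (child, parent) facts; the pair below is the example from the text
facts = [("Tom Cruise", "Mary Lee Pfeiffer")]


def reversal_pairs(child: str, parent: str) -> list[tuple[str, str]]:
    # Hypothetical templates: one forward and one reversed question, each with its expected answer
    return [
        (f"Who is {child}'s mother?", parent),
        (f"Who is {parent}'s son?", child),
    ]


def toy_model(question: str) -> str:
    # Hypothetical model that only stored the fact in the forward direction
    known = {"Who is Tom Cruise's mother?": "Mary Lee Pfeiffer"}
    return known.get(question, "I don't know")


def directional_accuracy(model, facts):
    forward = backward = 0
    for child, parent in facts:
        (fq, fa), (bq, ba) = reversal_pairs(child, parent)
        forward += model(fq) == fa
        backward += model(bq) == ba
    n = len(facts)
    return forward / n, backward / n


print(directional_accuracy(toy_model, facts))  # (1.0, 0.0)
```

Reporting the two directions separately, instead of one pooled accuracy, is what exposes the asymmetry.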

Application-specific limits show up in domains like social reasoning and planning, where GPT-3.5 answers a false-belief prompt with 95% confidence even when the story makes the belief obviously wrong.

Robustness limits show up when irrelevant details break math word problems or when harmless docstring edits break code generation, even though the underlying task is unchanged.
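The irrelevant-detail perturbation is easy to automate: insert a distractor sentence and check whether the answer changes. A minimal sketch, assuming a hypothetical `toy_model` that naively adds every number it sees; the problem and distractor are made up for illustration.

```python
import re


def add_distractor(problem: str, distractor: str) -> str:
    # Split into sentences and insert the irrelevant sentence just before the final question
    *context, question = re.split(r"(?<=\.)\s+", problem.strip())
    return " ".join(context + [distractor, question])


def toy_model(problem: str) -> int:
    # Hypothetical brittle solver: sums every number in the text, relevant or not
    return sum(int(n) for n in re.findall(r"\d+", problem))


def robust_to_distractor(model, problem: str, distractor: str) -> bool:
    # The task is unchanged, so a robust model should give the same answer
    return model(problem) == model(add_distractor(problem, distractor))


problem = "Ana has 3 apples. She buys 4 more. How many apples does she have?"
distractor = "Her brother is 12 years old."
print(toy_model(problem), toy_model(add_distractor(problem, distractor)))  # 7 19
print(robust_to_distractor(toy_model, problem, distractor))  # False
```

The solver is right on the clean problem and wrong on the perturbed one, which a final-answer-only benchmark would never reveal.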

The survey also flags internally inconsistent rationales, like a physics explanation that says there is no net force while still claiming non-zero acceleration.
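The physics rationale contradicts itself because of Newton's second law. For a body of nonzero mass in an inertial frame, zero net force forces zero acceleration:

```latex
\vec{F}_{\text{net}} = m\,\vec{a}, \quad m > 0
\;\Longrightarrow\;
\vec{a} = \frac{\vec{F}_{\text{net}}}{m}
\qquad\text{so}\qquad
\vec{F}_{\text{net}} = \vec{0} \;\Longrightarrow\; \vec{a} = \vec{0}.
```

A rationale that asserts both "no net force" and "non-zero acceleration" therefore fails a check that uses only its own stated premises.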

Common fixes in the literature include chain-of-thought prompting, retrieval augmentation, training with perturbations, tool integration like simulators, and system-level verification agents.
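Tool integration and verification share one simple pattern: let the model propose, then accept only answers an external checker confirms. A minimal generate-then-verify sketch, assuming hypothetical model samples and using exact integer arithmetic as the "tool"; it is one basic instance of the idea, not a specific method from the survey.

```python
from typing import Callable, Iterable, Optional


def generate_then_verify(candidates: Iterable[str], verify: Callable[[str], bool]) -> Optional[str]:
    # Return the first candidate the verifier accepts, or None if every candidate is rejected
    for c in candidates:
        if verify(c):
            return c
    return None


# Hypothetical model samples for "What is 17 * 24?" (one wrong, one right)
samples = ["398", "408"]


def calculator_check(answer: str) -> bool:
    # Tool-style verifier: recompute exactly instead of trusting the model's arithmetic
    return answer.strip().isdigit() and int(answer) == 17 * 24


print(generate_then_verify(samples, calculator_check))  # 408
```

Swapping `calculator_check` for a simulator or a unit-test runner keeps the same loop shape.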

The taxonomy is useful for evaluators because it encourages measuring stability under controlled perturbations, not just final-answer accuracy.

👨🔧 Alibaba launched Qwen-Image-2.0, a single model that handles both text-to-image generation and image editing.

Alibaba’s Qwen team released Qwen-Image-2.0, combining text-to-image and image editing in 1 model.

It targets dense layouts with long, readable text in 2048x2048 images for PowerPoint (PPT) slides and posters. In AI Arena blind votes, it reports 1029 Elo for text-to-image (#3) and 1034 Elo for editing (#2).

Elo is a relative rating updated from head-to-head human preferences.

Earlier Qwen releases kept generation and editing as separate models, which forced pipeline switches and led to inconsistent text behavior between them.
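For readers wondering how Elo ratings like the AI Arena scores move after each blind vote, here is the classic Elo update. This is a hedged sketch: the source does not say whether AI Arena applies online Elo updates or fits ratings in batch (for example with a Bradley-Terry model), and K = 32 is an assumed step size.

```python
def expected_score(r_a: float, r_b: float) -> float:
    # Probability that A beats B under the standard Elo logistic curve (base 10, scale 400)
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def elo_update(r_a: float, r_b: float, a_won: bool, k: float = 32.0) -> tuple[float, float]:
    # Move both ratings toward the observed result; k (assumed 32) sets the step size
    e_a = expected_score(r_a, r_b)
    s_a = 1.0 if a_won else 0.0
    delta = k * (s_a - e_a)
    return r_a + delta, r_b - delta


# Two hypothetical models start level; one blind vote prefers A
print(elo_update(1000, 1000, a_won=True))  # (1016.0, 984.0)
```

Because points only move between the two models in each comparison, a rating means something only relative to the other entries on the same leaderboard.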

Qwen-Image-2.0 uses a single checkpoint for both tasks, accepts prompts of up to 1,000 tokens (the text chunks the model reads), and generates 2048x2048 images without tiling. The release describes a Qwen3 vision-language (Qwen3-VL) encoder feeding a diffusion decoder that denoises to pixels.
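To show what "an encoder feeding a diffusion decoder that denoises to pixels" means mechanically, here is a generic conditioned denoising loop. This is a minimal sketch, not Qwen's code: `toy_encoder` and `toy_noise_predictor` are hypothetical stand-ins (the predictor is an oracle that already knows the target, purely so the loop can be checked), a 4-value list replaces real pixels, and the sampler is the standard DDPM update with a linear noise schedule. The source does not say which sampler or schedule Qwen-Image-2.0 uses.

```python
import math
import random

# Linear noise schedule over T steps (an assumption, borrowed from standard DDPM settings)
T = 1000
betas = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]
alphas = [1 - b for b in betas]
alpha_bars = []
prod = 1.0
for a in alphas:
    prod *= a
    alpha_bars.append(prod)


def toy_encoder(prompt: str) -> list[float]:
    # Hypothetical stand-in for the vision-language encoder. Here the conditioning
    # simply *is* the target 4-pixel "image", so the sampler's output can be checked.
    return [0.9, -0.5, 0.1, 0.3] if "slide" in prompt else [0.0, 0.0, 0.0, 0.0]


def toy_noise_predictor(x_t, t, cond):
    # Hypothetical oracle for eps(x_t, t, cond): recovers the exact noise given the target.
    # A real decoder is a trained network; this only exercises the sampling loop.
    return [(x - math.sqrt(alpha_bars[t]) * c) / math.sqrt(1 - alpha_bars[t]) for x, c in zip(x_t, cond)]


def ddpm_sample(cond, seed=0):
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in cond]  # start from pure Gaussian noise
    for t in reversed(range(T)):
        eps = toy_noise_predictor(x, t, cond)
        coef = betas[t] / math.sqrt(1 - alpha_bars[t])
        mean = [(xi - coef * ei) / math.sqrt(alphas[t]) for xi, ei in zip(x, eps)]
        if t > 0:
            sigma = math.sqrt(betas[t])  # one common choice of per-step noise scale
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean  # no noise is added on the final step
    return x


print([round(v, 3) for v in ddpm_sample(toy_encoder("a slide with a title"))])
```

Each step removes a predicted slice of noise, guided by the encoder's conditioning, until the last step lands on the decoded values.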

Access is through invite-only testing of the Alibaba Cloud BaiLian application programming interface (API) and a demo in Qwen Chat.

That’s a wrap for today. See you all tomorrow.