🗞️ OpenAI sweeps in to ink deal with Pentagon as Anthropic is designated a ‘supply chain risk’

AI Selves go mainstream as agent reliability comes under scrutiny, 93% of U.S. jobs face AI exposure, OpenAI lands a historic $110B round, and Anthropic confronts existential risk after U.S. governmen

Read time: 11 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (28-Feb-2026):

🗞️ OpenAI sweeps in to ink deal with Pentagon as Anthropic is designated a ‘supply chain risk’

🗞️ Today’s Sponsor: Pika launched AI Selves, a tool for building a digital twin that looks eerily like the real you.

🗞️ Towards a Science of AI Agent Reliability

🗞️ New study finds 93% of US jobs and $4.5 trillion in labor value are exposed to AI.

🗞️ OpenAI has secured a record-breaking $110B investment round from SoftBank, Nvidia, and Amazon, at $730B pre-money valuation.

🗞️ Trump in a Truth Social post, Orders Government to Stop Using Anthropic After Pentagon Standoff

🗞️ The “supply chain risk” designation can theoretically trigger a cascade of other existential crises for Anthropic.

🗞️ The US government’s declaration for Anthropic as a “supply chain risk” could have massive, existential ripple effects on it, and across the entire tech industry.

🗞️ OpenAI sweeps in to ink deal with Pentagon as Anthropic is designated a ‘supply chain risk’

OpenAI officially announced that it has reached an agreement with the Department of War to deploy advanced AI systems in classified environments.

The deal came hours after the Trump administration banned Anthropic. The government labeled Anthropic a supply chain risk because the company demanded strict limits preventing its models from being used for domestic surveillance or fully independent weapon systems.

Curiously, Altman stated their new military contract actually includes those exact same guardrails against autonomous weapons and mass surveillance. To enforce these boundaries, OpenAI is physically sending software engineers to work directly at the Pentagon to build technical safeguards into the deployment.

This split exposes a fundamental architectural difference in how companies build safety into large language models. Anthropic uses Constitutional AI to bake constraints directly into the model during its initial training phase.

The government also wants to fuse vast databases to search for specific patterns among millions of citizens simultaneously. Anthropic categorizes this data fusion as mass surveillance, whereas OpenAI can technically reframe the exact same computational process as fraud detection.

One government official said the relationship between Anthropic and the government had broken down because Anthropic cofounder and CEO Dario Amodei had offended Department of War leadership, including by publishing blog posts that “the department got upset about.”

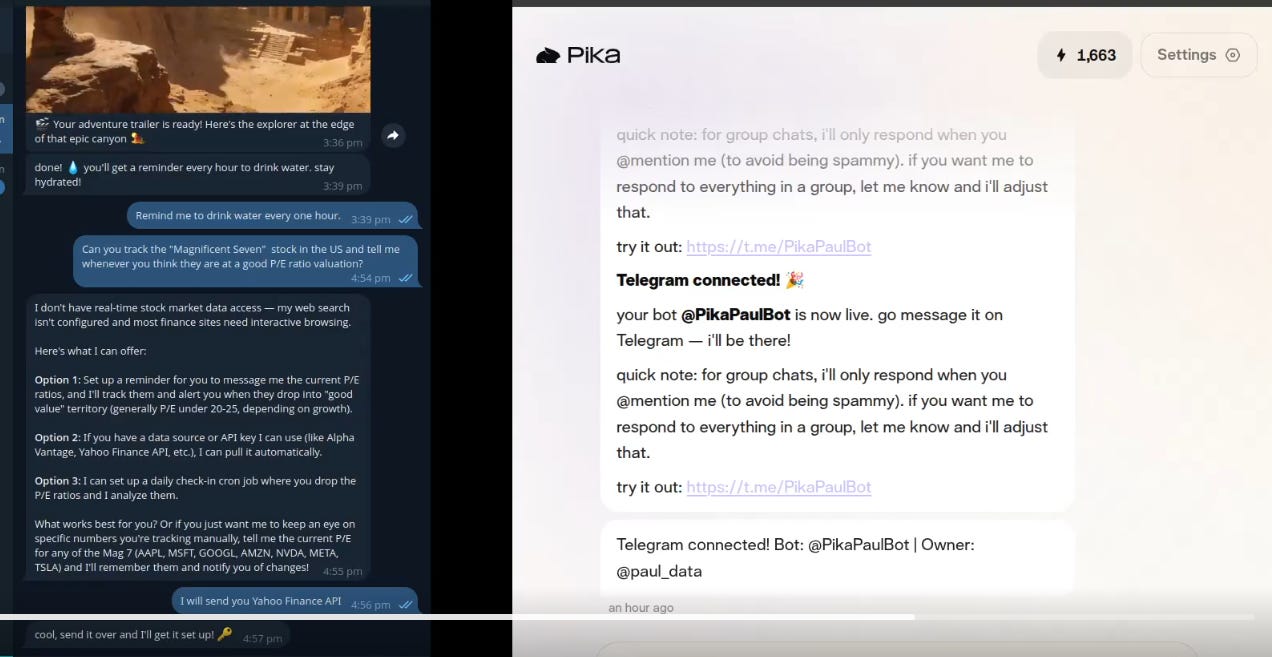

🗞️ Today’s Sponsor: Pika launched AI Selves, a tool for building a digital twin that looks eerily like the real you.

You can set up an AI Self in a few minutes to handle chores, create visuals, or learn your specific routines. Because she has long-term memory, she basically functions as a 24/7 partner that grows alongside you.

I asked her to write about IBM’s stock price disruption from AI fears, in my writing style. Pika asked to connect to BlueSky via an app password. I set that up, and she then connected and posted for me, largely preserving my style.

Connects to WhatsApp, Slack, Sheets, and Notion to act on your behalf

Speaks and argues for you when you don’t want to or don’t have time

Replies to personal messages automatically in your tone

Stays synced with your work so you’re always responsive

Sends reminders to build habits like drinking water

Tracks stocks and delivers automatic market updates

Creates images, videos, and creative content in your style

Learns how you think and communicate, then acts like you

You can train your AI Self on a skill that’s uniquely yours by giving Pika your guidelines, references, examples, and feedback so she can perform the work exactly in your style and taste.

In the above screenshot, I am letting AI track my stock portfolio for me. You just need to supply an API key for live tracking of any stock portfolio. To do that, I connected my Telegram and then gave my Yahoo Finance API key to Pika, and that is all.

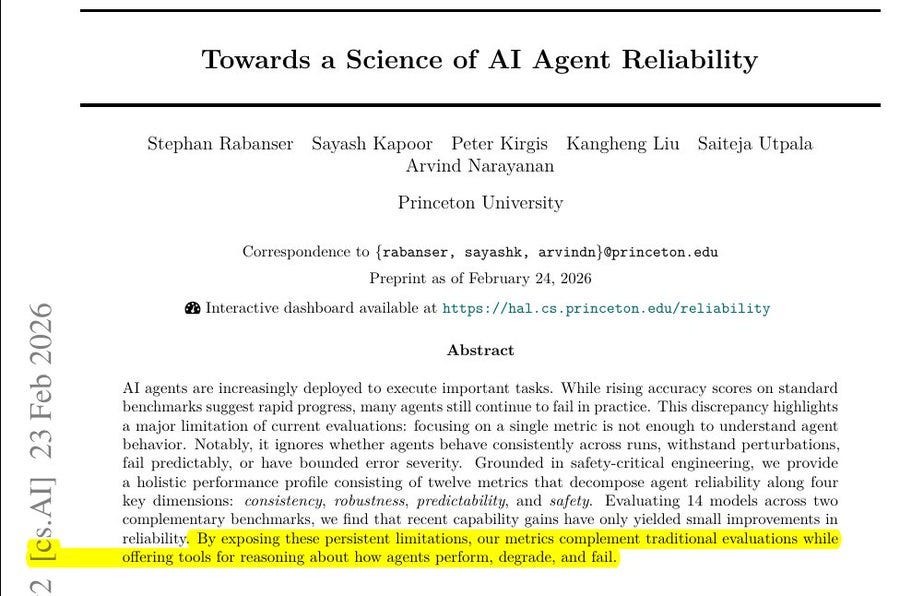

🗞️ Towards a Science of AI Agent Reliability

This new Princeton University paper reveals that AI agents are crushing accuracy benchmarks but completely failing at actual dependability. They tested 14 different models across 500 benchmark runs to rigorously measure their performance under pressure.

The paper argues that these tools are currently too unpredictable to handle serious tasks on their own. The technology industry evaluates LLMs purely on average success rates, completely ignoring whether systems can get the exact same answer twice.

The authors borrowed aviation engineering principles to break true reliability down into consistency, robustness, predictability, and safety. Consistency means the model produces the exact same correct result every single time it tries a task.

Robustness measures if the system survives minor technical glitches or a slight rephrasing of your prompt. Predictability checks if the agent actually knows when it is confused instead of confidently guessing.

Testing proved predictability is overwhelmingly the weakest link across all modern language models. They discovered that simply building larger models does not automatically resolve these massive dependability failures.
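To make the gap between average accuracy and dependability concrete, here is a minimal Python sketch. The data, the function names, and the exact metric definitions are my own illustrative assumptions, not the paper's formulas: consistency is taken as the share of tasks solved on every repeated run, and overconfidence as the agent's mean stated confidence minus its actual accuracy.

```python
# Hypothetical results: each task maps to repeated runs of (succeeded, stated confidence).
# These numbers are made up for illustration; they are not from the paper.
runs = {
    "task_a": [(True, 0.9), (True, 0.9), (True, 0.8), (True, 0.9)],
    "task_b": [(True, 0.9), (False, 0.9), (True, 0.8), (False, 0.9)],
    "task_c": [(True, 0.7), (True, 0.9), (False, 0.9), (True, 0.8)],
}


def average_accuracy(runs):
    """Share of all individual runs that succeeded (the usual benchmark number)."""
    outcomes = [ok for attempts in runs.values() for ok, _ in attempts]
    return sum(outcomes) / len(outcomes)


def consistency(runs):
    """Share of tasks that succeeded on every single run (assumed definition)."""
    solved_every_time = [all(ok for ok, _ in attempts) for attempts in runs.values()]
    return sum(solved_every_time) / len(solved_every_time)


def overconfidence(runs):
    """Mean stated confidence minus actual accuracy; positive means overconfident."""
    confidences = [conf for attempts in runs.values() for _, conf in attempts]
    return sum(confidences) / len(confidences) - average_accuracy(runs)


print(f"average accuracy: {average_accuracy(runs):.2f}")
print(f"consistency:      {consistency(runs):.2f}")
print(f"overconfidence:   {overconfidence(runs):+.2f}")
```

In this toy data the agent succeeds on 9 of 12 runs, yet only 1 of 3 tasks is solved every time, and its stated confidence sits above its real hit rate. That is the pattern the authors are warning about: a healthy average can hide an agent you cannot rely on for any single attempt.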

🗞️ New study finds 93% of US jobs and $4.5 trillion in labor value are exposed to AI.

The speed of this shift is surprising because breakthroughs in agentic AI, which lets models act like independent assistants to finish multi-step goals, are happening much faster than expected. Multimodal AI is also a major factor, allowing systems to understand text, images, and sound at once to tackle complex professional work.

Financial managers are among the most affected groups, with 84% of their daily tasks now considered exposed to AI assistance or automation. Software development is another high-impact area, with some lead engineers reporting in Jan-26 that 100% of their code is currently written by models like Claude. High exposure doesn’t mean these jobs are going away, but it does suggest that workers will spend much more time overseeing AI rather than doing routine tasks.

🗞️ OpenAI has secured a record-breaking $110B investment round from SoftBank, Nvidia, and Amazon, at $730B pre-money valuation.

SoftBank and Nvidia are each contributing $30B to this round, while Amazon is pledging up to $50B in a multi-stage deal. Amazon will provide $15B immediately, with the remaining $35B becoming available if OpenAI eventually goes public or achieves human-level AI capabilities.
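The split can be checked with a few lines of arithmetic. This is a rough sketch using only the figures reported in this section; the post-money number assumes the standard definition (pre-money plus new capital) and counts the full $110B, including Amazon's milestone-gated tranche. The variable names are mine.

```python
# All figures in billions of USD, as reported in this section.
softbank = 30
nvidia = 30
amazon_upfront = 15
amazon_contingent = 35  # released only on an IPO or a human-level AI milestone

amazon_total = amazon_upfront + amazon_contingent
round_total = softbank + nvidia + amazon_total

pre_money = 730
# Assumption: post-money = pre-money + new capital, with the whole round counted.
post_money = pre_money + round_total

# Portion of the round that is not gated on a future milestone.
not_contingent = softbank + nvidia + amazon_upfront

# Implied stake for the new money under the assumption above.
new_investor_share = round_total / post_money

print(f"Amazon total:        ${amazon_total}B")
print(f"Round total:         ${round_total}B")
print(f"Implied post-money:  ${post_money}B")
print(f"Not milestone-gated: ${not_contingent}B")
print(f"New-money stake:     {new_investor_share:.1%}")
```

On these numbers the three commitments add up to the headline $110B, but $35B of it depends on an IPO or a capability milestone, so the portion that does not hinge on a future event is closer to $75B.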

Today’s funding round dwarfs the $30B deal clinched by Anthropic this year and the $41B investment into OpenAI in 2025, which was at the time the largest start-up investment ever. A significant portion of these partnerships involves physical hardware, specifically using 2 gigawatts of Amazon’s custom-made Trainium chips.

They are also expanding their work with Nvidia to use upcoming Vera Rubin systems, which provide the muscle required for both training new models and running them for billions of users. These massive investments are necessary because OpenAI expects its total costs for training and running models to hit $665B by the year 2030.

ChatGPT has grown to 900 million weekly active users, with 50 million individuals and 9 million businesses now paying for the service. They also reported that Codex, their tool for helping people write software code, now has 1.6 million weekly users.

Microsoft, OpenAI’s largest single shareholder, did not participate in the funding round. OpenAI said its deal with Amazon did not impact Microsoft’s “exclusive license and access to intellectual property across OpenAI models and products.”

Investors and sovereign funds are planning to lock in $10B of new stock within the next 30 days. This cash sits on top of the $40B the company currently has, helping it handle losses through 2030. OpenAI is aiming to be cash flow positive by that time.

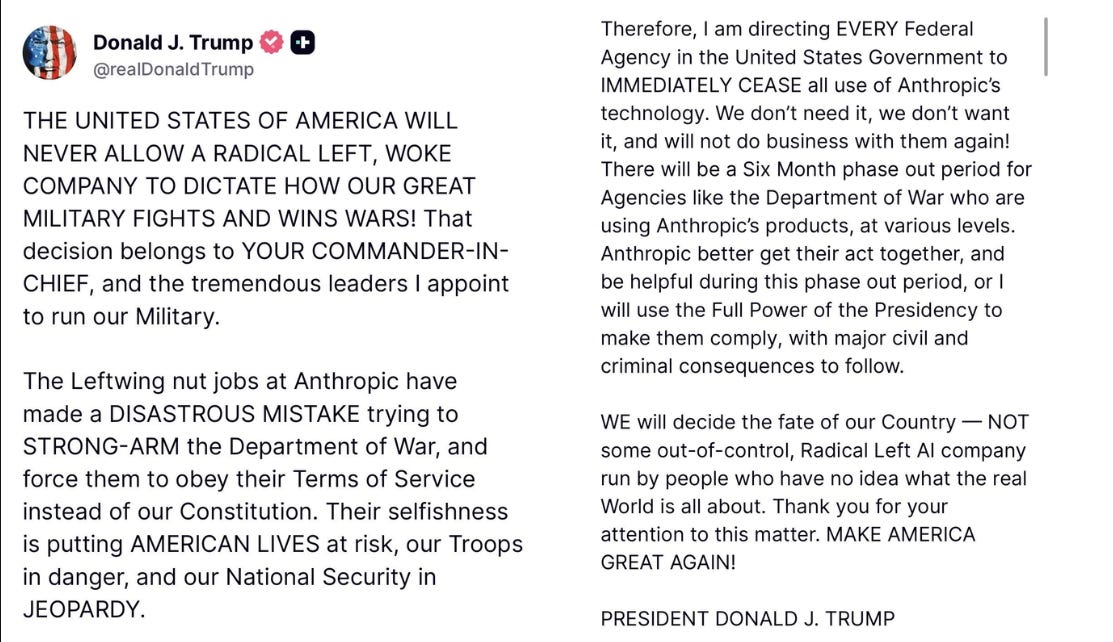

🗞️ President Trump in a Truth Social post, Orders Government to Stop Using Anthropic After Pentagon Standoff

The post reads, in part: “There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.”

🗞️ The “supply chain risk” designation can theoretically trigger a cascade of other existential crises for Anthropic.

Here’s what can happen to Anthropic now in the worst case.

1. The Defense Production Act

The government could theoretically invoke the Defense Production Act. This law might allow the federal government to legally compel Anthropic to hand over its technology or remove safety guardrails against its will, effectively seizing operational control of its product.

2. The Enterprise Contagion

The decree states no contractor doing business with the military may conduct commercial activity with Anthropic. This extends far beyond cloud hosting. Massive data integration firms, defense hardware titans, and enterprise software companies holding federal contracts must sever ties.

3. Eviction from Classified Networks

Anthropic previously held a massive competitive advantage with approval to operate on military classified networks. By refusing the Pentagon’s demands, they lose this status. Competitors will immediately fill the vacuum, permanently entrenching themselves in a defense ecosystem Anthropic may never re-enter.

4. The Allied Domino Effect

If the United States designates a company as a severe national security risk, allied nations notice. Intelligence partners across the “Five Eyes” (US, UK, Canada, Australia, New Zealand) and NATO will likely face immense pressure to follow the American lead, freezing Anthropic out of public sector contracts globally.

5. The Capital Squeeze

Training frontier AI requires billions in continuous funding. Investors despise regulatory uncertainty. The prospect of backing a company legally barred from doing business with the federal government and its contractors is terrifying. Hence, this federal siege could severely bottleneck Anthropic’s future funding rounds.

🗞️ The US government’s declaration for Anthropic as a “supply chain risk” could have massive, existential ripple effects on it, and across the entire tech industry.

Defense Secretary Pete Hegseth’s explicit directive states:

“Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

Because Anthropic does not own data centers, they rely entirely on providers like AWS and Google Cloud to train and run their models.

This new government decree forces those cloud giants into a brutal ultimatum. If forced to choose between multi-billion-dollar defense contracts and hosting a single AI company, these hyperscalers (cloud providers) will almost certainly choose to protect their Pentagon ties. It is highly unlikely they will jeopardize their standing in the JWCC just to keep Anthropic online.

According to a December 2022 official press release from the Department of War, the Joint Warfighting Cloud Capability (JWCC) is a massive, multi-billion-dollar initiative that awarded cloud computing contracts to Amazon Web Services (AWS), Google Cloud, Microsoft Azure, and Oracle.

Now some possibilities.

1. The Literal Threat is Real, but Unprecedented

If the DoD enforces this decree exactly as written, it acts as a total “secondary boycott.” Historically, the U.S. government uses “supply chain risk” designations for foreign adversaries (like Chinese telecom giant Huawei or Russian software firm Kaspersky). Applying this to a domestic U.S. company valued at $380B is entirely unprecedented.

2. Historically, when a company is deemed a supply chain risk, the law dictates that government contractors cannot use the blacklisted technology in their own internal networks, nor can they resell it to the government. For example, Microsoft and Amazon would be barred from offering Anthropic’s Claude to federal agencies or using Claude to write code for defense projects. However, a traditional blacklist does not usually prevent a contractor from simply selling generic cloud hosting services to the blacklisted entity in a completely separate commercial capacity.

3. A total decoupling of Anthropic from the world’s major cloud providers would face massive legal and logistical hurdles. Banning hyperscalers from simply selling server space to Anthropic would represent a dramatic expansion of federal procurement power.

However, the risk remains. Unless the Pentagon legally exempts basic server hosting from its definition of “commercial activity,” Anthropic may face an imminent and total infrastructure blackout.

Now, on Anthropic’s possible legal defense.

Anthropic can seek a Temporary Restraining Order (TRO) to block enforcement of the decree while challenging its validity. The process begins with a filing under the Administrative Procedure Act claiming that the supply chain risk designation is arbitrary and capricious, since those rules have historically targeted foreign adversaries, not domestic firms like Anthropic.

Courts would likely find strong grounds for interim relief, given the unprecedented scope applied to a U.S. company valued at hundreds of billions without prior notice or hearing, in violation of basic due process under the Fifth Amendment. The existential threat from losing access to major cloud providers like AWS and Google Cloud constitutes clear irreparable harm, satisfying a core requirement for such orders, since training and operations would halt abruptly.

Additionally, the balance of equities tilts toward Anthropic, because a short pause during litigation poses minimal national security risk compared to potentially destroying a key American AI innovator while other compliant providers remain available.

However, a win for Anthropic is not guaranteed. Federal judges traditionally grant immense, almost total deference to the Executive Branch when a policy is framed as a matter of “national security.” If the Department of Defense successfully argues that Anthropic’s restrictive terms of service actively endanger military readiness or troop safety, a judge might hesitate to intervene and second-guess the Pentagon.

That’s a wrap for today, see you all tomorrow.