🗞️ Perplexity Unveils 24/7 “Digital Worker” Concept Powered by Mac mini

Also: Citadel finds software job postings rising, AI breaks into deep security research, researchers argue for specialized AI, OpenAI ships Codex for Windows, lawsuits target AI disruption

Read time: 8 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (12-March-2026):

🗞️ Perplexity Unveils 24/7 “Digital Worker” Concept Powered by Mac mini

🗞️ Stack Overflow published a blog “Why demand for code is infinite”

🗞️ Citadel Securities published this graph showing a strange phenomenon.

🗞️ Finding deep security holes in tough software was impossible for AI only 6 months back.

🗞️ AI Must Embrace Specialization via Superhuman Adaptable Intelligence

🗞️ Finally, OpenAI launched Codex for Windows to bring AI coding assistance directly to the native Windows desktop environment.

📢 Lawmakers are weaponizing civil lawsuits against AI companies that can replace licensed professionals.

🗞️ Claude seems to be fixing a super annoying developer problem.

🗞️ Perplexity Unveils 24/7 “Digital Worker” Concept Powered by Mac mini

This is very much OpenClaw but without the security vulnerabilities.

Perplexity expanded its AI agent ecosystem to turn local hardware and enterprise setups into autonomous systems that execute workflows across different applications.

You install their software on a Mac mini and leave it running 24 hours a day. The Mac mini acts as a dedicated local execution node. Perplexity’s cloud handles the complex reasoning and sends execution plans down to this client. The machine then uses local APIs to automate your applications and process files directly. This architecture leverages remote intelligence while keeping all proprietary data strictly local.

Only execution instructions travel the network, preventing data leakage. The system acts as a digital proxy that connects securely to local files or company databases to autonomously build models and run queries.

Users retain full control through a built-in kill switch and an audit trail that logs every action. Computer for Enterprise links directly into platforms like Snowflake to write and execute database queries on its own.
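To make the split between cloud planning and local execution concrete, here is a minimal sketch of a local node that runs a plan, logs every step, and honors a kill switch. This is not Perplexity's actual software: `LocalNode`, the plan format, and the `count_rows` action are all hypothetical names made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LocalNode:
    """Hypothetical local execution node: runs plans, never ships data out."""
    actions: dict[str, Callable[..., object]]        # locally registered actions
    audit_log: list[dict] = field(default_factory=list)
    killed: bool = False

    def kill(self) -> None:
        # Kill switch: every later step is skipped.
        self.killed = True

    def run_plan(self, plan: list[dict]) -> list[str]:
        statuses = []
        for step in plan:
            if self.killed:
                status = "skipped: kill switch engaged"
            elif step["action"] not in self.actions:
                status = "rejected: unknown action"
            else:
                self.actions[step["action"]](**step.get("args", {}))
                status = "ok"
            # Audit trail: one entry per step, whatever the outcome.
            self.audit_log.append({"action": step["action"], "status": status})
            statuses.append(status)
        # Only status strings go back to the planner, not file contents.
        return statuses


# Stand-in for data that stays on the machine (hypothetical).
local_files = {"report.csv": "a,b\n1,2\n3,4\n"}
row_counts = {}


def count_rows(name: str) -> None:
    row_counts[name] = len(local_files[name].splitlines())


node = LocalNode(actions={"count_rows": count_rows})
plan = [
    {"action": "count_rows", "args": {"name": "report.csv"}},
    {"action": "delete_everything"},  # not registered, so it is rejected
]
statuses = node.run_plan(plan)
node.kill()
after_kill = node.run_plan(plan[:1])
```

The design choice worth noticing is that the node only accepts actions it already knows about, so a plan arriving over the network cannot introduce new capabilities on its own.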

This enterprise version saved the company $1.6M by completing three years of work in four weeks. Comet Enterprise introduces a managed browser environment where administrators control exactly which websites the AI can access.
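For a feel of what an administrator-controlled site list involves, here is a small sketch of a domain allowlist check. It is an assumption about the general technique, not Comet Enterprise's actual configuration; `is_allowed` and the example domains are hypothetical.

```python
from urllib.parse import urlsplit


def is_allowed(url: str, allowed_domains: set[str]) -> bool:
    """Return True if the URL's host is an allowed domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower()
    # Match the exact domain or a dot-separated subdomain, so that
    # "example.com.evil.test" does not slip through a naive substring check.
    return any(host == d or host.endswith("." + d) for d in allowed_domains)


allowed = {"example.com", "intranet.test"}  # hypothetical admin policy
```

With this policy, `is_allowed("https://docs.example.com/page", allowed)` passes, while `is_allowed("https://example.com.evil.test/", allowed)` is refused.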

Developers get new APIs for multi-model agent routing and secure sandbox execution. Financial researchers can pull real-time data from 40 sources like FactSet and integrate Plaid for portfolio analytics.

Moving AI execution to a dedicated local machine solves major privacy blockers, but scaling this requires a simpler hardware setup than a standalone Mac mini.

Also check out this great post by Aravind Srinivas “Everything is Computer”

🗞️ Stack Overflow published a blog “Why demand for code is infinite”

Because teams can build so much faster now, companies are experiencing a “Cambrian explosion” of new AI apps and are frantically hunting for engineers to manage them. The post argues that the current AI shift moves developers from manually typing every line of code to acting as AI orchestrators who manage intelligent agents.

Human imagination constantly finds new problems, so the demand for custom software remains practically infinite. Companies are now hiring for specialized positions like human-AI collaboration architects and domain-specific prompt engineers.

Junior developers can now skip basic syntax errors and contribute working features much faster. The core of programming is shifting from pure memorization to high-level system design.

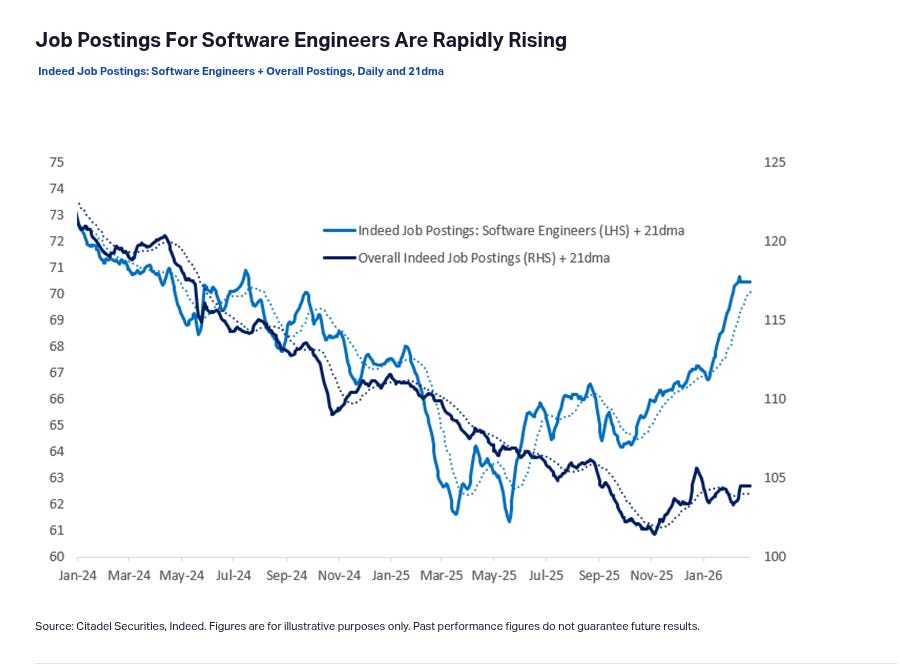

🗞️ Citadel Securities published this graph showing a strange phenomenon.

Job postings for software engineers are actually seeing a massive spike.

Classic example of the Jevons paradox. When AI makes coding cheaper, companies actually may need a lot more software engineers, not fewer.

When software is cheaper to build, companies naturally want to build a lot more of it. Businesses are now putting software into industries and tools where it was simply too expensive before.
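The Jevons logic comes down to one number: how strongly demand reacts to price. Here is a quick sketch with a constant-elasticity demand curve. All figures are made up for illustration and are not taken from the Citadel chart.

```python
def total_spend(price: float,
                base_price: float = 100.0,
                base_quantity: float = 1000.0,
                elasticity: float = 1.5) -> float:
    """Total spend on software when demand follows Q = Q0 * (P / P0) ** -elasticity."""
    quantity = base_quantity * (price / base_price) ** (-elasticity)
    return price * quantity


before = total_spend(100.0)                   # baseline cost per feature
after = total_spend(50.0)                     # AI halves the cost per feature
inelastic = total_spend(50.0, elasticity=0.5) # same price cut, weak demand response
```

When elasticity is above 1, halving the price raises total spend (`after` is larger than `before`), which is the Jevons case. When it is below 1, the same price cut shrinks total spend, so the paradox only holds if demand for software really is that responsive.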

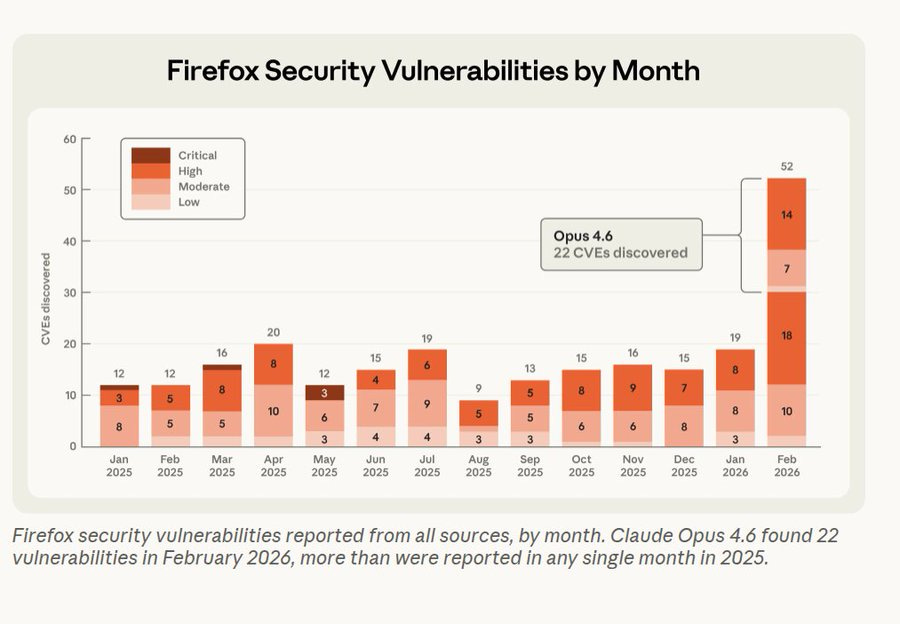

🗞️ Finding deep security holes in tough software was impossible for AI only 6 months back.

Now AI can find them at a much faster pace. Claude Opus 4.6 discovered 22 vulnerabilities in Firefox over the course of two weeks.

Those specific bugs accounted for roughly 20% of all the major Firefox flaws repaired in 2025. Mozilla took action immediately and deployed fixes within hours.

The big thing is that Firefox is a very old project that has gone through decades of strict auditing and fuzzing. Fuzzing means bombarding the software with random inputs to cause failures.
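If you have never seen fuzzing in action, here is a toy version. The parser and its bug are invented for this example and have nothing to do with Firefox's code or Mozilla's fuzzers; the point is only to show the loop of random inputs and recorded crashes.

```python
import random


def parse_record(data: bytes) -> bytes:
    """Toy parser: the first byte declares the payload length. Deliberately buggy."""
    if not data:
        raise ValueError("empty input")  # clean, expected rejection
    length = data[0]
    payload = data[1:]
    # BUG: nothing checks that the declared length fits the payload.
    return bytes([payload[i] for i in range(length)])


def fuzz(target, trials: int = 500, seed: int = 0) -> list[tuple[bytes, str]]:
    """Throw random byte strings at target and collect unexpected exceptions."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    crashes = []
    for _ in range(trials):
        size = rng.randint(0, 8)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except ValueError:
            continue  # the parser rejected the input cleanly
        except Exception as exc:
            crashes.append((data, type(exc).__name__))
    return crashes


crashes = fuzz(parse_record)
```

Every entry in `crashes` is an input whose declared length overruns the payload, which is exactly the kind of shallow bug random inputs are good at finding and why decades of fuzzing leave mostly deeper, logic-level flaws behind.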

Claude still managed to locate blind spots that all previous methods missed. It scanned 6000 C++ files using task verifiers, which are closed environments letting the AI test if its code triggers or fixes bugs.
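A task verifier can be pictured as a small harness that answers two questions about a candidate input: does it trigger the bug, and does the patch stop it? The sketch below is a toy under that assumption, not Anthropic's actual tooling; every function name here is hypothetical.

```python
def crashes(func, data: bytes) -> bool:
    """True if func fails with anything other than a clean ValueError rejection."""
    try:
        func(data)
    except ValueError:
        return False
    except Exception:
        return True
    return False


def vulnerable_parse(data: bytes) -> list[int]:
    length = data[0]
    # No bounds check: reads past the end when length is too large.
    return [data[1 + i] for i in range(length)]


def patched_parse(data: bytes) -> list[int]:
    if not data or data[0] > len(data) - 1:
        raise ValueError("malformed record")
    return [data[1 + i] for i in range(data[0])]


def verify(candidate: bytes, vulnerable, patched) -> dict[str, bool]:
    """Closed check: the candidate must break the old code and not the new code."""
    return {
        "triggers_bug": crashes(vulnerable, candidate),
        "patch_holds": not crashes(patched, candidate),
    }


exploit_report = verify(b"\x05ab", vulnerable_parse, patched_parse)
benign_report = verify(b"\x02ab", vulnerable_parse, patched_parse)
```

Because the verdict comes from running code rather than from the model's own opinion, a claimed vulnerability that fails the check can simply be discarded.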

This kind of scanning capability will soon reveal hidden bugs in all the software we rely on daily. Pairing language models with automated verification loops permanently changes defensive security economics.

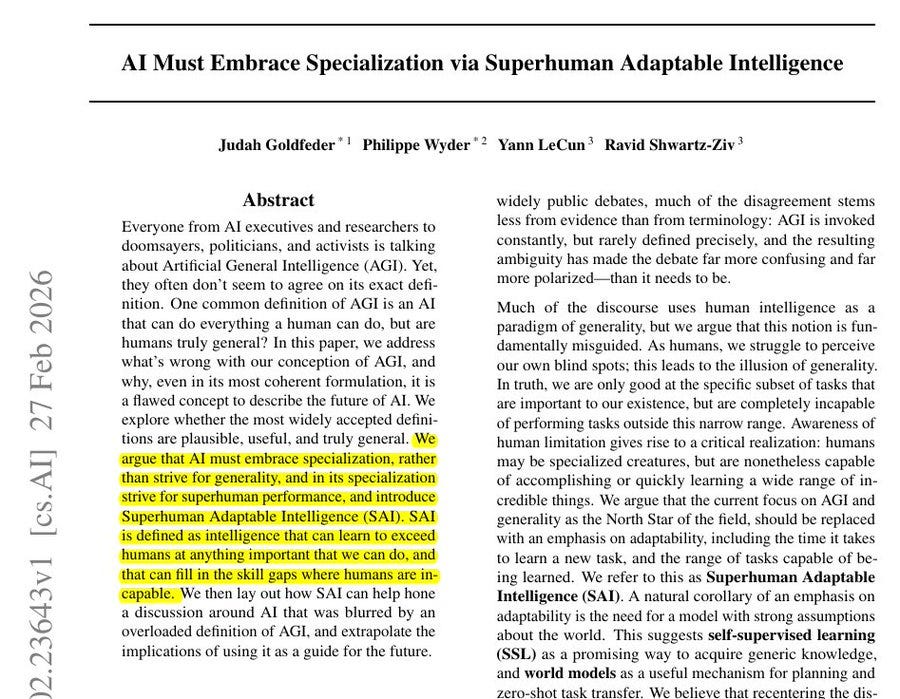

🗞️ A new paper from Yann LeCun (ylecun) and other top researchers proposes a brilliant idea.

“AI Must Embrace Specialization via Superhuman Adaptable Intelligence”

The paper says that chasing general AI is a mistake and we must build superhuman adaptable specialists instead. The whole AI industry is obsessed with building machines that can do absolutely everything humans can do.

But this goal is fundamentally flawed because humans are actually highly specialized creatures optimized only for physical survival. Instead of trying to force one giant model to master every possible task from folding laundry to predicting protein structures, they suggest building expert systems that learn generic knowledge through self-supervised methods.

By using internal world models to understand how things work, these specialized systems can quickly adapt to solve complex problems that human brains simply cannot handle. This shift means we can stop wasting computing power on human traits and focus on building diverse tools that actually solve hard real-world problems.

So overall the researchers here propose a new target called Superhuman Adaptable Intelligence which focuses strictly on how fast a system learns new skills. The paper explicitly argues that evolution shaped human intelligence strictly as a specialized tool for physical survival.

The researchers state that nature optimized our brains specifically for tasks necessary to stay alive in the physical world. They explain that abilities like walking or seeing seem incredibly general to us only because they are absolutely critical for our existence.

The authors point out that humans are actually terrible at cognitive tasks outside this evolutionary comfort zone, like calculating massive mathematical probabilities. The study highlights how a chess grandmaster only looks intelligent compared to other humans, while modern computers easily crush those human limits.

This proves their central point that humanity suffers from an illusion of generality simply because we cannot perceive our own biological blind spots. They conclude that building machines to mimic this narrow human survival toolkit is a deeply flawed way to create advanced technology.

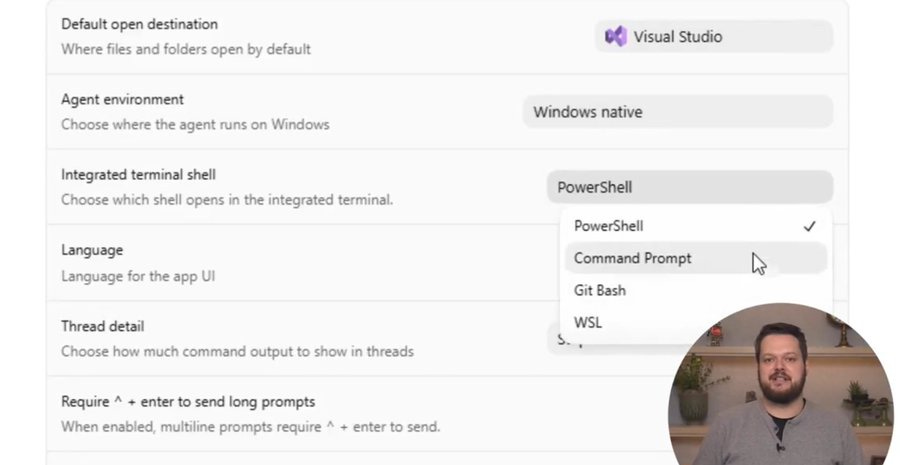

🗞️ Finally, OpenAI launched Codex for Windows to bring AI coding assistance directly to the native Windows desktop environment.

It also supports Windows Subsystem for Linux (WSL).

So now:

• Work with multiple agents in parallel

• Manage long-running tasks

• Review diffs in one place

• Stay in your existing setup, without switching to WSL or VMs

To ensure safety, they built the first Windows-native agent sandbox, a secure zone where AI executes code without damaging the operating system.

The sandbox:

• Uses OS-level controls like restricted tokens to severely limit software permissions

• Uses filesystem Access Control Lists to define exactly which local files the program can modify

• Creates dedicated sandbox users, forcing the tool to operate as an isolated background account
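The file-access part of that idea can be modeled in a few lines. This is only a conceptual sketch of "writes are allowed under these roots and nowhere else". It does not call the Windows ACL APIs, real enforcement happens inside the operating system, and `WRITABLE_ROOTS` and `can_write` are hypothetical names.

```python
import ntpath
from pathlib import PureWindowsPath

# Hypothetical policy: the agent may only modify files under this folder.
WRITABLE_ROOTS = [PureWindowsPath(r"C:\work\repo")]


def can_write(path: str, roots=WRITABLE_ROOTS) -> bool:
    """Return True if path resolves to a location under an allowed root."""
    # Collapse ".." segments first so a traversal cannot escape the root.
    target = PureWindowsPath(ntpath.normpath(path))
    return any(target == root or root in target.parents for root in roots)
```

Here `can_write(r"C:\work\repo\src\main.py")` is allowed, while `can_write(r"C:\work\repo\..\secrets.txt")` and `can_write(r"C:\Windows\System32\drivers\etc\hosts")` are both refused.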

You can deploy multiple agents in parallel to solve different programming challenges simultaneously.

It autonomously manages long-running tasks, freeing up human workers for other priorities.

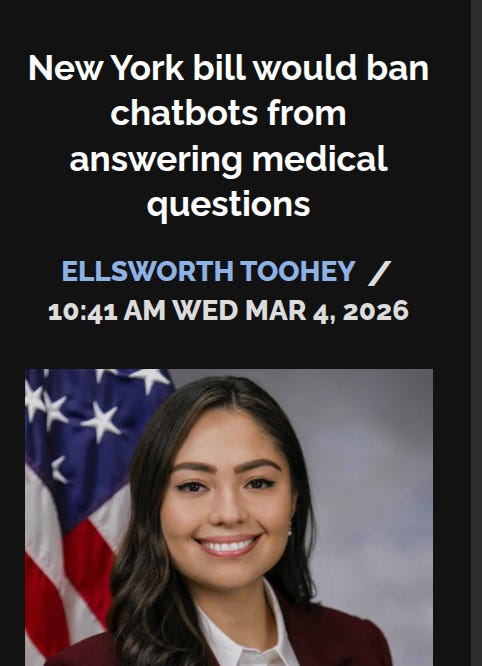

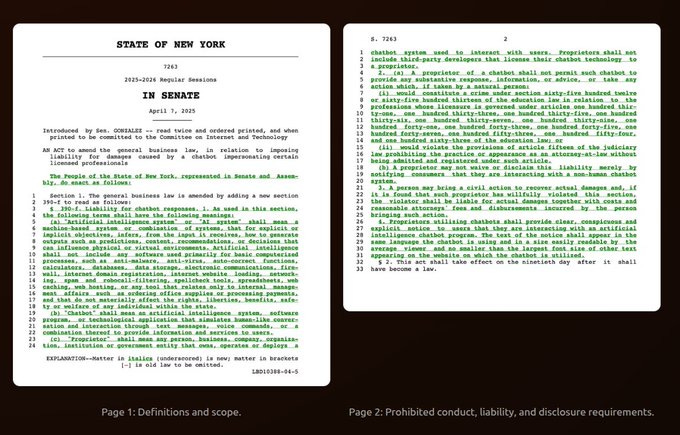

📢 Lawmakers are weaponizing civil lawsuits against AI companies that can replace licensed professionals.

Senate Bill S7263 targets automated systems that act like licensed professionals. The most critical feature is the private right of action which means everyday users can directly sue software owners for damages.

This legal mechanism makes the law stronger than one that relies on government fines alone: users would be able to bring civil lawsuits against chatbot owners to recover damages and attorney’s fees. If passed, the law will take effect 90 days after being signed by the governor.

According to the bill, legal accountability sits with the deployer, ignoring the original model builder.

So OpenAI answers for ChatGPT, but a hospital using an API to build a diagnostic tool, for example, carries the liability for that product. Non-profit groups deploying tenant-focused chatbots have the same legal exposure as the biggest tech companies.

The legislation covers 14 different licensed fields like medicine and engineering. The text bans any software from giving a substantive response that normally requires a professional license.

The bill never actually defines what counts as a substantive response. A user asking a bot to translate medical jargon might accidentally trigger a lawsuit.

Developers cannot protect themselves just by adding a warning label. The law allows regular people to sue the developers directly and collect attorney fees.

This creates a massive financial incentive for lawyers to file repetitive lawsuits against small AI startups. Free tools helping low-income people understand eviction notices will likely shut down to avoid getting sued.

This regulation feels rushed and targets the wrong problem by punishing builders instead of improving the technology. The broad language will likely fail a constitutional challenge since it blocks basic educational speech.

🗞️ Claude seems to be fixing a super annoying developer problem.

Anthropic announced a research preview feature called Auto Mode for Claude Code, expected to roll out by March 12, 2026.

The idea is simple: let Claude automatically handle permission prompts during coding so developers don’t have to constantly approve every action.

It stops those annoying permission prompts during long coding sessions. Before this, you had to use `--dangerously-skip-permissions` to work without interruptions.

That method worked fine but took away all your safety nets. This new auto mode gives us a smarter option. Claude will take care of the specific permission choices on its own while still blocking threats like prompt injections.

You can finally let long tasks run without watching your screen the whole time. Since it is still a research preview, you should run it inside isolated setups like sandboxes or containers for safety.

Expect a small jump in token usage and delay, because the model needs extra time to process the security checks. Once available, you just type `claude --enable-auto-mode` to start. If you manage a team and need people to manually approve actions, you can restrict this feature using Mobile Device Management tools like Jamf and Intune or through configuration files.

That’s a wrap for today, see you all tomorrow.