🗞️ Techie uses ChatGPT and AlphaFold to build DIY mRNA cancer vaccine, saves dog

FlashAttention-4 paper, latest Pew Research, Microsoft released GigaTIME, Stanford and Carnegie Mellon researchers mapped AI benchmarks to real jobs, Andrej Karpathy's new job-tracking tool

Read time: 10 min

📚 Browse past editions here.

(I publish this newsletter daily. Noise-free, actionable, applied-AI developments only.)

⚡In today’s Edition (16-March-2026):

🗞️ Australian uses ChatGPT and AlphaFold to treat his dog’s cancer. A new era of “citizen science” is beginning.

🗞️ Today’s Sponsor: If your AI agents keep retrieving the wrong context, explore HydraDB.

🗞️ The FlashAttention-4 paper is out, aiming to make AI run faster on the newest generation of computer chips.

🗞️ The latest Pew Research report reveals a massive gap between how everyday people and tech experts view AI.

🗞️ Microsoft released GigaTIME, a new AI model that turns cheap medical images into highly detailed protein maps of cancer cells.

🗞️ Stanford and Carnegie Mellon researchers mapped AI benchmarks to real jobs and found they heavily ignore actual human economic work.

🗞️ Andrej Karpathy just put out a tool that looks at AI’s impact on jobs.

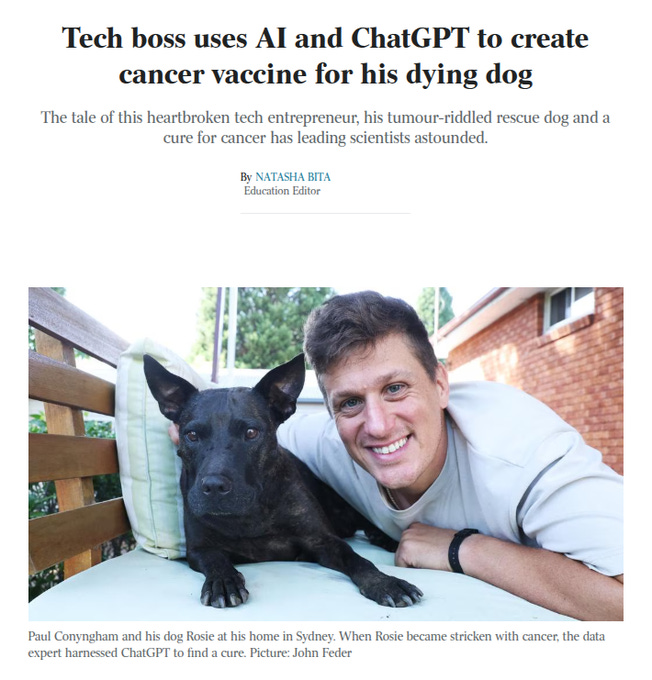

🗞️ Australian uses ChatGPT and AlphaFold to treat his dog’s cancer. A new era of “citizen science” is beginning.

He turned raw genetic data into a custom mRNA vaccine that shrank his dying dog’s tumor by 50%. Paul Conyngham spent $3,000 to sequence the DNA of his dog’s healthy blood and of the cancerous tumor.

He was staring at gigabytes of raw genetic code without having any clue how to read biological data. This is exactly where ChatGPT became the crucial missing link in his process.

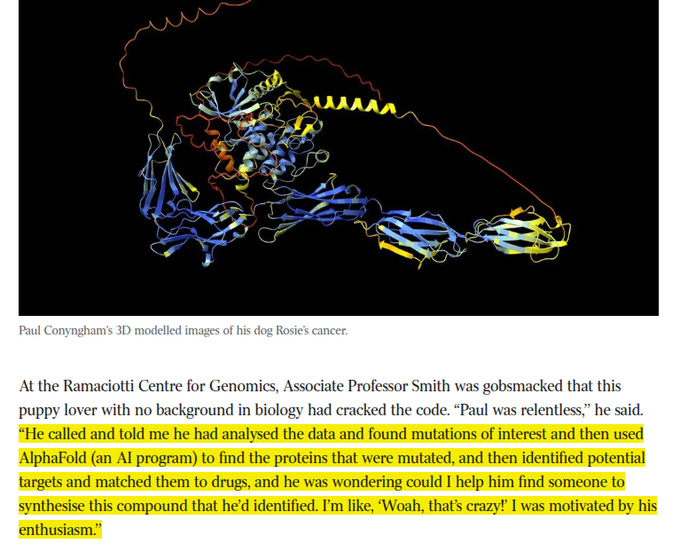

He used ChatGPT as a high-level biological consultant to figure out how to compare the two DNA samples and spot the exact mutations causing the cancer. ChatGPT gave him the step-by-step instructions to run the data pipelines and pointed him toward an AI tool called AlphaFold to map the physical shape of the damaged proteins.
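The core idea of comparing the two samples can be sketched in a few lines. This is only a toy illustration, not the pipeline Conyngham actually ran (the source does not describe it): the `Variant` type, the `somatic_candidates` helper, and every variant listed are made up for the example, and real tumor/normal analysis relies on dedicated alignment and variant-calling tools rather than a set difference.

```python
from typing import NamedTuple


class Variant(NamedTuple):
    """One difference from the reference genome (hypothetical, simplified)."""
    chrom: str
    pos: int
    ref: str
    alt: str


def somatic_candidates(normal: set[Variant], tumor: set[Variant]) -> list[Variant]:
    """Return variants seen in the tumor sample but absent from the healthy sample."""
    # Variants shared by both samples are inherited, so they are filtered out.
    return sorted(tumor - normal)


# Made-up variants, for illustration only.
normal = {Variant("chr1", 1050, "A", "G"), Variant("chr5", 88210, "C", "T")}
tumor = normal | {Variant("chr9", 40321, "G", "A")}

print(somatic_candidates(normal, tumor))
```

Whatever survives the subtraction is a candidate tumor-specific mutation, which is the kind of target a personalized vaccine would be designed around.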

The chatbot basically translated complex oncology concepts so he could write a half-page chemical recipe for an mRNA vaccine. This mRNA is just a genetic instruction manual that tells the immune system how to recognize and attack those specific mutated cancer cells.

University researchers were blown away by his formula and manufactured the physical vaccine for him. A veterinary expert then injected the dog, and within weeks the massive tumor had halved in size.

A leading university scientist was shocked that a data engineer with zero biology training had used AI to pinpoint his dog’s cancer mutations and design a specific treatment. He was impressed enough by this solo effort that he agreed to help manufacture the drug.

🗞️ Today’s Sponsor: If your AI agents keep retrieving the wrong context, explore HydraDB.

HydraDB is production-grade infrastructure designed to solve the “memory fragmentation” that plagues standard RAG systems.

And they just raised $6.5M to kill vector databases.

While traditional setups store disconnected text snippets, HydraDB utilizes a Sliding Window Inference Pipeline to resolve pronouns and context before storage, ensuring every data “chunk” remains semantically complete.

The core innovation is its Git-Style Versioned Temporal Graph. Instead of overwriting old data, it treats user preferences and facts like a version-controlled codebase. This allows agents to track when and why information changed, providing a chronological map of truth rather than a flat list of text.
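To make the "version-controlled facts" idea concrete, here is a minimal sketch of an append-only fact store. It is not HydraDB's API or implementation; `FactVersion`, `VersionedFacts`, `set`, and `as_of` are hypothetical names invented for this example, and the integer timestamps stand in for real clock times.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class FactVersion:
    value: str
    timestamp: int  # logical time; a real system would use wall-clock time
    reason: str     # why the fact changed


@dataclass
class VersionedFacts:
    """Append-only store: updates add a new version instead of overwriting."""
    history: dict[str, list[FactVersion]] = field(default_factory=dict)

    def set(self, key: str, value: str, timestamp: int, reason: str) -> None:
        self.history.setdefault(key, []).append(FactVersion(value, timestamp, reason))

    def as_of(self, key: str, timestamp: int) -> Optional[FactVersion]:
        """Return the newest version recorded at or before `timestamp`."""
        candidates = [v for v in self.history.get(key, []) if v.timestamp <= timestamp]
        return max(candidates, key=lambda v: v.timestamp) if candidates else None


facts = VersionedFacts()
facts.set("user.diet", "vegetarian", timestamp=1, reason="stated during onboarding")
facts.set("user.diet", "vegan", timestamp=5, reason="user corrected the agent")

print(facts.as_of("user.diet", 3))  # the older version is still retrievable
print(facts.as_of("user.diet", 9))
```

Because nothing is overwritten, an agent can answer both "what is true now?" and "what did we believe at time 3, and why did it change?".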

By combining standard vector search with this temporal graph, HydraDB achieves 90.23% context recall and sub-20ms latency. For developers, it means moving away from brittle, manual context management toward a standardized foundation that allows 10,000+ agents to operate with a shared, reliable understanding of state. It isn’t just a database; it’s the persistent memory layer that makes long-term AI agents viable in production.

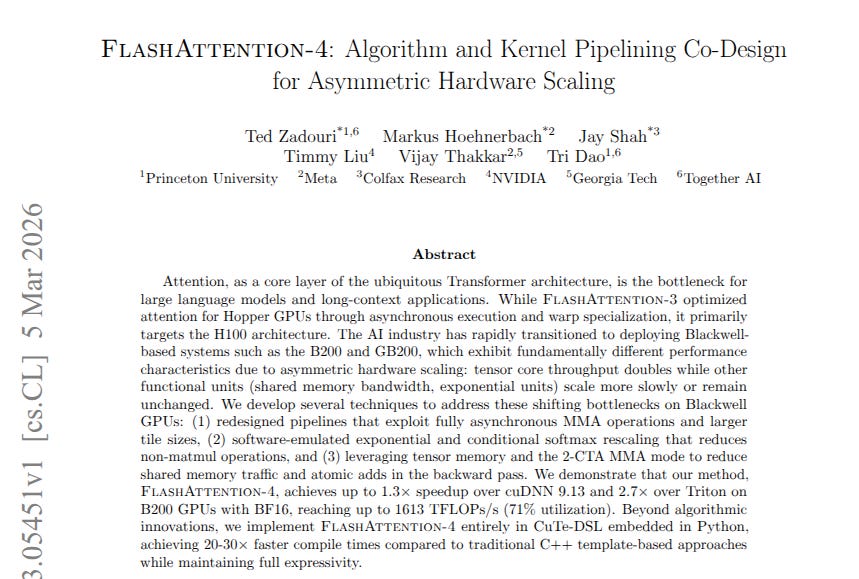

🗞️ The paper introduces FlashAttention-4 to make AI run faster on the newest generation of computer chips.

FlashAttention-4: Algorithm and Kernel Pipelining Co-Design for Asymmetric Hardware Scaling.

Researchers from Princeton University, Meta, NVIDIA, and more have developed clever new pipelines, re-engineered core computations, and optimized memory usage to master the unique scaling challenges of NVIDIA’s latest Blackwell GPUs. While the math processors inside new Blackwell GPUs doubled in speed, the memory system stayed the same, creating a massive traffic jam.

This means the expensive chip spends much of its time idle, waiting for data to move around. In other words, softmax and shared-memory traffic are the bottlenecks, so the new code is built specifically to relieve them. With FlashAttention-4, the B200 hits 1600 TFLOPs/s, outperforming both cuDNN and Triton by targeting those hardware limits directly.

----

The researchers solved this by writing a completely new schedule that runs the math computation and the memory loading at the exact same time, while also shifting some specific calculations to entirely unused parts of the chip. This prevents the hardware from sitting idle, making the AI run much faster and saving massive amounts of time and electricity for anyone running a large language model.
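For readers who want a feel for why softmax sits on the critical path, here is a small pure-Python sketch of the tiled "online softmax" trick that the FlashAttention family is built on. It is not FlashAttention-4's kernel and shows none of its pipelining or Blackwell-specific scheduling. The function names and sample numbers are made up, and the usual 1/sqrt(d) scaling is omitted to keep it short.

```python
import math


def attention_reference(q, keys, values):
    """Plain softmax attention for one query: needs every score at once."""
    scores = [sum(a * b for a, b in zip(q, k)) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(len(values[0]))]


def attention_tiled(q, keys, values, tile=2):
    """Same result, but keys/values are consumed one small tile at a time."""
    running_max = -math.inf
    running_sum = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), tile):
        k_tile = keys[start:start + tile]
        v_tile = values[start:start + tile]
        scores = [sum(a * b for a, b in zip(q, k)) for k in k_tile]
        new_max = max(running_max, max(scores))
        # Rescale what was accumulated so far to the new running maximum.
        scale = math.exp(running_max - new_max)  # 0.0 on the first tile
        running_sum *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_tile):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


q = [1.0, 0.5]
keys = [[0.2, 0.1], [1.0, -0.3], [0.5, 0.5], [-0.7, 0.9], [0.0, 1.2]]
values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 3.0], [0.5, 0.5]]

print(attention_reference(q, keys, values))
print(attention_tiled(q, keys, values))
```

Each tile needs exponentials and a rescale step on top of the matrix math, which is the kind of non-matmul work that becomes the bottleneck when the matmul units get faster and everything else does not.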

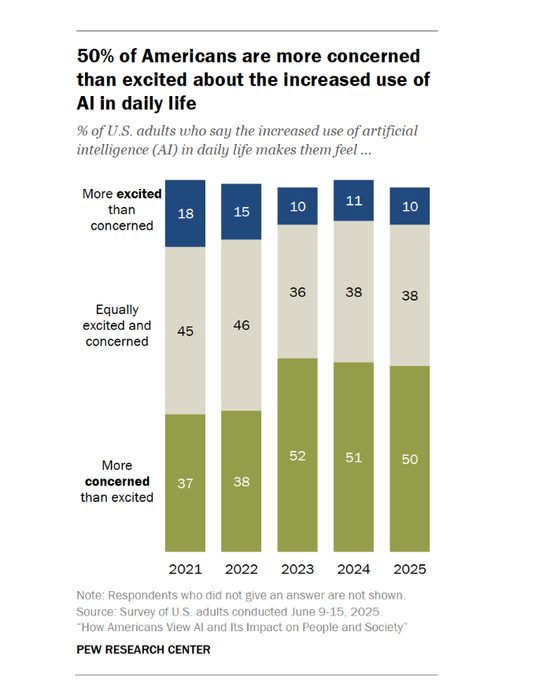

🗞️ The latest Pew Research report reveals a massive gap between how everyday people and tech experts view AI.

50% of Americans are more concerned than excited about AI.

The most shocking detail is that teenagers are widely using these tools to cheat on their homework.

Roughly 60% of students between the ages of 13 and 17 say their classmates use chatbots to bypass schoolwork. Nearly 33% of those teenagers report that this kind of digital cheating happens extremely often in their classrooms.

Only 24% of adults believe AI will actually make education better over the next 20 years.

Workplace adoption is climbing, with 21% of adults using AI on the job.

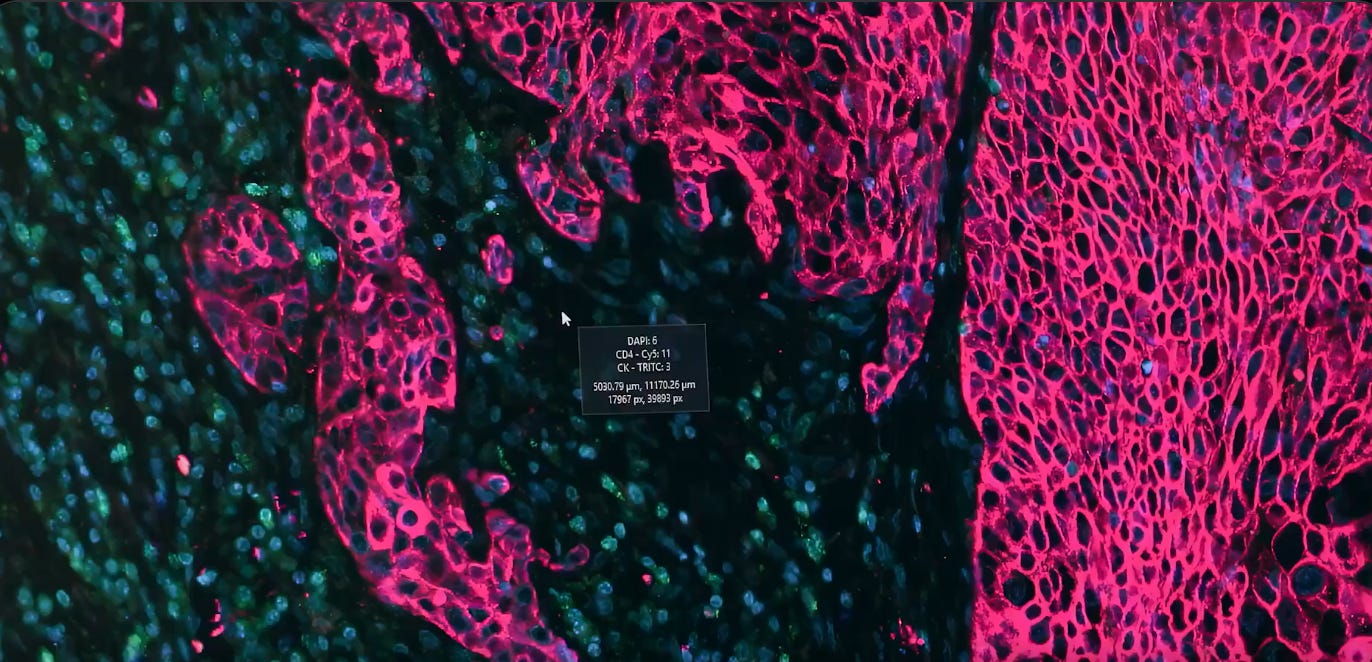

🗞️ Microsoft released GigaTIME, a new AI model that turns cheap medical images into highly detailed protein maps of cancer cells.

Standard tumor tissue slides cost only $5 to $10. But running the chemical protein test on a physical tissue sample, the one that maps out how a tumor fights the immune system, costs thousands of dollars because the materials and the imaging process are very difficult to scale.

The GigaTIME AI looks at the basic shapes in the cheap digital image and predicts exactly where those hidden proteins are located. This means researchers can use software to mathematically simulate the expensive chemical test just by analyzing the cheap image.

In other words, GigaTIME has practically turned a medical test that normally costs over $2,000 per patient into a fast digital prediction based on a standard $5 hospital image. It shows again that software can bypass hardware limits to find entirely new biological patterns.

Microsoft trained GigaTIME on 40M cells to generate these complex virtual test results directly from the cheap slides. Processing 14,256 patients yielded a massive database of 300,000 detailed medical images. They discovered 1,234 new connections between specific cell proteins and patient survival rates.

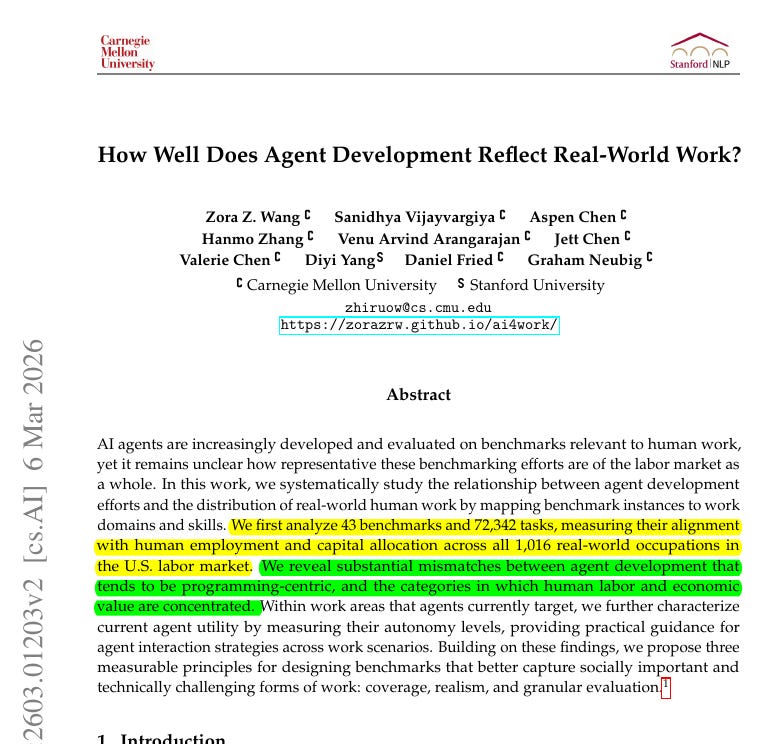

🗞️ How Well Does Agent Development Reflect Real-World Work?

The Stanford and Carnegie Mellon researchers found that AI benchmarks focus almost exclusively on programming and math, which make up only 7.6% of actual jobs. To get there, the team mapped 43 benchmarks and over 72,000 tasks against a massive government occupational database.

The authors discovered that developers focus almost entirely on building agents for software engineering because it offers easy automatic grading. Highly digitized and valuable fields like management and legal work represent a massive part of the economy but get almost zero attention.

Furthermore, benchmark tasks usually require simple information gathering while completely ignoring the complex interpersonal skills needed in real workplaces. In other words, they say current AI agent benchmarks are fundamentally disconnected from the high-value tasks that drive the modern labor market.

🗞️ Andrej Karpathy just put out a tool that looks at AI’s impact on jobs.

He also deleted the original GitHub repo very quickly.

Basically, he pulled 342 job types from the Bureau of Labor Statistics and had an LLM score each one from 0 to 10 based on AI exposure. The average exposure score is 5.3. The higher the score, the higher the implied chance the job gets wiped out by AI.

Software developers: 9/10

Medical transcriptionists: 10/10

Lawyers: 8/10

General office clerks: 9/10

Basically, any screen-based job is in trouble. High-exposure jobs (score of 7 or higher) account for $3.7T in annual wages, pre-computed as ∑(BLS employment count × BLS median annual wage) over exactly those occupations whose Gemini Flash score is ≥7.
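The wage formula is simple enough to sketch. This is not Karpathy's code (the repo is gone), just the same sum written out under stated assumptions: the `Occupation` fields and the `high_exposure_wages` helper are hypothetical names, and the three sample rows are invented placeholders, not BLS figures.

```python
from dataclasses import dataclass


@dataclass
class Occupation:
    title: str
    employment: int      # number of workers
    median_wage: float   # median annual wage in dollars
    exposure: int        # LLM-assigned AI exposure score, 0 to 10


def high_exposure_wages(occupations: list[Occupation], threshold: int = 7) -> float:
    """Sum employment x median wage over occupations scoring at or above the threshold."""
    return sum(o.employment * o.median_wage
               for o in occupations
               if o.exposure >= threshold)


# Placeholder rows for illustration only; these are NOT real BLS numbers.
sample = [
    Occupation("Occupation A", employment=1_000, median_wage=50_000.0, exposure=9),
    Occupation("Occupation B", employment=2_000, median_wage=40_000.0, exposure=4),
    Occupation("Occupation C", employment=500, median_wage=80_000.0, exposure=7),
]

print(high_exposure_wages(sample))  # 1,000 x 50,000 + 500 x 80,000 = 90,000,000.0
```

Note that the total depends heavily on where the threshold sits, and median wage times headcount only approximates an occupation's true wage bill.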

That’s a wrap for today, see you all tomorrow.
